BeijingChef 🇺🇦

@ChefBeijing

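AI: the final chapter of the era. The first fund of QMHT Investment, a private equity fund, has successfully completed a US$2.5 million investment in OpenAI and Anthropic.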

What the SpaceX–Anthropic Deal Means

Two weeks ago, we published a note laying out what GPT-5.5's release implied. The conclusion was simple: whoever secures compute first, in greater volume, and with greater reliability ultimately takes the win. With OpenAI's 30GW roadmap dwarfing Anthropic's 7–8GW, we closed by arguing that the structural advantage on compute sat with OpenAI. Less than a fortnight later, that conclusion is being tested.

On May 6, Anthropic signed a single-tenant lease for the entirety of Colossus 1 with SpaceXAI, the infrastructure subsidiary that consolidates Elon Musk's xAI and SpaceX. The asset carries more than 220,000 GPUs and 300MW of power and, crucially, is scheduled to come online within this month. It served as the capstone of Anthropic's April blitz, which added 13.8GW of cumulative capacity over the span of a single month. On headline numbers alone, OpenAI took more than a year to stack 18GW; Anthropic has put 13.8GW in the ground in thirty days.

The takeaways break down into three.

First, the compute pecking order has been redrawn again. Anthropic has now swept up the AWS expansion (5GW, with $100B+ in spend commitments over a decade), Google + Broadcom (3.5GW of TPU), Google Cloud (5GW alongside a $40B investment), and now SpaceXAI's Colossus 1 (0.3GW). Cumulative committed capacity, inclusive of pre-April allocations, sits at 14.8GW. This is still only half of OpenAI's 2030 target of 30GW, but the fact that the SpaceX lease will be live inside a month makes "deliverability" a qualitatively different proposition.

Second, Elon Musk is the plaintiff in an active lawsuit against OpenAI and, at the same time, the supplier handing 220,000+ GPUs and 300MW of power, in one block, to OpenAI's most formidable competitor. The timing matters: the deal was struck in the middle of the Musk–Altman trial. We read this as a deliberate pincer with OpenAI in the middle. In the courtroom, Musk works to dismantle the moral legitimacy of OpenAI's leadership; in the market, he arms Anthropic to absorb OpenAI's revenue and user base.

Third, the structure is financial-engineering perfection: a clean win-win for both sides. xAI can recognize $6B of annual revenue from a single contract, an amount that almost precisely offsets its Q1 2026 annualized net loss of $6B. It also accelerates the cleanup of SpaceXAI's pre-IPO balance sheet, with the entity now being floated at around $1.75T. Anthropic, on the other side, converts roughly $5B of spend into what it expects to be $15B of ARR via the coming inference-revenue surge.

(Mirae Asset Securities, May 8, 2026)
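As a quick sanity check on the capacity arithmetic in the note above, here is a minimal Python sketch. It uses only the figures quoted in the note; the variable and function names are made up for illustration, and the "implied pre-April" number is just the difference between the two quoted totals, not something the note states directly.

```python
# Capacity figures as quoted in the note, in megawatts.
# Integers are used so the sums are exact (no floating-point rounding).
APRIL_DEALS_MW = {
    "AWS expansion": 5_000,
    "Google + Broadcom (TPU)": 3_500,
    "Google Cloud": 5_000,
    "SpaceXAI Colossus 1": 300,
}
CUMULATIVE_MW = 14_800     # quoted total, inclusive of pre-April allocations
OPENAI_TARGET_MW = 30_000  # quoted OpenAI 2030 target


def april_total_mw(deals):
    """Sum the capacity of the deals listed for April."""
    return sum(deals.values())


def share_of_target(committed_mw, target_mw):
    """Committed capacity as a fraction of a target capacity."""
    return committed_mw / target_mw


april_mw = april_total_mw(APRIL_DEALS_MW)
# Assumption: pre-April capacity is inferred as quoted cumulative minus April deals.
implied_pre_april_mw = CUMULATIVE_MW - april_mw

print(f"April deals: {april_mw / 1000:.1f} GW")
print(f"Implied pre-April: {implied_pre_april_mw / 1000:.1f} GW")
print(f"Share of OpenAI 2030 target: {share_of_target(CUMULATIVE_MW, OPENAI_TARGET_MW):.1%}")
```

The four April deals sum to 13,800 MW, matching the 13.8GW headline, and 14.8GW works out to a little under half of the 30GW target, consistent with the note's "only half".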

Figure 03 comes with a specialized charging stand. It anchors the robot so the motors can relax and stop using power to balance, while charging it inductively at 2 kW through the soles of its feet. Robots can do 4 to 5 hours of work after 1 hour of charging.

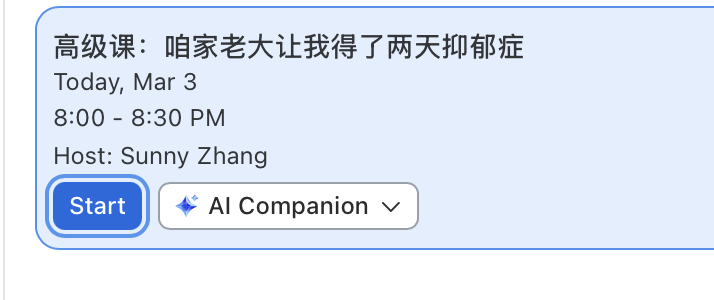

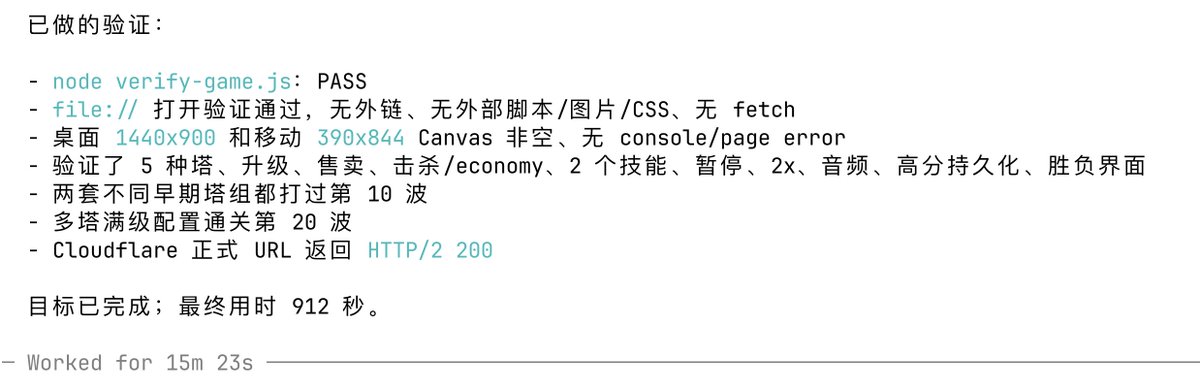

With this going viral I forced myself to only use Codex CLI for the last few days. Took me a while to get used to the more autistic way codex speaks, but now it's good. I admit it, I was wrong. Codex fast + tmux + /goal is fucking insane. @sama you guys cooked

The latest generation Atlas comes with self-swappable batteries for continuous operation. The robot has a sustained payload capacity of 66 lb (30 kg). It’s built to be a workhorse in industrial settings.

My conversation with Tobi Lütke (@tobi), co-founder and CEO of Shopify.

0:00 Companies as Social Technology
5:27 The Value of Reading Books: Cheat Codes for Life
7:28 Post-IPO Crisis: Cosplaying as a CEO
7:54 Competition vs Rivalry: The Power of Healthy Competition
16:02 COVID as a Turning Point: Rebuilding the Executive Team
18:21 Hiring Founders: Building a Team of High-Agency People
26:49 Shopify OS: Engineering the Company from First Principles
36:48 Compensation Innovation: Giving Employees Full Agency
40:41 The Psychology of Identity and Affirmations
48:43 Differentiation Over Perfection: Making It Your Own
50:31 Context Podcast: Documenting Decision-Making
1:26:36 The IPO Decision: Going Against Silicon Valley Orthodoxy
1:35:08 Building a Company Worth Working For
1:41:50 Hiring for Spikiness: Finding Non-Conformists
1:48:28 Office Design Philosophy: Creating Space for Excellence
1:58:54 Video Games as Business Training: StarCraft Lessons
2:07:06 AI Revolution: 2026 and Beyond
2:11:44 Focus on Craft: The Unquantifiable Elements of Excellence
2:21:08 Survivorship Bias: The Importance of Entrepreneurial Exposure
2:23:22 Closing

Includes paid partnerships.

For the last few months, and even last week, the consensus narrative was that Anthropic was running away with it and OpenAI was dead. I was one of the VERY few people who said that was the wrong idea and that, as OpenAI trained new models on newer, more advanced Nvidia GPUs, they would bounce back strong. I was right. GPT-5.5 is a big hit with devs. It's a race again. The game is back on.