Wu Lin


@LinYorker

Postdoctoral fellow at @VectorInst. ML PhD at UBC. Mathematical and computational structures for ML. Geometric and algebraic methods.

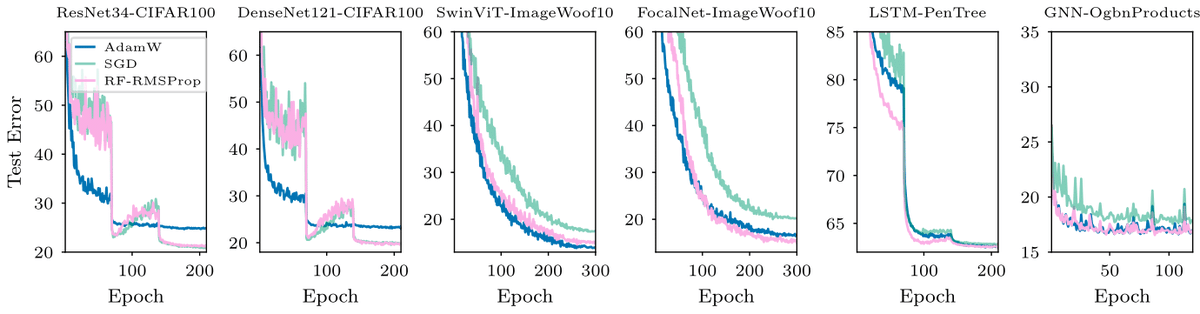

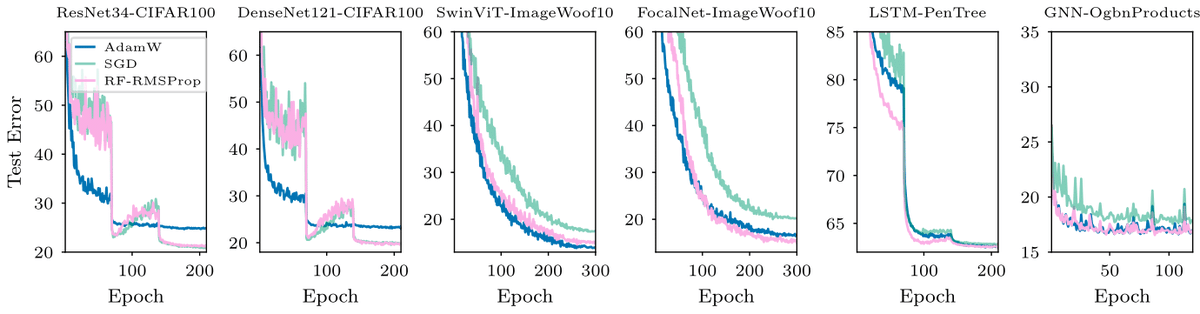

We released "The Newton--Muon Optimizer". We show that Muon is secretly an implicit Newton method, and we use this insight to build a better one. 1/n Paper: arxiv.org/abs/2604.01472

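At the maximum likelihood estimator (MLE):
Key property: observed Fisher information = Fisher information (exactly for exponential families, approximately for large samples in general)
2nd-order Taylor expansion of the log-likelihood around the MLE θ̂: ℓ(θ) ≈ ℓ(θ̂) − ½ I(θ̂)(θ − θ̂)²
- curvature of the log-likelihood = Fisher information
- radius of the osculating circle = 1/curvature = variance of the MLE for large sample size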
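A small numerical check of these claims, as a sketch under assumptions not in the post: a Poisson model with rate λ, a made-up toy sample, and the curvature of the log-likelihood graph estimated by central finite differences. For a Poisson sample the MLE is the sample mean, the observed information at the MLE is Σx/λ̂², and the expected information is n/λ̂; the code checks that these agree and that the osculating-circle radius matches the large-sample variance λ̂/n.

```python
import math

def poisson_loglik(lam, xs):
    """Poisson log-likelihood: sum of x*log(lam) - lam - log(x!)."""
    return sum(x * math.log(lam) - lam - math.lgamma(x + 1) for x in xs)

def graph_curvature(f, t, h=1e-3):
    """Curvature of the graph y = f(t): |f''| / (1 + f'^2)^(3/2), via central differences."""
    d1 = (f(t + h) - f(t - h)) / (2 * h)
    d2 = (f(t + h) - 2 * f(t) + f(t - h)) / h**2
    return abs(d2) / (1 + d1**2) ** 1.5

xs = [3, 1, 4, 1, 5, 9, 2, 6]  # toy counts, made up purely for illustration
n = len(xs)
lam_hat = sum(xs) / n          # Poisson MLE = sample mean

observed_info = sum(xs) / lam_hat**2  # -l''(lam_hat), computed from the data
expected_info = n / lam_hat           # n * I_1(lam), evaluated at lam_hat

# At the maximum l'(lam_hat) = 0, so the graph curvature reduces to |l''| = observed info.
kappa = graph_curvature(lambda t: poisson_loglik(t, xs), lam_hat)
radius = 1 / kappa                    # radius of the osculating circle
asymptotic_var = 1 / expected_info    # large-sample variance of the MLE, = lam_hat / n

print(f"observed info = {observed_info:.6f}, expected info = {expected_info:.6f}")
print(f"osculating radius = {radius:.6f}, asymptotic variance = {asymptotic_var:.6f}")
```

With this sample both informations equal 64/31 ≈ 2.0645, and the radius and the variance are both about 0.4844. The Poisson model was chosen because it is an exponential family, where the observed and expected information coincide exactly at the MLE; for other models they agree only approximately.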

@MLCommons #AlgoPerf results are in! 🏁 The $50K prize competition yielded 28% faster neural net training, with non-diagonal preconditioning beating Nesterov Adam. New SOTA for hyperparameter-free algorithms too! Full details in our blog: mlcommons.org/2024/08/mlc-al… #AIOptimization #AI