TimDarcet

x.com/Fabien_Mikol/s… Luc Julia is to AI what Jancovici is to climate: a useful idiot who diverts the debate from the real issues. Saying "AI is just a tool, no need to panic" lulls people to sleep while a massive percentage of jobs is about to be swept away in the coming years. While Julia tours the TV studios to reassure everyone, China and the US are advancing at full speed. And we comfortably go back to sleep. The result? Entrepreneurs who don't launch, politicians who don't act, and a France that will wake up one morning realizing it has missed the train. What we need is not people who downplay what is coming. What we need is a collective wake-up shock.

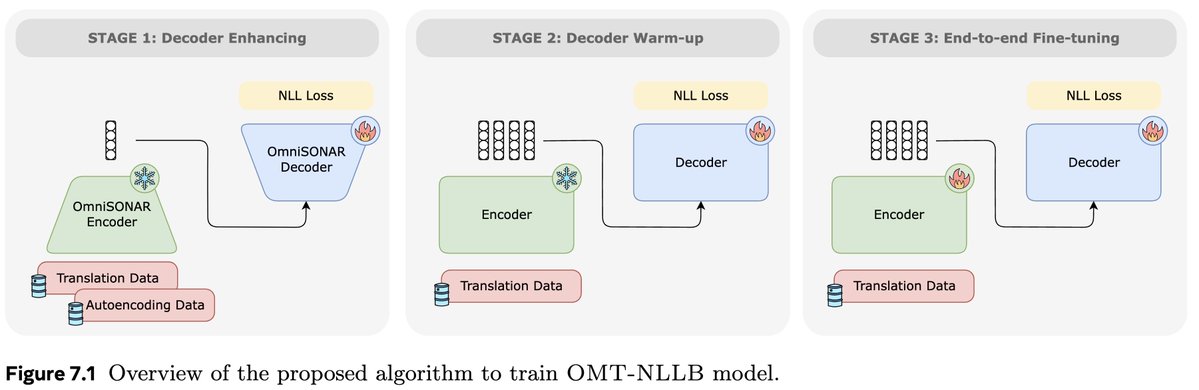

pro-tip: you can fire up stardew on the second screen while you listen to semi-educational topics such as:
- test-time training for deep exploration by jonas hübotter
- training large scale video models by dilara gökay
- continual learning for foundational models by ameya prabhu