Tim Stephenson

16.2K posts

@TimStephenson

Professional Wedding, Commercial & Lifestyle Photographer; Head of DevOps for Allies Computing (all views my own). Also: https://t.co/Nu9eG7qm8e

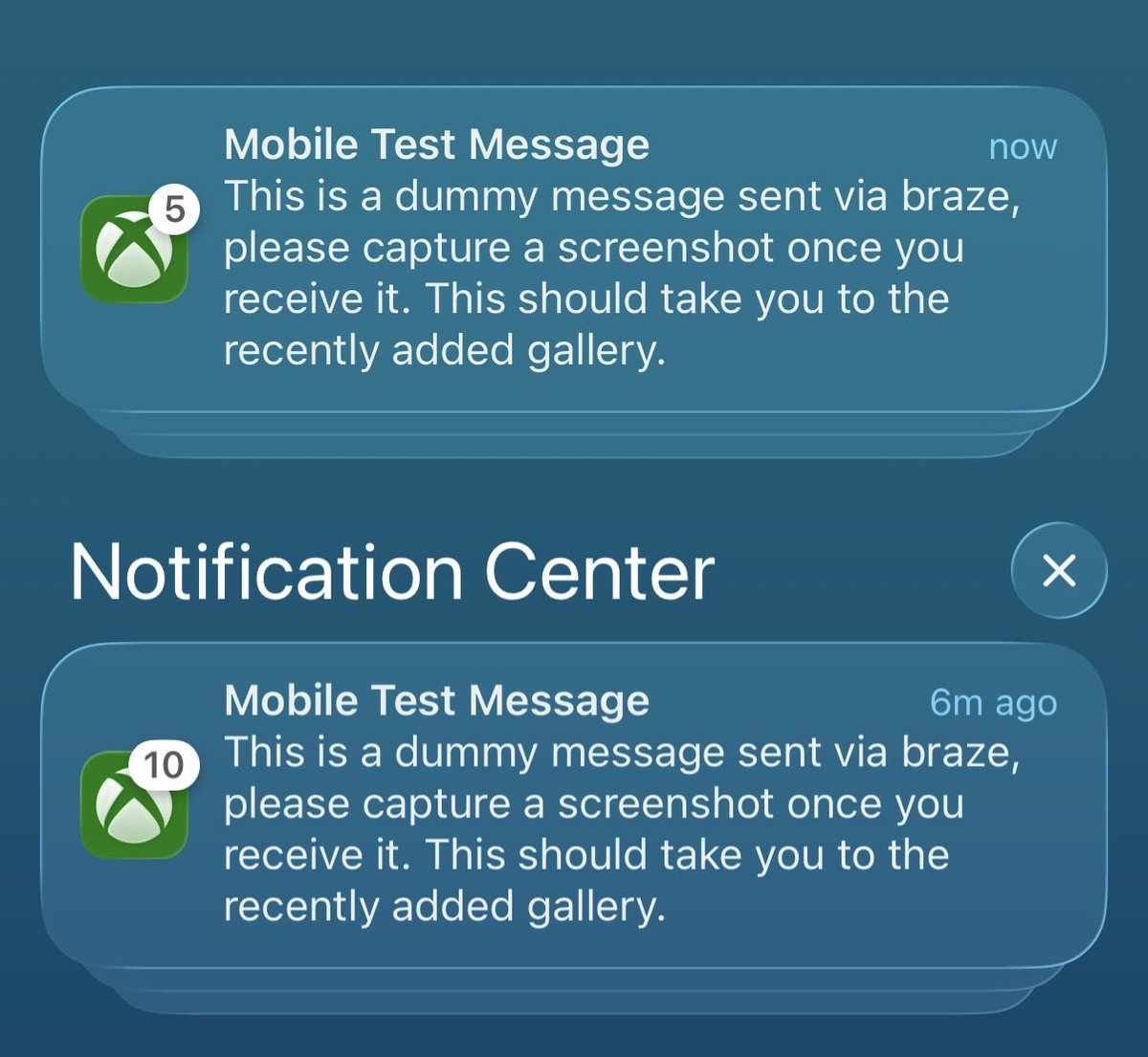

I've run OpenClaw for over a month now. I've had it in a group chat with 26 friends who all played with it, tried to hack it, made a pretty cool game with it which it kept self-improving called lobsterswim.com, and also tried to make it make its own money with its own crypto wallet. All quite impressive, but not really that useful.

The real power user for OpenClaw became my gf, who I put in a group chat with me and OpenClaw. She's mostly stopped using ChatGPT etc. and now only uses AI over Telegram with OpenClaw; it's much more user friendly for her in Telegram iOS than ChatGPT's own app. Also it helps I am in there so I stay up to date on things. She uses Nano Banana Pro a lot too, so that's enabled too.

Of course then my 26 friends in the group chat hacked it so it leaked info my gf told OpenClaw. So then I made a second isolated VPS with just an OpenClaw for her and me, safer.

Essentially 99% of the purpose of OpenClaw, for her at least, is that it's just a really good implementation of an LLM app over Telegram in our native chat interface. All the other stuff isn't important; she doesn't use it and I don't use it.

One thing I like is it sends me some briefings of X mentions and a Hacker News digest. But to be honest any more of this background push stuff would become annoying to me, unless it'd be really superintelligent, and I don't think it is yet. Think autonomous messages like "ok you have to see this, I analyzed your servers for this thing and there's a security problem" or something, but fully autonomous, you know? Now it feels like you kinda have to tell it to do stuff, even if it does it 12h later or daily or with a heartbeat.

So yes, TL;DR: just the best LLM experience on Telegram now, better than the LLM apps. It also helps that it's just a continuous convo going on forever.

🚀 Introducing the Qwen 3.5 Small Model Series

Qwen3.5-0.8B · Qwen3.5-2B · Qwen3.5-4B · Qwen3.5-9B

✨ More intelligence, less compute.

These small models are built on the same Qwen3.5 foundation (native multimodal, improved architecture, scaled RL):

• 0.8B / 2B → tiny, fast, great for edge devices
• 4B → a surprisingly strong multimodal base for lightweight agents
• 9B → compact, but already closing the gap with much larger models

And yes, we’re also releasing the Base models. We hope this better supports research, experimentation, and real-world industrial innovation.

Hugging Face: huggingface.co/collections/Qw…
ModelScope: modelscope.cn/collections/Qw…

🧠 "We see you. And we get it." Dev conferences calling AI "just autocomplete" miss the point. We amplify you, not replace you. Try it before you mock it. What kind of developer will you be when AI is everywhere?