VerbumEng

@VerbumEng

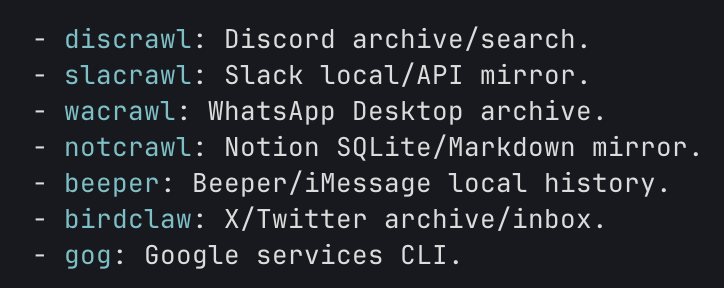

Building agent-native productivity tools. Local-first, markdown-native, BYOA. Newsletter: https://t.co/lf3LHv0boU

Talking to smarter folks than me, I'm convinced many of the AI folks in my timeline are full of shit. Nobody is "running 20 agents overnight" and building stuff for actual users. Maybe some are building internal tools or disposable software. Maybe. But building software people like using? That doesn't get hacked on day one or blow up after the 3rd user? Nope. I don't even understand what that's supposed to look like. Do you write a 57-page document that perfectly describes what you want to build, then summon 14 agents and have them run wild for 6 hours? And what comes out on the other end isn't a broken pile of shit? Nope. Not buying it.

PS: it may also be that I have an IQ of 82 and can't figure it out.

Wanted to provide more clarity about this. Yesterday, we had a regression in merge queue behavior where, in some cases, squash or rebase commits were generated from the wrong base state, making earlier changes appear reverted in branch history. 2,804 pull requests out of over 4M merged on April 23 (roughly 0.07%) were affected. We fixed the issue, we've contacted every impacted customer, and we're expanding our automated test coverage for merge queue operations. The team will be updating the status page with RCA details as well.

.@GitHub is screwing up so hard here. Terrible, terrible bug, and worse: they’ve provided Zipline zero support for identifying affected repos and PRs. We’re still cleaning up their mess.

DeepSeek V4 Pro, for how massive it is (1.6T parameters), is quite undertrained (32T tokens). Yes, undertrained. It has less intelligence density than V3.2, which is about 1/3 of its size.

Everything is markdown now and it will likely be an important part of the rest of human history. @gruber must think this is extremely funny.

Starting to notice that even with /grill-me, Opus 4.7 w/ Claude Code jumps straight to implementation 😡 Just WAIT until we're aligned, silly harness