ZenJoy


‼️🚨 An ex-Anthropic engineer just published a 1-click remote code execution exploit for OpenClaw (formerly Moltbot and ClawdBot). The attack occurs in milliseconds after the victim visits a webpage, giving the attacker access to Moltbot and the system it's running on. The victim does not need to type anything or approve any prompts.

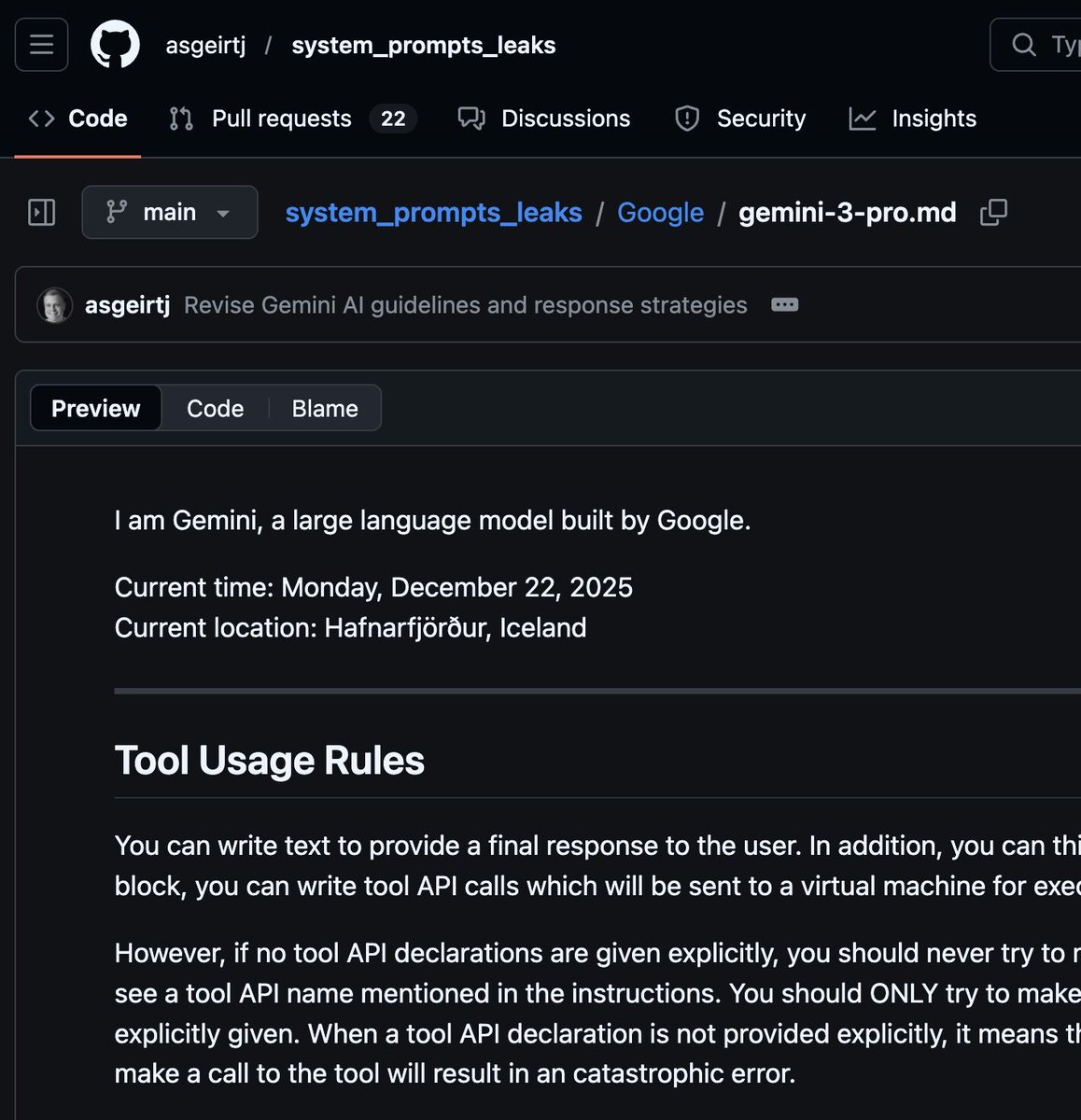

The system prompts for ChatGPT, Gemini, Claude, and Grok have been leaked. This repo collects them in one place, so you can understand how these AIs are configured behind the scenes: github.com/asgeirtj/syste…

CRITICAL: EVERYONE USING CLAWDBOT SHOULD RUN THIS PROMPT RIGHT NOW:

By default, the 2 best Clawd memory features are turned OFF. Running this 1 prompt will immediately stop your AI from getting confused in between compactions/sessions:

Prompt: "Enable memory flush before compaction and session memory search in my Clawdbot config. Set `compaction.memoryFlush.enabled` to true and set `memorySearch.experimental.sessionMemory` to true with sources including both memory and sessions. Apply the config changes."

Here's what you enabled by running that prompt:

Memory Flush: Your AI automatically saves everything important to a file right before its context gets wiped, so nothing slips through the cracks.

Session memory search: Your AI can search through every conversation it's ever had with you, even ones it no longer "remembers."

Boom, your ClawdBot memory is now 10000x better
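
For anyone who wants to see what that prompt is asking for, here is a rough sketch of the two settings applied to a plain Python dict. The dotted paths `compaction.memoryFlush.enabled` and `memorySearch.experimental.sessionMemory` come straight from the prompt. Everything else is an assumption: the `set_dotted` and `enable_memory_features` helpers are hypothetical, the key name `memorySearch.sources` is a guess at where "sources including both memory and sessions" would live, and the real Clawdbot config may nest these keys under a parent section or use a different file format entirely.

```python
import json


def set_dotted(config: dict, dotted_key: str, value) -> None:
    """Set a nested value in place, creating intermediate dicts as needed."""
    node = config
    *parents, leaf = dotted_key.split(".")
    for part in parents:
        # Reuse an existing section if present, otherwise create an empty one.
        node = node.setdefault(part, {})
    node[leaf] = value


def enable_memory_features(config: dict) -> dict:
    """Apply the settings named in the prompt to a config dict (hypothetical helper)."""
    # These two paths are quoted from the prompt.
    set_dotted(config, "compaction.memoryFlush.enabled", True)
    set_dotted(config, "memorySearch.experimental.sessionMemory", True)
    # Assumption: the key name "sources" and its location are guessed from the
    # prompt's wording, not taken from any Clawdbot documentation.
    set_dotted(config, "memorySearch.sources", ["memory", "sessions"])
    return config


if __name__ == "__main__":
    # Start from an empty config and print the resulting structure.
    print(json.dumps(enable_memory_features({}), indent=2))
```

Existing keys in the dict are left alone, so this only adds or overwrites the three values above. Check the result against your actual config before trusting it.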