Scott Condron

3K posts

@_ScottCondron

Helping build AI/ML dev tools at @weights_biases. I post about machine learning, data visualisation, and software tools.

Dublin, Ireland · Joined April 2018

2K Following · 5.7K Followers

Pinned Tweet

Here's an animation of a @PyTorch DataLoader.

It turns your dataset into an iterator over shuffled batches of tensors.

(This is my first animation using @manim_community, the community fork of @3blue1brown's manim)

Here's a little summary of the different parts for those curious:

1/5
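As a rough illustration of the pipeline the animation describes (shuffle the indices, slice them into batches, fetch each sample), here is a minimal pure-Python sketch. It is not PyTorch's implementation: `simple_dataloader` is a hypothetical name, and it yields plain lists where PyTorch's default collate function would stack the samples into tensors.

```python
import random
from typing import Iterator, List, Optional, Sequence, TypeVar

T = TypeVar("T")


def simple_dataloader(
    dataset: Sequence[T],
    batch_size: int,
    shuffle: bool = True,
    drop_last: bool = False,
    seed: Optional[int] = None,
) -> Iterator[List[T]]:
    """Yield lists of samples, mimicking a DataLoader's sample -> fetch -> batch steps."""
    indices = list(range(len(dataset)))
    if shuffle:
        # Sampler: a random permutation of indices (a new one per call unless seed is fixed).
        random.Random(seed).shuffle(indices)
    for start in range(0, len(indices), batch_size):
        batch_indices = indices[start:start + batch_size]
        if drop_last and len(batch_indices) < batch_size:
            break  # skip the final incomplete batch
        # Fetch each sample by index; PyTorch's default collate would stack these into tensors.
        yield [dataset[i] for i in batch_indices]
```

With PyTorch installed, the real equivalent is `torch.utils.data.DataLoader(dataset, batch_size=4, shuffle=True)`, which also reshuffles every epoch and can load batches in worker processes via `num_workers`.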

@drscotthawley Great! The team is eager for feedback if you have any.

@_ScottCondron OMG, I missed the announcement! Downloading this app immediately. Looking forward to not having to fiddle with the mobile browser.

Scott Condron reposted

What the wandb app sees when you’re looking at your loss curve at 3:37am

Weights & Biases @wandb

We heard you. The wandb mobile app is now LIVE on iOS 🚀 Monitor training runs from anywhere. Crash alerts the second something breaks. Live metrics on your phone. This has been the most requested feature in wandb history and it's finally here!

Scott Condron reposted

A nice pattern for autonomous research:

Start from a baseline model

LLMs generate ideas

Fan out coding agents in parallel

Evaluate each branch

Merge the best changes

Repeat

@wandb is already great for tracking all of this: artifacts, traces, diffs, evals, and lineage
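The loop above can be sketched in a few lines of Python. This is a toy under stated assumptions: a "model" is just a dict of hyperparameters, `propose` is a hypothetical stand-in for the LLM idea generator, threads stand in for parallel coding agents, and "merge" simply adopts the single best branch rather than combining changes from several. All names are illustrative; none of this is a wandb API.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]  # hypothetical: a "model" is just a dict of hyperparameters here


def research_loop(
    baseline: Config,
    propose_ideas: Callable[[Config], List[Config]],
    evaluate: Callable[[Config], float],
    rounds: int = 3,
    max_workers: int = 4,
) -> Tuple[Config, float]:
    """Baseline -> ideas -> parallel branches -> evaluate -> keep the best -> repeat."""
    best, best_score = dict(baseline), evaluate(baseline)
    for _ in range(rounds):
        # Generate ideas: each idea is a partial config overlaid on the current best.
        ideas = propose_ideas(best)
        if not ideas:
            break
        branches = [{**best, **idea} for idea in ideas]
        # Fan out and evaluate each branch in parallel (threads stand in for coding agents).
        with ThreadPoolExecutor(max_workers=max_workers) as pool:
            scores = list(pool.map(evaluate, branches))
        # Merge: adopt the top-scoring branch only if it beats the current best.
        top_score, top_branch = max(zip(scores, branches), key=lambda pair: pair[0])
        if top_score > best_score:
            best, best_score = top_branch, top_score
    return best, best_score


def propose(cfg: Config) -> List[Config]:
    # Hypothetical stand-in for an LLM: try halving and doubling the learning rate.
    return [{"lr": cfg["lr"] * 0.5}, {"lr": cfg["lr"] * 2.0}]


def evaluate(cfg: Config) -> float:
    # Hypothetical toy metric that peaks at lr = 0.01 (higher is better).
    return -abs(cfg["lr"] - 0.01)


best, score = research_loop({"lr": 0.08}, propose, evaluate, rounds=5)
```

Keeping the incumbent unless a branch strictly beats it means a round of bad ideas cannot regress the baseline; logging each branch's config, score, and parent is where an experiment tracker fits in.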

@minu_who I’d like to give both hanks a whirl. Would you mind sharing? :)

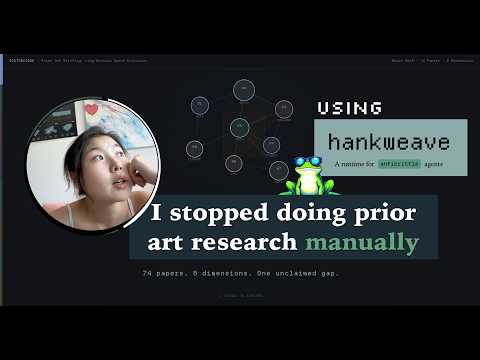

So I dumped the entire output folder from the Prior Arts hank into this one and asked it to make it pretty. I'll leave you to watch the video for the output:

youtube.com/watch?v=QNg-xr…

There was no human in the loop, except for the one who sealed a shard of their taste (or even trauma) into these hanks.

If you want to try either hank, lmk! Happy to :)

I need an IDE for RL research. Who’s making this?

I need a tool that can help me understand existing research, look at their datasets, understand their evaluation metrics, compare insights across papers, and also help with my own experiments.

I’m vibe coding UIs for each paper and environment, but I want an agent to have reusable primitives that compose well across modalities and task domains, do a preliminary lit review, show me just the parts that I care about without me having to find the repo, download the dataset and prompt

Are there tools people use, @willccbb?

@NicholasBardy There'll be more shared about this soon I believe

@_ScottCondron Is it public?

I had emailed Shawn a version of this that I've been doing myself for almost a year now. It works very well on top of the wandb API to compare runs, plus Puppeteer to screenshot charts.

Research repositories for auto-research agents are essentially MLOps + LLMOps observability:

- tracked experiment configs & metrics

What was tried, why, what worked, what didn’t

- versioned files

What files changed - e.g. training code for neural architecture search

- agent traces

What was the agent’s reasoning, which tool calls it made, and which models/prompts it used

Some open questions:

- how the research context is exposed to the agent, e.g. scratchpads of experiments or convenience CLIs to navigate through prior work

- the agent harness/runtime, e.g. where it runs, whether it’s sandboxed, whether it’s resumable, etc.

I think you want this all to be very flexible. The types of experiments it’s doing, how expensive the evaluation runs are, and how you want to navigate, debug, learn from, and reproduce its work will all vary a lot.
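One way to picture the three kinds of record listed above (experiment configs and metrics, versioned files, agent traces) is a single record per experiment. The sketch below assumes a hypothetical in-memory schema: `ExperimentRecord`, `AgentStep`, `hash_file` and `changed_files` are illustrative names, not a wandb API, and file versioning is reduced to content hashes with a `parent` id for lineage.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class AgentStep:
    """One step of an agent trace: its reasoning, the tool it called, and the model used."""
    reasoning: str
    tool_call: str
    model: str


@dataclass
class ExperimentRecord:
    config: Dict[str, Any]        # tracked experiment config
    metrics: Dict[str, float]     # tracked metrics (what worked, what didn't)
    rationale: str                # why this was tried
    files: Dict[str, str]         # versioned files: path -> content hash
    trace: List[AgentStep] = field(default_factory=list)
    parent: Optional[str] = None  # record_id this experiment branched from (lineage)

    @property
    def record_id(self) -> str:
        # Deterministic id derived from what defines the experiment.
        payload = json.dumps(
            {"config": self.config, "files": self.files, "parent": self.parent},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode()).hexdigest()[:12]


def hash_file(content: str) -> str:
    return hashlib.sha256(content.encode()).hexdigest()


def changed_files(old: ExperimentRecord, new: ExperimentRecord) -> List[str]:
    """Paths whose content hash differs between two records, e.g. edited training code."""
    paths = set(old.files) | set(new.files)
    return sorted(p for p in paths if old.files.get(p) != new.files.get(p))
```

Deriving the id from config, file hashes, and parent means two agents that reach the same code and config from the same starting point get the same id, which makes duplicate work easy to spot.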

@NicholasBardy Yes - and lots of teams are already using us for this

Scott Condron reposted

OpenClaw-RL Technical Report! Make your 🦞 @openclaw stronger just by using it. We propose a method that combines the advantages of GRPO and OPD, and report evaluation results. The repo already has 1.7k stars, so feel free to contribute! Come in and have fun~

@MengdiWang10 @LingYang_PU

Scott Condron reposted

The cofounder and CTO of Perplexity, @denisyarats, just said that internally at Perplexity they’re moving away from MCPs and instead using APIs and CLIs 👀
