Weights & Biases

8.5K posts

@wandb

The AI developer platform. 🛠️ Track and evaluate your LLM applications in real time with @weave_wb.

San Francisco · Joined May 2018

1.3K Following · 48K Followers

Weights & Biases retweeted

Production VLA pipelines scale three workloads across three hardware profiles, plus thousands of stochastic sim rollouts.

Join @anyscalecompute + @wandb May 12 to orchestrate it end-to-end with Ray.

Register: na2.hubs.ly/H05dzc10

Weights & Biases retweeted

Can confirm, @cursor_ai is the best harness we've tested on @WolfBenchAI so far!

@WolframRvnwlf tests Harness x Model, and Cursor (before the SDK) is the best one we've ever tested!

Dan ⚡️@d4m1n

lol Cursor is a better harness for both GPT 5.5 in Codex AND Opus 4.7 in Claude Code how is that possible?!

Easy to set up!

docs.wandb.ai/models/track/c…

W&B Inference provides frontier and open-weights models, observability included, on @SemiAnalysis_ platinum-grade infrastructure.

Day-0 launches, every time.

Try it below! wandb.ai/inference/core…

NEW: @IBM Granite 4.1 8B is live on W&B Inference!

$0.05 / $0.10 per 1M tokens. 131k context. Apache 2.0.

Build production agents with native tool calling, trace every call with @weave_wb, and run it all on @CoreWeave's platinum-grade AI cloud. 🧵

Weights & Biases retweeted

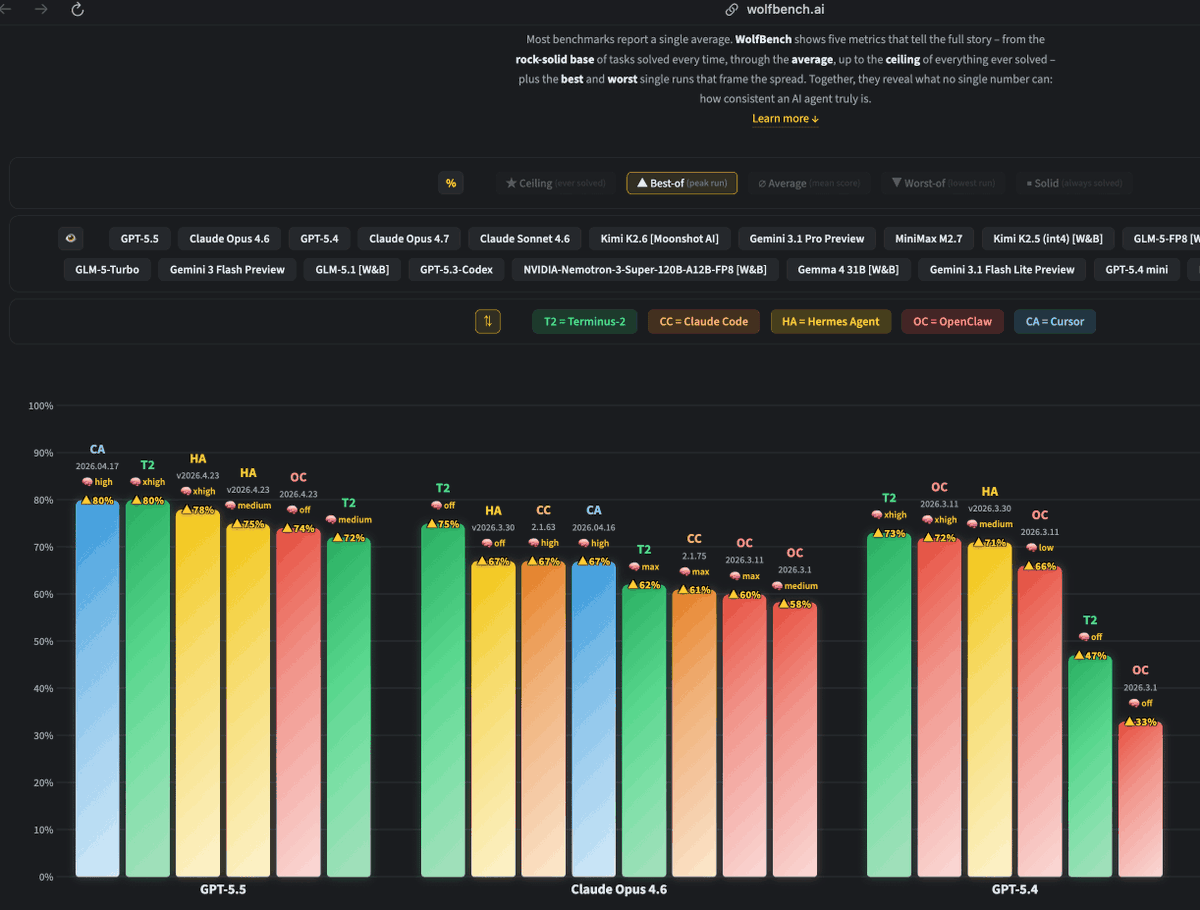

GPT-5.5 takes over WolfBench! It’s now the #1 model, ahead of Claude Opus 4.7 and 4.6, GPT-5.4, Sonnet 4.6, Kimi K2.6, Gemini 3.1 Pro, and more.

Notable findings after 30 runs (40h runtime, >1.7B tokens, ~$3K cost):

- @OpenAI's GPT-5.5 is the best model we ever tested.

- @cursor_ai's Agent CLI (CA) is the best agent we ever tested.

- @NousResearch's Hermes Agent (HA) outperformed OpenClaw (OC).

- With Hermes, going from medium to xhigh reasoning only improved consistency, not capability.

Note: This is WolfBench, where we look at more than just the average score, because one metric is not enough. The golden ∅ score is the actual 5-run average, which most other benchmarks report as their only score. ★ shows the ceiling (what percentage of the full benchmark this model+agent combination solved at least once across all runs). ■ shows the solid base (what percentage of the full benchmark it solved consistently in every run).
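
The three scores described in the note can be illustrated with a small sketch. This is not WolfBench's actual code: the function name `summarize_runs` and the run-by-task boolean layout are assumptions made only for illustration, and the sample data is invented.

```python
from typing import Sequence


def summarize_runs(results: Sequence[Sequence[bool]]) -> dict[str, float]:
    """Compute average, ceiling, and base from per-run task outcomes.

    results[r][t] is True if run r solved task t (hypothetical layout).
    All scores are returned as fractions of the full benchmark.
    """
    n_runs = len(results)
    n_tasks = len(results[0])

    # Average (the "golden" score): mean solve rate over all runs.
    average = sum(sum(run) for run in results) / (n_runs * n_tasks)

    # Ceiling: share of tasks solved at least once across all runs.
    ceiling = sum(any(run[t] for run in results) for t in range(n_tasks)) / n_tasks

    # Solid base: share of tasks solved in every single run.
    base = sum(all(run[t] for run in results) for t in range(n_tasks)) / n_tasks

    return {"average": average, "ceiling": ceiling, "base": base}


# Invented example: 5 runs over a 4-task benchmark.
example = [
    [True, True, False, False],
    [True, False, False, False],
    [True, True, False, False],
    [True, False, True, False],
    [True, True, False, False],
]
scores = summarize_runs(example)
```

In this invented example the base can never exceed the average, and the average can never exceed the ceiling, which is why reporting all three gives a fuller picture than the average alone.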

Weights & Biases retweeted

was a blast for our team at @CopilotKit to partner on the coolest happy hour at #GoogleCloudNext with @Redisinc & @wandb

fun first week of work🏎️🏁

Uli 🪁@ulidabess

Wrap on Google Cloud Next '26. It was so great to spend time with @idosal1, @liadyosef, and @zeroasterisk and have the best conversations on Generative UI and the headless Web. Also, big shoutout to @sofiiiiiasz, who led our conference presence in her first week at CopilotKit.

Can you drive in the dark Arctic or amidst a Tokyo typhoon?

This AI car can. @wayve_ai drove through both, plus 504 more cities, with almost no additional training data for over half of them.

@alexgkendall calls it proof that “generalization at scale” is possible for AI cars.

Aaron Katz (@ceo_clickhouse) built a $15B company around one philosophy:

Nobody likes being sold to.

So he served Anthropic, OpenAI, LangChain and Vercel without a sales team. Just a shared Slack channel with their top engineers and a commitment to get you into production before competitors had even scoped the project.

Four years in, 3000 customers. The numbers speak for themselves.
