Some bedtime storytime fun with the kids and #dalle. A portrait of Totoro, painted by Pablo Picasso:

Datamase

The Los Angeles Unified school board has voted to require screen time limits and encourage the use of pen and paper for assignments, becoming the first major school district to do so. @SamChampion reports.

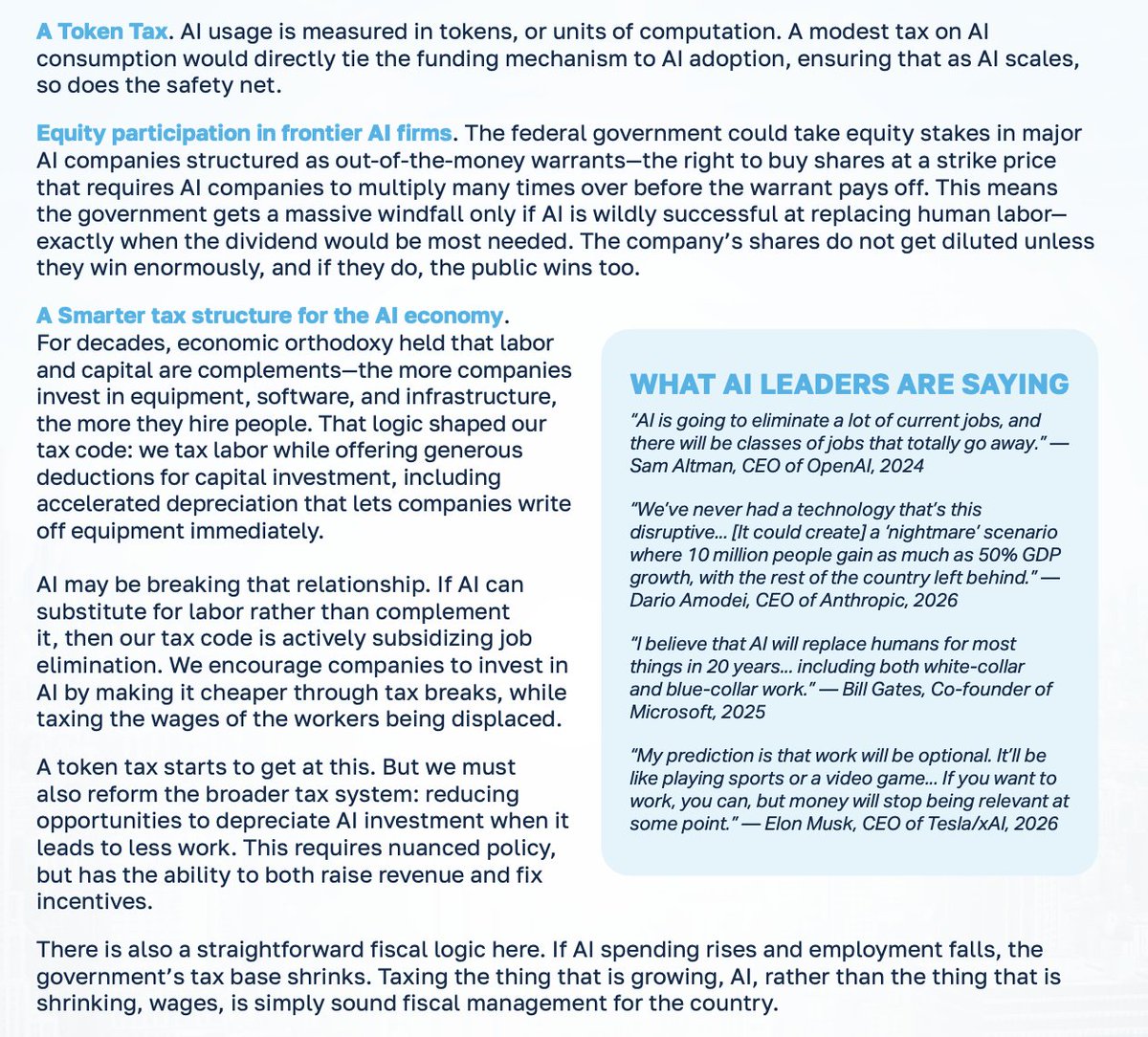

Today, I’m proud to announce the AI Dividend, my plan to prepare for the AI economy with direct payments to Americans funded by tax reform that simultaneously incentivizes hiring humans instead of AI. Read the full plan here: alexbores.nyc/ai-dividend

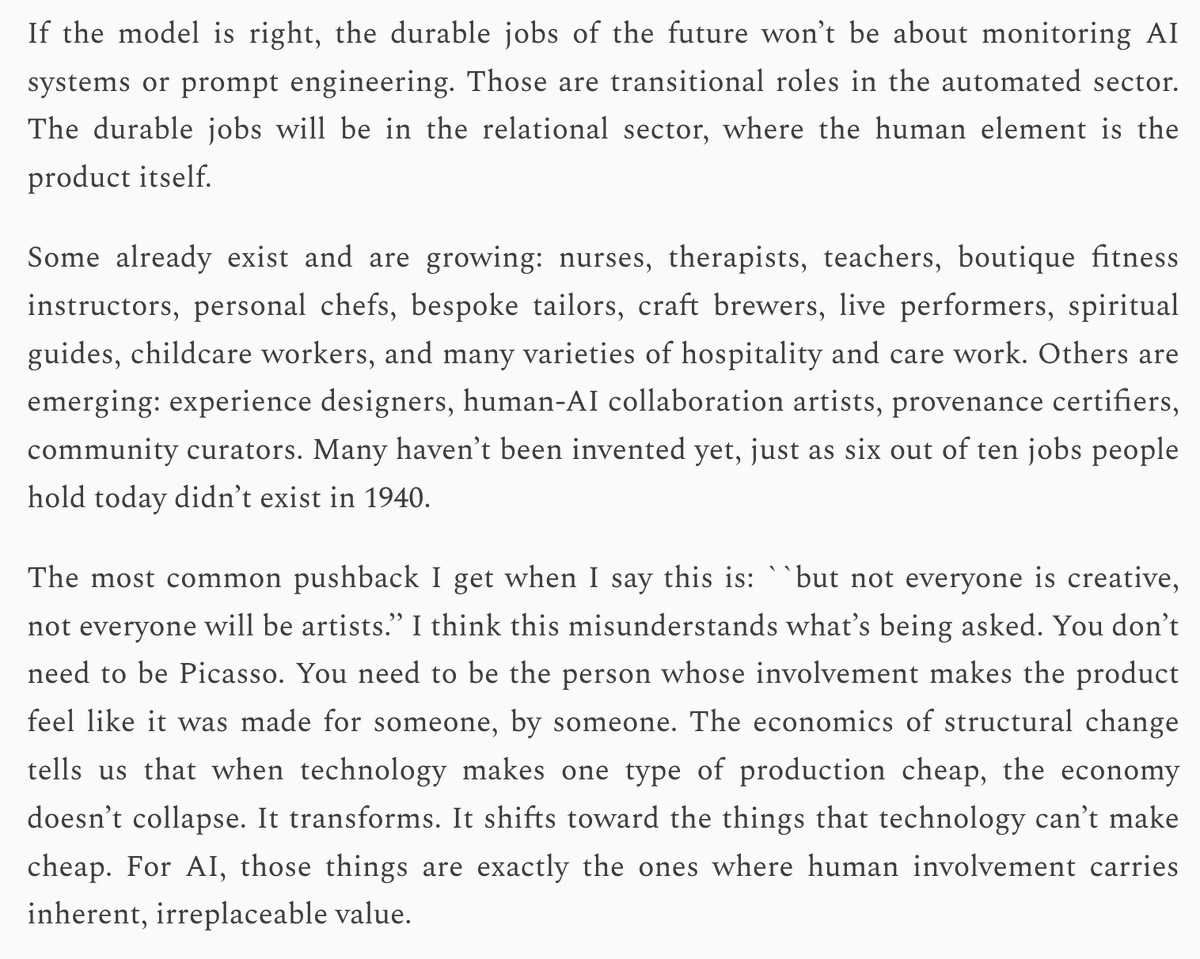

Dario is wrong. He knows absolutely nothing about the effects of technological revolutions on the labor market. Don't listen to him, Sam, Yoshua, Geoff, or me on this topic. Listen to economists who have spent their career studying this, like @Ph_Aghion, @erikbryn, @DAcemogluMIT, @amcafee, @davidautor