Diego Dotta

617 posts

@diegodottac

Sprinting, stumbling, and learning as I go. Building horses, not unicorns. (っ-,-)つ𐂃

San Francisco, CA, joined January 2010

1K following, 330 followers

@oboelabs I always dreamed of playing the oboe! Did you guys have access to my dreams? Or maybe a parallel universe? Not this reality, though. :( oboe.fyi/courses/diego-…

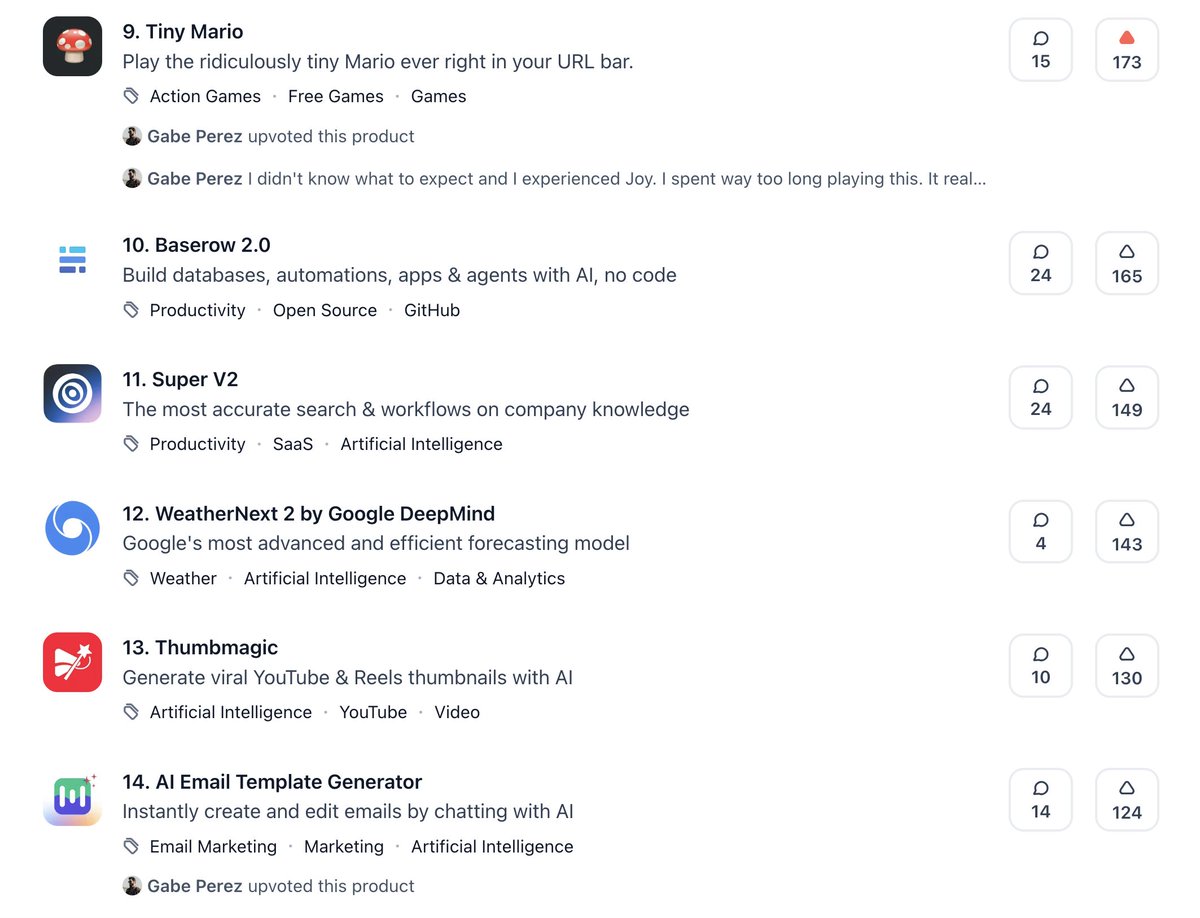

I don't want to be a tiny d*ck, but Tiny Mario is more popular than @Google's new launches on @ProductHunt. #TinyProud #TinyBragging

Little weekend game inspired by @epidemian's URL Snake. diego.horse/jump

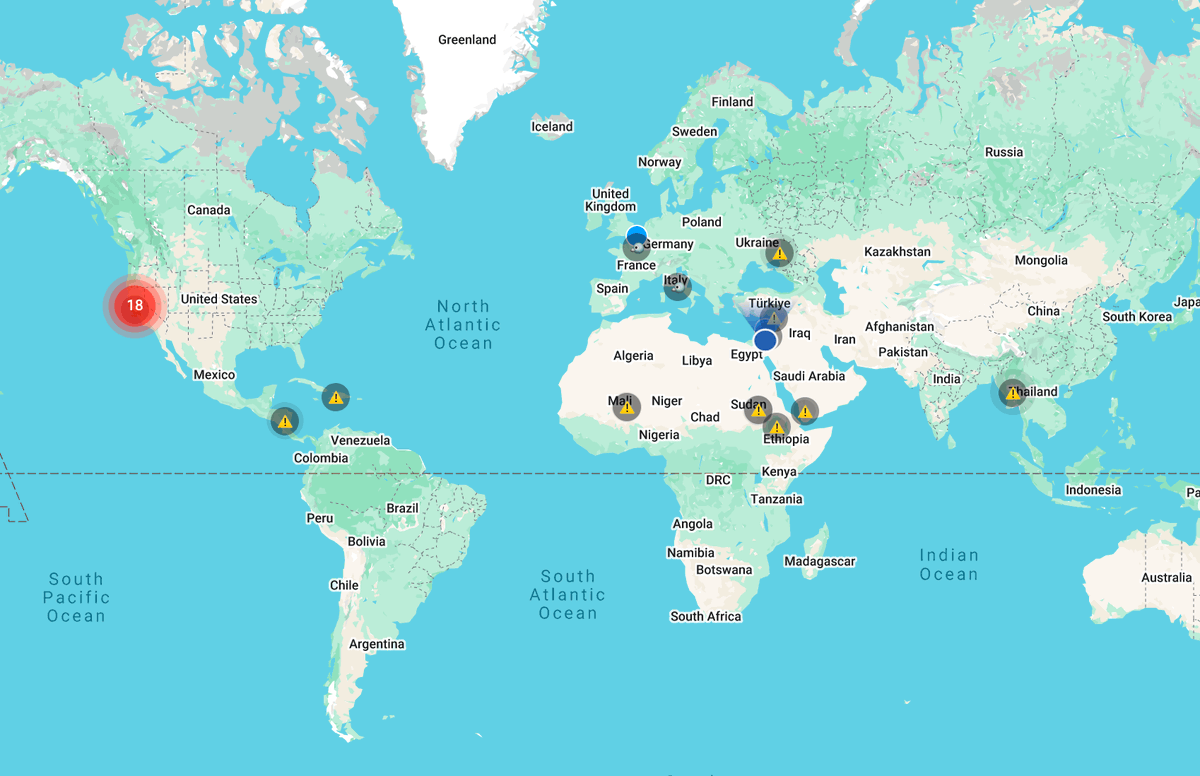

AirFiesta.FM cancelled in many places around the world. Inspired by @CFR_org Global Conflict Tracker.

I made some splats of weird museum spaces (Piranesi-esque) with @theworldlabs and recorded some explorations - 1) a fly thru the space stacked end to end:

Still having a blast with airfiesta.fm, grabbed the flock algo from @jtrdev and tweaked it to make custom birds using simple shapes. Added Parrots from Telegraph Hill in SF and Pigeons from Paris.

This inspired me to make a whole game based on flocks, they’re super cool.

Having fun working on a multiplayer game + hot air balloon simulation + scavenger hunt. Thanks @shotamatsuda, @garrettkjohnson, @_keiya01 and @jeantimex for making this possible. Give it a try on airfiesta.fm

Can I live without @uptimerobot? No!!

I wish there was a feature to redirect to another domain when an incident occurs.

You asked, I made it. TV mode with auto camera moves and a cleaner interface for people who are using Airtales as a screensaver. airtales.fm/tv-mode Playing now @rotherhamradio

@jestonlu @youdistro Cool product, Jeston! By the way, your website footer is duplicated. Best regards.

From one convo with AI,

To infinite pieces of content.

This is @youdistro.

LIVE NOW on Product Hunt: 👇

LOL, this Tea Time Alarm thing cracked me up! teatime.london #TeaTimeAlarm #TeaTime #BritainsGotTalent

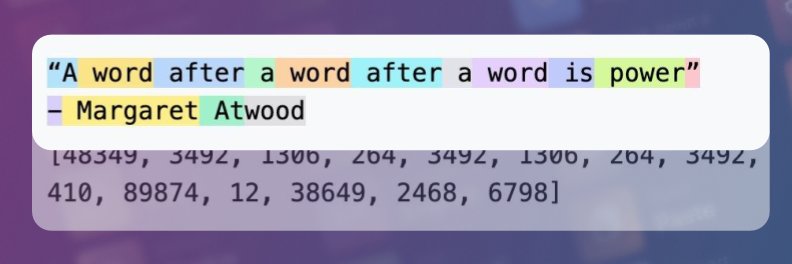

@slatestarcodex @DaveShapi Tested the latest mini OpenAI models for fast, token-efficient reasoning in a more efficient language, inspired by Neuralese. diego.horse/neuralese-the-…

Thanks for engaging. Some responses (mine only, not anyone else on AI 2027 team):

>>> "1. They provide no evidence that they guessed right in the past".

In the "Who Are We?" box after the second paragraph, where it says "Daniel Kokotajlo is a former OpenAI researcher whose previous AI predictions have held up well", the words "previous AI predictions" are a link to the predictions involved, and "held up well" is a link to a third-party evaluation of them.

>>> "2. No empirical data, theory, evidence, or scientific consensus".

There's a tab marked "Research" in the upper right. It has 193 pages of data/theory/evidence that we use to back our scenario.

>>> "3. They pull back at the end saying "We're not saying we're dead no matter what, only that we might be, and we want serious debate" okay sure."

Our team members' predictions for the chance of AI killing humans range from 20% to 70%. We hope that we made this wide range and uncertainty clear in the document, including by providing different endings based on these different possibilities.

>>> "4. The primary mechanism they propose is something that a lot of us have already discussed (myself included, which I dubbed TRC or Terminal Race Condition). Which, BTW, I first published a video about on June 13, 2023 - almost a full 2 years ago. So this is nothing new for us AI folks, but I'm sure they didn't cite me."

Bostrom discusses this in his 2014 book (see for example box 13 on page 303), but doesn't claim to have originated it. This idea is too old and basic to need citation.

>>> "5. They make up plausible sounding, but totally fictional concepts like "neuralese recurrence and memory""

Neuralese and recurrence are both existing concepts in machine learning with a previous literature (see eg nlp.cs.berkeley.edu/pubs/Andreas-D… and en.wikipedia.org/wiki/Recurrent…). The combination of them that we discuss is unusual, but researchers at Meta published about a preliminary version in 2024, see arxiv.org/pdf/2412.06769. We have an expandable box "Neuralese recurrence and memory" which explains further, lists existing work, and tries to justify our assumptions. Nobody has successfully implemented the exact architecture we talk about yet, but we're mentioning it as one of the technological advances that might happen by 2027 - by necessity, these future advances will be things that haven't already been implemented.

>>> 6. "In all of their thought experiments, they never even acknowledge diminishing returns or negative feedback loops."

In the takeoff supplement, CTRL+F for "Over time, they will run into diminishing returns, and we aim to take this into account in our forecasts."

>>> 7. "They do acknowledge that some oversight might be attempted, but hand-wave it away as inevitably doomed."

In one of our two endings, oversight saves the world. If you haven't already, click the green "Slowdown" button at the bottom to read this one.

>>> 8. "Interestingly, I actually think they are too conservative on their 'superhuman coders'. They say that's coming in 2027. I say it's coming later this year."

I agree this is interesting. Please read our Full Definition of the "superhuman coder" phase at ai-2027.com/research/takeo… . If you still think it's coming this year, you might want to take us up on our offer to bet people who disagree with us about specific milestones, see docs.google.com/document/d/18_… .

>>> "9. Overall, this paper reads like 'We've tried nothing and we're all out of ideas.'"

We think we have lots of ideas, including some of the ones that we portray as saving the world in the second ending. We'll probably publish something with specific ideas for making things go better later this year.

I finally got around to reviewing this paper and it's as bad as I thought it would be.

1. Zero data or evidence. Just "we guessed right in the past, so trust me bro" even though they provide no evidence that they guessed right in the past. So, that's their grounding.

2. They used their imagination to repeatedly ask "what happens next" based on.... well their imagination. No empirical data, theory, evidence, or scientific consensus. (Note: this is by a group of people who have already convinced themselves that they alone possess the prognostic capability to know exactly how as-yet uninvented technology will play out.)

3. They pull back at the end saying "We're not saying we're dead no matter what, only that we might be, and we want serious debate" okay sure.

4. The primary mechanism they propose is something that a lot of us have already discussed (myself included, which I dubbed TRC or Terminal Race Condition). Which, BTW, I first published a video about on June 13, 2023 - almost a full 2 years ago. So this is nothing new for us AI folks, but I'm sure they didn't cite me.

5. They make up plausible-sounding, but totally fictional concepts like "neuralese recurrence and memory" (this is dangerous handwaving meant to confuse the uninitiated - this is complete snake oil)

6. In all of their thought experiments, they never even acknowledge diminishing returns or negative feedback loops. They instead just assume infinite acceleration with no bottlenecks, market corrections or other pushbacks. For instance, they fail to contemplate that corporate adoption is critical for the investment required for infinite acceleration. They also fail to contemplate that military adoption (and acquisition processes) also has tight quality controls. They just totally ignore these kinds of constraints.

7. They do acknowledge that some oversight might be attempted, but hand-wave it away as inevitably doomed. This sort of "nod and shrug" is the most attention they pay to anything that would totally shoot a hole in their "theory" (I use the word loosely, this paper amounts to a thought experiment that I'd have posted on YouTube, and is not as well thought through). The only constraint they explicitly acknowledge is computing constraints.

8. Interestingly, I actually think they are too conservative on their "superhuman coders". They say that's coming in 2027. I say it's coming later this year.

Ultimately, this paper is the same tripe that Doomers have been pushing for a while, and I myself was guilty until I took the white pill.

Overall, this paper reads like "We've tried nothing and we're all out of ideas." It also makes the baseline assumption that "fast AI is dangerous AI" and completely ignores the null hypothesis: that superintelligent AI isn't actually a problem. They are operating entirely from the assumption, without basis, that "AI will inevitably become superintelligent, and that's bad."

Link to my Terminal Race Condition video below (because receipts).

Guys, we've been over this before. It's time to move the argument forward.

Daniel Kokotajlo @DKokotajlo

"How, exactly, could AI take over by 2027?" Introducing AI 2027: a deeply-researched scenario forecast I wrote alongside @slatestarcodex, @eli_lifland, and @thlarsen

Just found out about Neuralese, the most spoken language you’ll never speak. Guess I’ll keep mumbling in human while the AIs have their own private chat. 🤖📷 #Neuralese #LostInTranslation diego.horse/neuralese-the-…

Smart Keys for Mac brings one-tap translation, proofreading and code fixes right into your desktop workflow

{ author: @diegodottac } #DEVCommunity

dev.to/diegodotta/the…
