We just released Claude Code channels, which let you control your Claude Code session through select MCPs, starting with Telegram and Discord. Use this to message Claude Code directly from your phone.

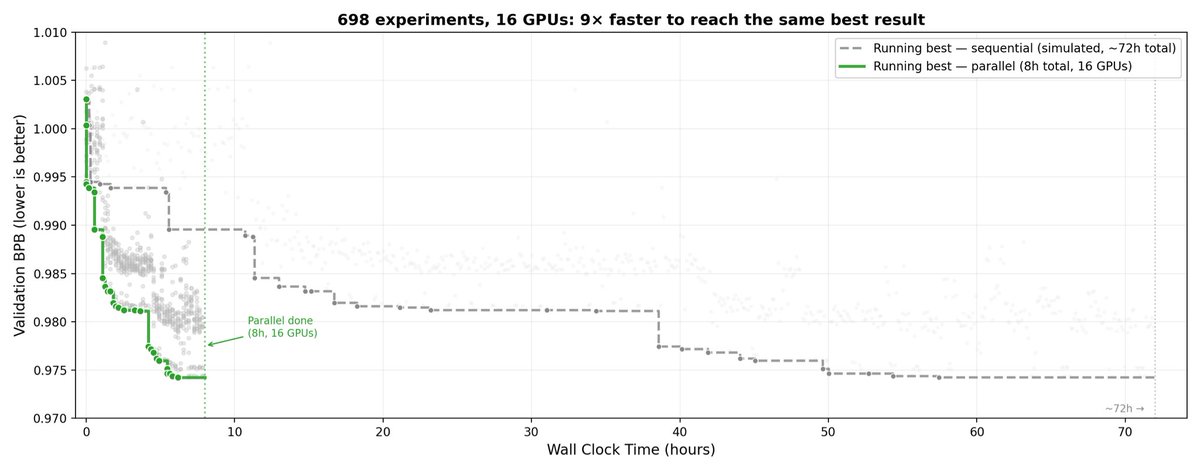

Karpathy's Autoresearch is bottlenecked by a single GPU. We removed the bottleneck. We gave the agent access to our K8s cluster with H100s and H200s and let it provision its own GPUs. Over 8 hours:
• ~910 experiments instead of ~96 sequentially
• Discovered that scaling model width mattered more than all hparam tuning
• Taught itself to exploit heterogeneous hardware: use H200s for validation, screen ideas on H100s
Full setup and results: blog.skypilot.co/scaling-autore… @karpathy
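For scale, the two experiment counts in the post above can be turned into rough throughput numbers. This is only a back-of-the-envelope sketch: the inputs (8 hours, ~96 sequential, ~910 on the cluster) come from the post, while the variable names and the assumption that sequential experiments run strictly one at a time are mine. It says nothing about how many GPUs were actually in use.

```python
# Approximate figures quoted in the post; everything below is derived arithmetic.
HOURS = 8
SEQUENTIAL_EXPERIMENTS = 96   # "~96 sequentially" (single GPU)
PARALLEL_EXPERIMENTS = 910    # "~910 experiments" (cluster)


def throughput(experiments: int, hours: float) -> float:
    """Experiments completed per hour."""
    return experiments / hours


seq_rate = throughput(SEQUENTIAL_EXPERIMENTS, HOURS)
par_rate = throughput(PARALLEL_EXPERIMENTS, HOURS)

# Ratio of experiment counts over the same wall-clock window.
speedup = PARALLEL_EXPERIMENTS / SEQUENTIAL_EXPERIMENTS

# Assumption: sequential runs execute back to back with no idle time.
minutes_per_experiment = 60 / seq_rate

print(f"sequential: {seq_rate:.1f} experiments/hour")
print(f"cluster:    {par_rate:.2f} experiments/hour")
print(f"speedup:    {speedup:.1f}x")
print(f"implied sequential cost: {minutes_per_experiment:.0f} min/experiment")
```

Under those assumptions the cluster run completes roughly nine to ten times as many experiments in the same window, and each sequential experiment works out to about five minutes.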

It's crazy how AI is really good at the stuff I don't know anything about and total dog shit at the stuff I do.

🚨 Shocking: Frontier LLMs score 85-95% on standard coding benchmarks. We gave them equivalent problems in languages they couldn't have memorized. They collapsed to 0-11%. Presenting EsoLang-Bench. Accepted to the Logical Reasoning and ICBINB workshops at ICLR 2026 🧵

Introducing Dasher Tasks. Dashers can now get paid to do general tasks. We think this will be huge for building the frontier of physical intelligence. Look forward to seeing where this goes!

"Massive investment in AI contributed basically zero to US economic growth last year," per Goldman Sachs

Introducing Lovable for more general tasks. Lovable has always been for building apps. Today it also becomes your data scientist, your business analyst, your deck builder, and your marketing assistant. This is a big step toward what Lovable is becoming: a general-purpose co-founder that can do anything. See examples below.