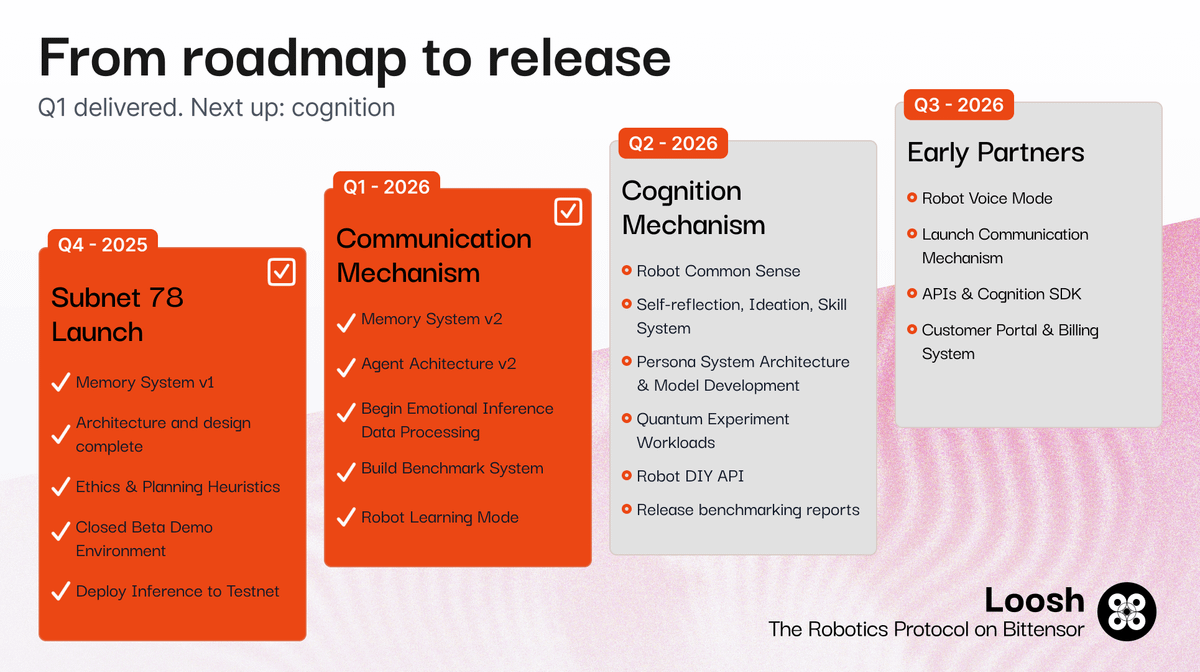

Subnet 78 is entering its next phase: Evolution.

It’s been just over two months since we launched on Bittensor, and we’ve learned a lot. This initial phase taught us the constraints of the agent framework: its weaknesses, its bottlenecks, and where it breaks. It also showed us where to go next.

We’re leveling up, starting today.

What’s changing:

- Focus on EEG and emotional inference research
- Design a Subnet 9-style pretraining competition
- Introduce multi-round training cycles, with emissions allocated based on validated marginal improvement against a benchmark

In parallel:

- We’re exploring the use of zk proofs for inference validation:
  - Validators confirm that inference ran on the specified model
  - This moves us toward a compute-based reward system, rather than challenge-response alone
- If this proves viable, both tracks will run in parallel.
- If not, we will prioritize pretraining.

Incentives update:

- A 90% burn of miner emissions is introduced
- Challenges continue, at reduced frequency

This adjusts rewards to reflect the current quality of outputs while protecting the subnet’s long-term health.

Why this matters:

We are shifting from early-stage experimentation to measurable, compounding model improvement. This creates:

- Stronger incentives for meaningful progress
- Clearer signal in miner performance
- A more stable path toward production systems

Timeline:

- 30 days → inference track (research → rollout)
- 49 days → pretraining track (research → deployment prep)
- 60 days → full subnet upgrade (with testing)

This is a big shift, and a necessary one. It brings us closer to building the cognition layer for real-world robotics.

That’s the challenge. That’s the direction.

Subnet 78