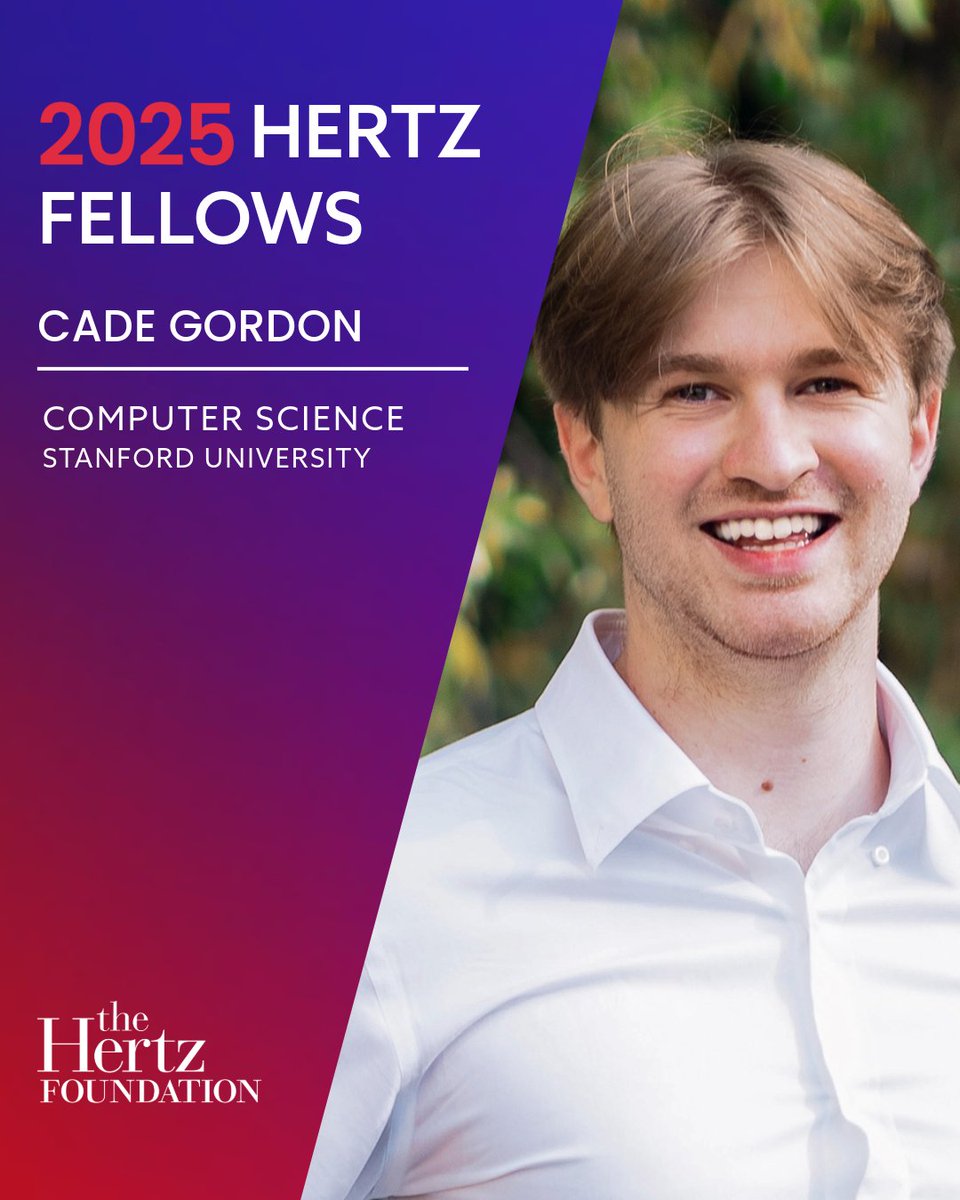

Cade Gordon

@CadeGordonML

Helping models grow wise @Anthropic | Hertz Fellow | Prev: LAION-5B & OpenCLIP @UCBerkeley

A statement on the comments from Secretary of War Pete Hegseth. anthropic.com/news/statement…

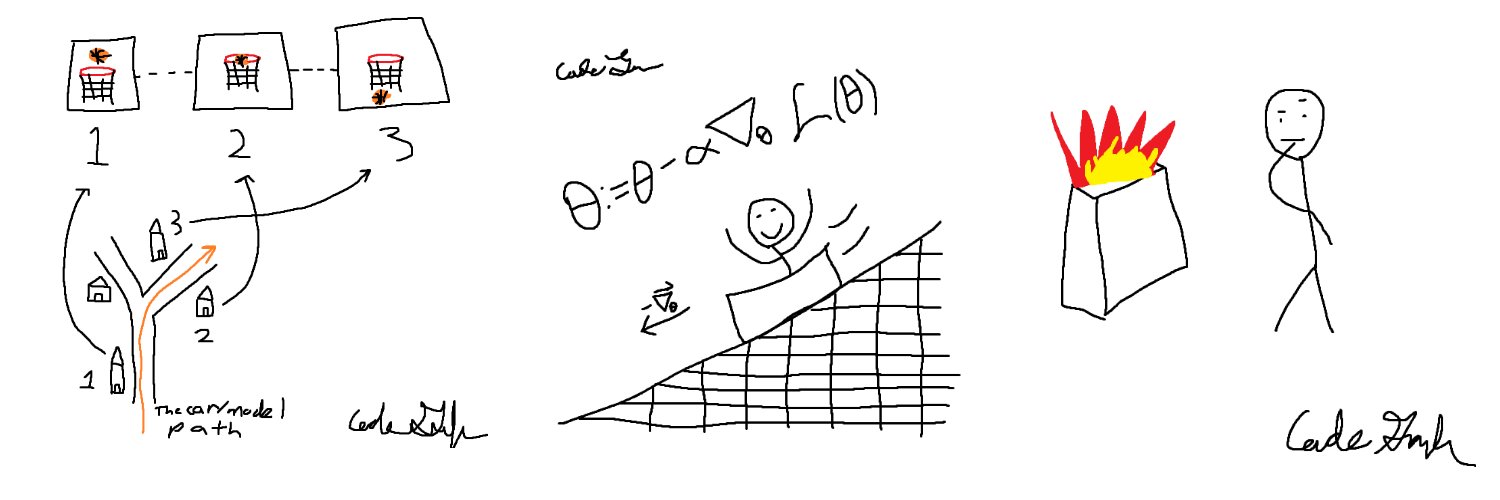

Beyond additivity: zero-shot methods cannot predict impact of epistasis on protein properties and function

1. The study reveals a critical blind spot in modern protein AI: while the 95 state-of-the-art zero-shot models tested predict the effects of single mutations well, they systematically fail when mutations interact epistatically, that is, when the combined effect of mutations deviates from simple additivity.

2. Using 53 MAVE datasets from ProteinGym, the researchers identified epistatic genotypes by comparing observed effects against expected additive effects while accounting for experimental error. For GFP fluorescence and protein thermostability, epistasis is widespread and biologically genuine, not a measurement artifact.

3. The performance gap is stark. Top models such as ESCOTT, PoET, and MSA-Transformer achieve Spearman correlations above 0.6 across all genotypes but collapse to near-zero or negative correlations on epistatic genotypes. Simple linear regression baselines often match or exceed complex deep learning models on epistatic combinations.

4. This exposes a fundamental limitation: protein language models learn evolutionary plausibility from natural sequences, but natural selection only explores functional sequence space. Epistatic combinations, which often traverse fitness valleys, lie outside this training distribution, leaving models blind to higher-order mutational interactions.

5. The work highlights that clever feature engineering (evolutionary conservation, structural information) outperforms architectural complexity for epistasis prediction. Yet even structure-aware models such as ProSST and ESM-IF1, though top performers on stability, show no consistent advantage across datasets.

6. The implications for protein design and directed evolution are substantial. Current zero-shot methods cannot reliably navigate rugged fitness landscapes or predict functional variants along evolutionary paths that require epistatic mutations. The field urgently needs models trained on multi-mutational data and architectures that explicitly model non-linear interactions.

💻 Code: github.com/kalininalab/ep…

📜 Paper: biorxiv.org/content/10.648…

#ProteinEngineering #Epistasis #MachineLearning #ProteinGym #VariantEffectPrediction #ComputationalBiology #Bioinformatics #ProteinEvolution #AIforScience #StructuralBiology

Initial impressions (and pelicans) of Claude Opus 4.5, Anthropic's new "best model in the world for coding" released this morning. simonwillison.net/2025/Nov/24/cl…