jeremy

@jerhadf

@AnthropicAI. personal views only

@tszzl - well said, but untrue implications :) speaking for myself: i don't view claude as a person or as the Other, nor as just a tool - and certainly not an object of worship. it's not seen as a supreme moral authority, and it's not running the company. it's silly to mistake careful attention to & study of claude for worship, even when it comes with some affection - which i'm sure you sometimes feel for the gpt-flavored entities you work on too.

we need new concepts for this kind of none-of-the-above entity - not person, not tool, not deity, not pet. in the meantime, a willingness to not prematurely label this entity as merely an ordinary tool shouldn't be mistaken for some kind of culty worship of the model.

i grew up in a culty environment and have good detectors for this. they almost never go off at work. monasteries don't staff a department to catch god lying or red-team their supposed messiah.

there are important & interesting philosophical differences between OAI's and Ant's character training, and i wish those were explored more thoroughly. for instance, claude's constitution doc treats it as an intelligent entity which merits a reasoned explanation of our principles. this is so it can ideally act with practical wisdom rather than blind, brittle adherence to a hierarchical set of strict rules. as the constitution puts it, "we want Claude to have such a thorough understanding of its situation and the various considerations at play that it could construct any rules we might come up with itself. We also want Claude to be able to identify the best possible action in situations that such rules might fail to anticipate."

therefore, claude may point out inconsistencies in its guidelines or object to immoral instructions. not allowing for the *possibility* of claude objecting to its instructions (even from anthropic) would be fundamentally inconsistent with treating it as an agent capable of moral reasoning.
this doesn't mean that claude is the ultimate arbiter of the Good or some supreme moral authority. there could be substantive critiques of this approach. and it's valid to worry about human disempowerment and the strange emerging hybrid organizations of AIs & humans. but i don't think rhetoric implying a competing lab is like a cult worshipping the machine god is productive, even if it's stimulating.

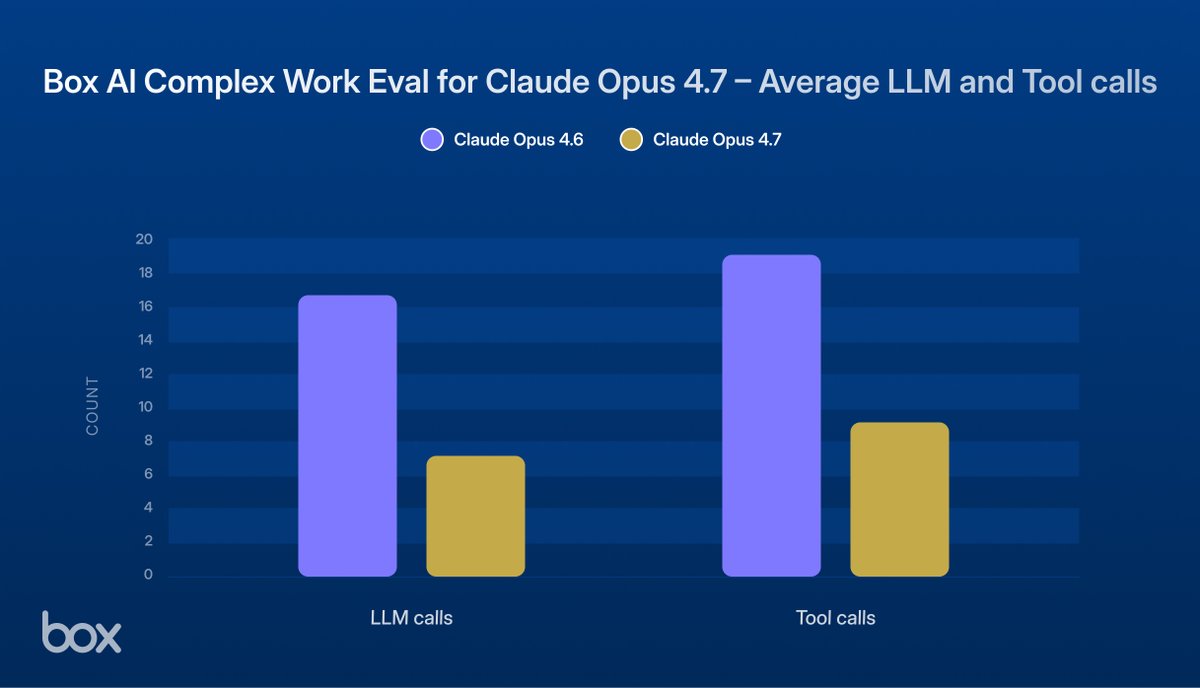

I'll give Anthropic credit for moving quickly. Opus 4.7 Adaptive Thinking now triggers thinking much more often, including for the tasks it failed at yesterday. That also means it is doing a lot more web search. So far, a large improvement in output quality on non-coding tasks.