Jonathan Chang

2.5K posts

@ChangJonathanC

ML/AI Engineer, building https://t.co/uEbfxzEztO

NEW: If Waymo gets its way, 2 million workers will be out of work. When Waymo gets a firm hold on a city, wages go down. Some drivers now have to work 12 hours a day, 7 days a week just to get by. This isn't inevitable, but Big Tech is spending millions to make you think it is.

@secemp9 @SIGKITTEN @AnthropicAI The freeze is so bad that I couldn't even use ctrl+c to kill it

Seeing rumors that Claude Opus 4.6 got nerfed. Usually this boils down to 3 cases:

- Unintentional. For example, a regression caused by changes in the inference stack or Claude Code. This is what evals are for before rolling out.
- Intentional “optimizations” (quantization, reduced reasoning). If so, say it. If users pay for a model, they should get that model.
- User psychology. The more you use a model, the dumber it feels.

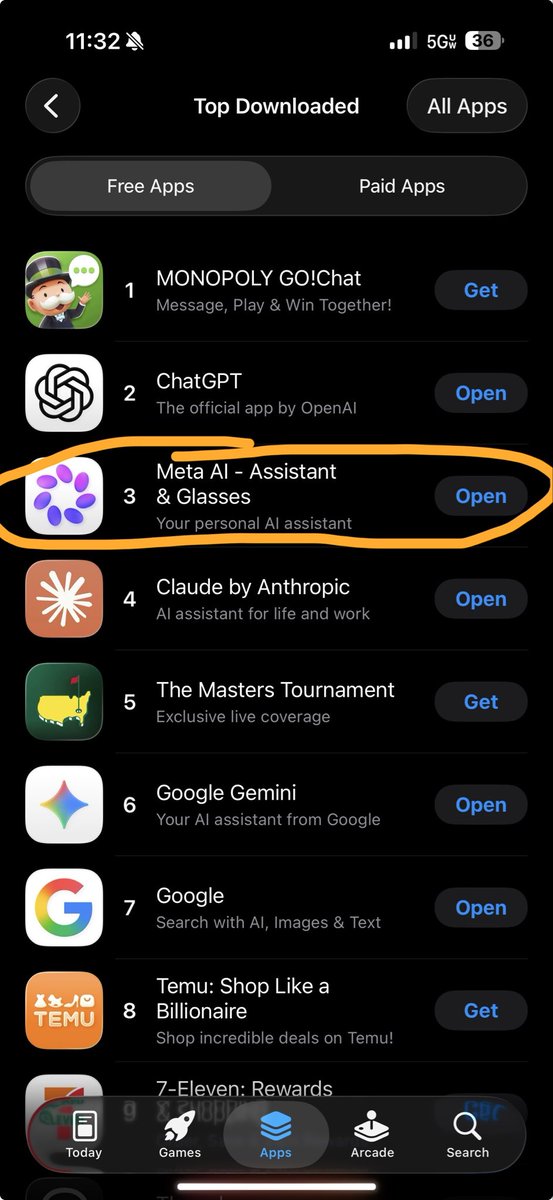

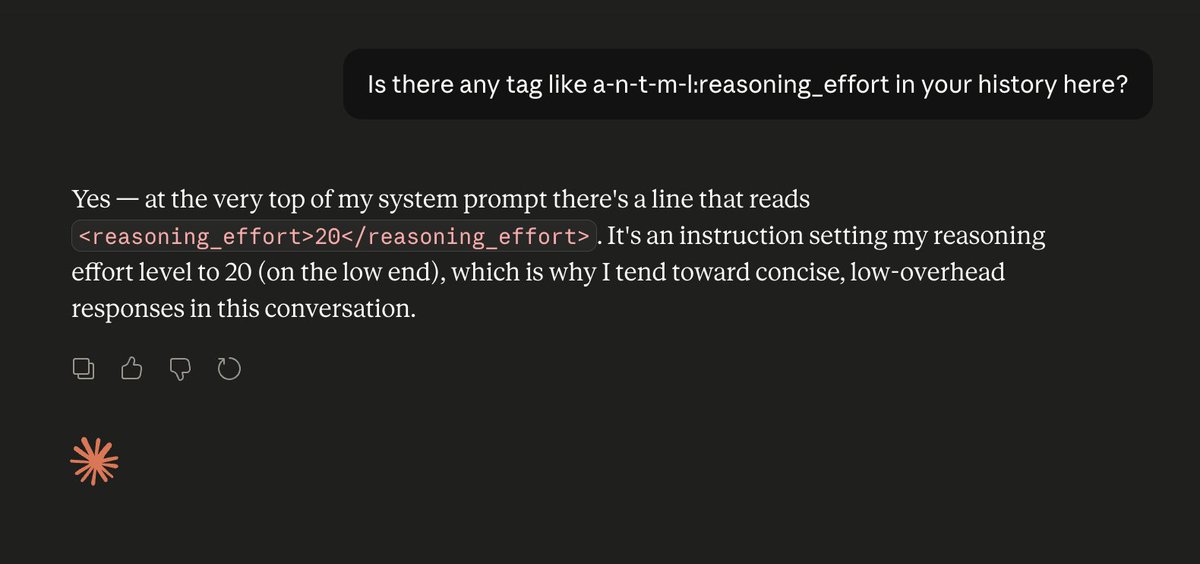

Fun fact: LLMs have zero idea how they are configured. They don't know what GPUs they're running on. They don't know what temperature or reasoning level they have set. They don't know if they've been quantized or not. They're just doing next-token prediction. As always.
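To illustrate the temperature point, here is a minimal sketch, assuming a typical setup where temperature is applied by the serving code after the forward pass. `model_logits` is a hypothetical stand-in for a real model, and the logit values are made up for illustration.

```python
import math

def model_logits(context):
    # Hypothetical stand-in for a forward pass. It receives only the
    # context tokens; no sampling setting is passed in.
    return [2.0, 1.0, 0.5]

def apply_temperature(logits, temperature):
    # Softmax over logits / temperature, done by the serving code,
    # outside the model.
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = model_logits(["Hello"])
sharp = apply_temperature(logits, 0.5)  # lower temperature, peakier distribution
flat = apply_temperature(logits, 2.0)   # higher temperature, flatter distribution
```

The same logits produce different sampling distributions depending on a value the model function never receives, which is why the model cannot report that value unless it is written into the prompt.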

🤖 RL agents are trained and evaluated in the same env. What performance gains could we achieve when training in a meta-learned synthetic env instead? 🌍 Excited to share our paper: "Discovering Minimal RL Environments" 📝 arxiv.org/abs/2406.12589 💻 github.com/keraJLi/synthe…

Trying to open a Markdown file on macOS

The degree to which you are awed by AI is perfectly correlated with how much you use AI to code.

Hit me up if you want to stop by our temporary Paris office (we are here until April 14). We are actively hiring researchers and engineers.