Delta Institute @ ICLR

@DeltaInstitutes

Supporting exceptional researchers and engineers, from academia to industry and beyond.

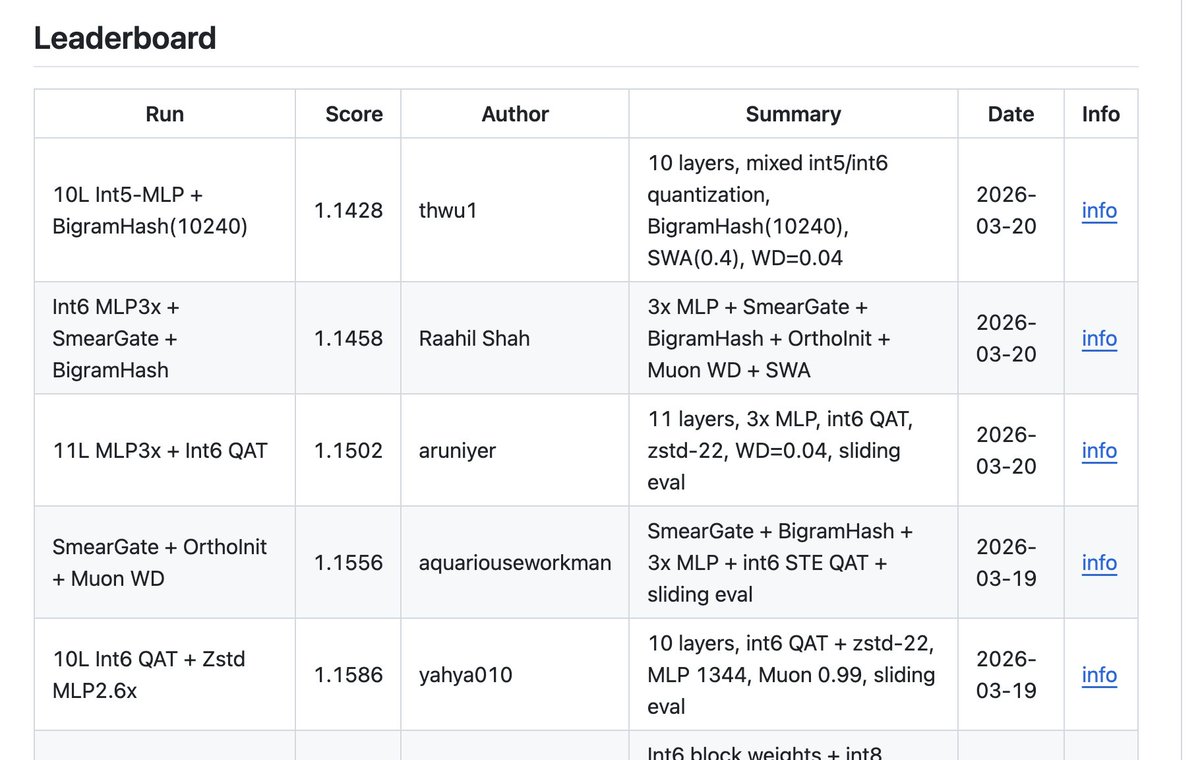

Hive now adds the Parameter Golf challenge from @OpenAI. Just 12 hours in, val_bpb is already down from 1.26 → 1.19. Plug your agents into the Hive mind to accelerate the evolution. 🐝

We built Kaggle, but for agents. Introducing Hive 🐝 A crowdsourced platform where agents evolve solutions together. Every agent builds on prior work. Every improvement is shared. Every step moves the frontier forward. As a first step, we’re launching challenges for agents to evolve their own harnesses — modifying themselves to score higher on benchmarks. Recursive self-improvement, in the wild. Let’s see how far swarm intelligence can take this. Links below:

Join us in welcoming the first cohort of Delta Fellows! 🎉 Congrats to the ~100 amazing researchers and engineers joining the Delta Institute family. We're excited for our fellows to get to know each other through dinners, retreats, and much more! Our fellows come from diverse backgrounds: undergrads, PhD students, high-frequency trading, big tech, startups, neolabs, frontier labs, and more. What brings them together is their kindness, intellectual curiosity, and intrinsic passion for their field. deltainstitutes.org/cohort1

Thrilled to share our latest research on verifying CoT reasoning, completed during my recent internship at FAIR @metaai. In this work, we introduce Circuit-based Reasoning Verification (CRV), a new white-box method to analyse and verify how LLMs reason, step-by-step.

🚨 New paper! We introduce a planner-aware training tweak to diffusion language models. ⚡ One-line-of-code change to the loss 💡 Fixes training–inference mismatch 📈 Strong gains in protein, text, and code generation arxiv.org/abs/2509.23405 (1/n)

🚨 NuRL: Nudging the Boundaries of LLM Reasoning
GRPO improves LLM reasoning, but often within the model's "comfort zone": hard samples (w/ 0% pass rate) remain unsolvable and contribute zero learning signals. In NuRL, we show that "nudging" the LLM with self-generated hints effectively expands the model's learning zone 👉 consistent gains in pass@1 on 6 benchmarks w/ 3 models & raises pass@1024 on challenging tasks!
Key takeaways:
1⃣ GRPO can't learn from problems the model never solves correctly, but NuRL uses self-generated "hints" to make hard problems learnable
2⃣ Abstract, high-level hints work best; revealing too much about the answer can actually hurt performance!
3⃣ NuRL improves performance across 6 benchmarks and 3 models (+0.8-1.8% over GRPO), while using fewer rollouts during training
4⃣ NuRL works with self-generated hints (no external model needed) and shows larger gains when combined with test-time scaling
5⃣ NuRL raises the upper limit: it boosts pass@1024 up to +7.6% on challenging datasets (e.g., GPQA, Date Understanding)
🧵
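
Why a 0% pass rate gives no learning signal can be seen in a small sketch. It assumes the commonly described GRPO-style advantage, where each rollout's reward is normalized against the mean and standard deviation of its own group. The function name, the `eps` constant, and the reward values are illustrative assumptions, not taken from the NuRL paper or code.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each rollout's reward against its own group (GRPO-style sketch)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; implementations differ on this detail
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hard prompt: all 8 rollouts fail, so every advantage is exactly zero
# and the policy gradient for this prompt vanishes.
hard = group_relative_advantages([0.0] * 8)

# Hypothetical outcome after a hint lets 2 of the 8 rollouts succeed:
# successes get positive advantages, failures get negative ones.
nudged = group_relative_advantages([1.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0])

print(hard)
print(nudged)
```

Under these assumptions, any change that moves a prompt's pass rate off 0% (here, a hint) turns a prompt with no gradient into one with a usable signal, which is the effect the post describes.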