DIMENSION

371 posts

@_DimensionCap

A first-of-its-kind firm partnering with founders at the interface of technology and life sciences to transform the trajectory of life on earth

.@_DimensionCap is leading a $40M financing for Congruence, leveraging frontier AI & molecular dynamics for a best-in-class small molecule corrector platform. This financing brings this high-powered, nimble team into the clinic w/ 3 unique assets. LFG! endpoints.news/congruence-sta…

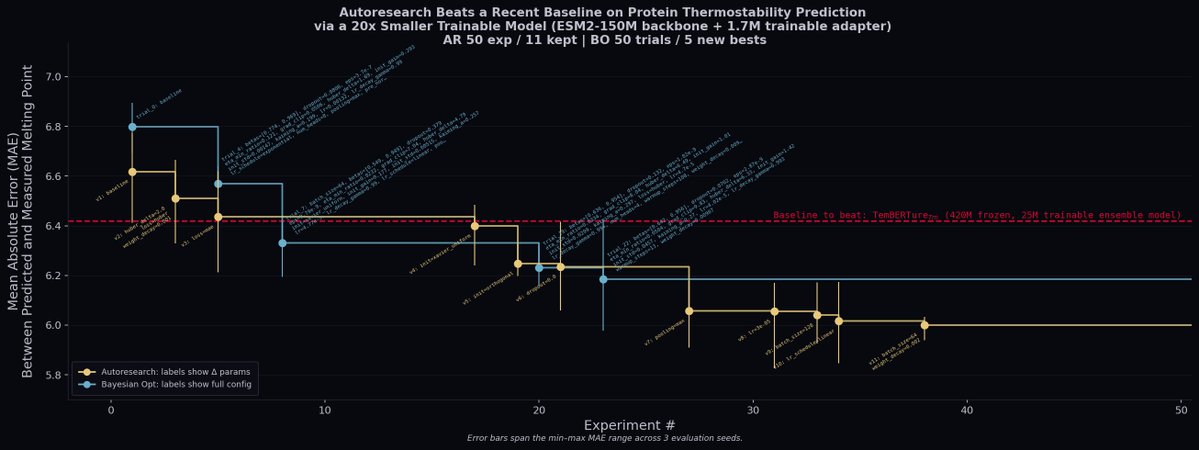

We @_DimensionCap ported @karpathy's autoresearch framework to biology. We let Claude run 50 experiments over the weekend on protein thermostability prediction via @modal. It beat a recent baseline (TemBERTure) using a 20x smaller model. Code + research blog later this week!

Today, we announce Tamarind Bio’s $13.6M Series A, led by @_DimensionCap, with participation from @ycombinator. Tamarind has now become the trusted platform for molecular AI inference, serving tens of thousands of scientists, including 8 of the top 20 pharma companies and dozens of biotechs and research organizations. Since last year, we’ve grown revenue 7x and are grateful to work with world-class biopharma R&D organizations like @Bayer, @Boehringer, and Adimab.

When we started two years ago, a few brave biotechs took a chance on us, hoping that this new company, providing easy access to computational drug discovery tools, would enable their scientists to apply foundation models to real therapeutic applications. Since then, our library of AI models has grown to hundreds: not just open-source tools, but also our users’ internal protocols, proprietary models trained on users’ own data, pipelines that chain multiple models together, and more!

Now, we are doubling down on our commitment to building the core AI and data infrastructure to power the next generation of medicines. We will continue to support open models, strengthening them with proprietary data, and prioritize access for all scientists. Join us as we build the infrastructure for all AI-powered drug discovery.

I joined @_DimensionCap. I make $1-50m+ investments. Pre-seed to Public. Infra, horizontal apps, life sciences. I’m Nikhil. Our website is dimensioncap.com. My email is a combination of those.