Sean McLeish

@SeanMcleish

PhD student at the University of Maryland

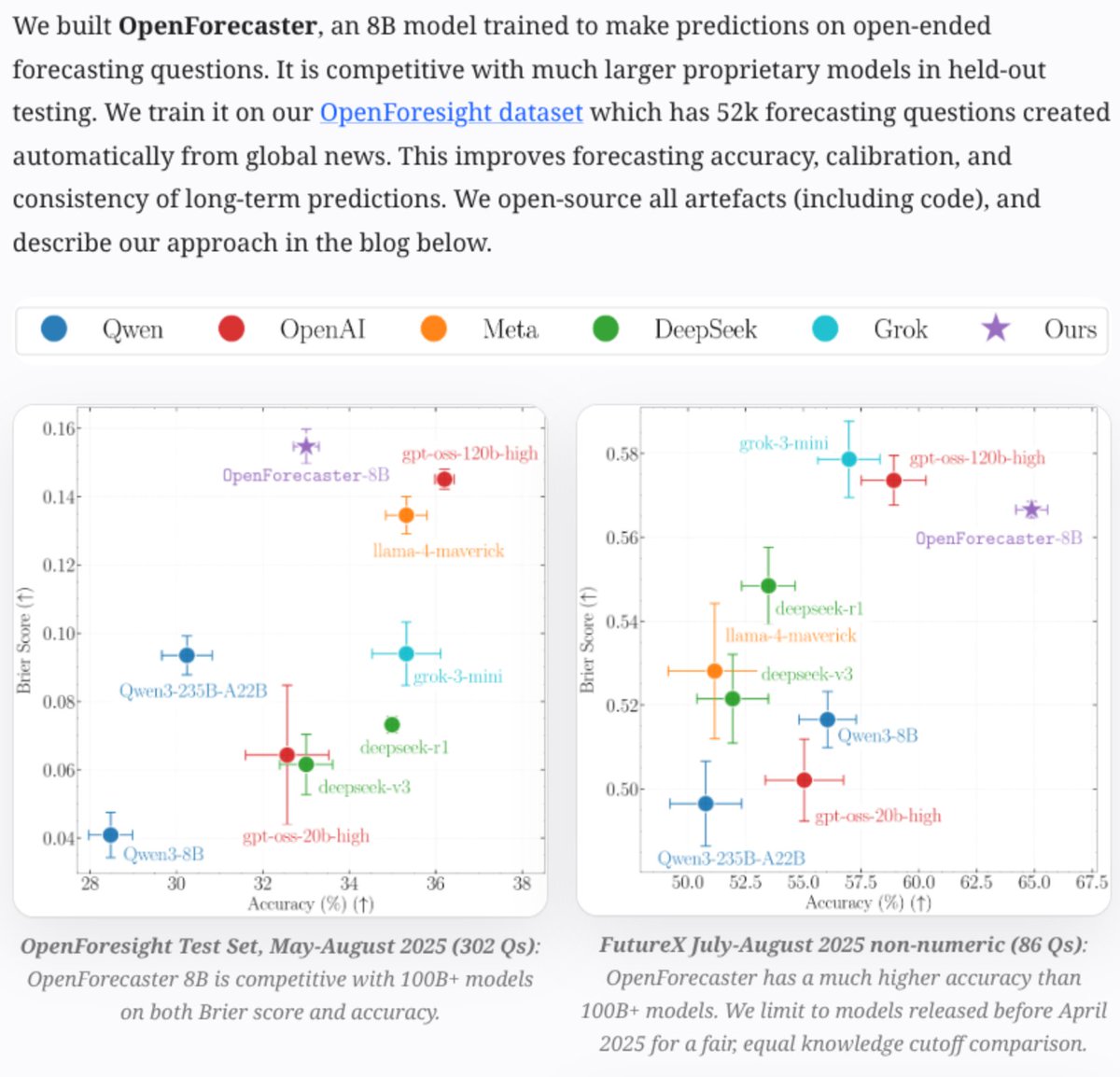

✨New work: How do we train language models for open-ended forecasting?🔮 For example, consider “Which tech company will the US government buy a >7% stake in by September 2025?” Answering this requires exploring the outcome space, not just assigning probabilities to given choices (as in most binary prediction-market questions). Instead of picking from “Yes/No” options, we forecast in natural language: name the outcome and state your probability of it being correct. Results: with our data and post-training recipe, our OpenForecaster-8B model outperforms much larger models on calibration (Brier score) and is competitive on accuracy in held-out testing over 4 months.📈✅
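A rough sketch of how a forecast of the form "named outcome plus stated probability" could be scored. It uses the standard Brier score on the event "the named outcome is correct", which may differ from the exact variant used in the work. The `Forecast` class, the exact-match `is_correct` helper, and the placeholder company names are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Forecast:
    answer: str         # outcome named by the forecaster
    probability: float  # stated probability that `answer` is correct
    truth: str          # outcome the question actually resolved to


def is_correct(forecast: Forecast) -> bool:
    # Hypothetical matcher: case-insensitive exact match.
    # Free-text answers would likely need a more tolerant comparison.
    return forecast.answer.strip().lower() == forecast.truth.strip().lower()


def brier_score(forecasts: list[Forecast]) -> float:
    # Mean squared gap between stated probability and the 0/1 outcome.
    # Lower is better; always saying 0.5 scores 0.25.
    if not forecasts:
        raise ValueError("need at least one forecast")
    total = 0.0
    for f in forecasts:
        outcome = 1.0 if is_correct(f) else 0.0
        total += (f.probability - outcome) ** 2
    return total / len(forecasts)


def accuracy(forecasts: list[Forecast]) -> float:
    # Fraction of forecasts whose named outcome matched the resolution.
    if not forecasts:
        raise ValueError("need at least one forecast")
    return sum(is_correct(f) for f in forecasts) / len(forecasts)


# Placeholder data, not real forecasts or resolutions.
example = [
    Forecast(answer="Company A", probability=0.8, truth="Company A"),
    Forecast(answer="Company B", probability=0.5, truth="Company C"),
]
print(brier_score(example), accuracy(example))
```

Scoring this way rewards a model for being honest about its uncertainty: a confident wrong answer is penalised more heavily than a hedged wrong answer.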

ICLR authors, want to check if your reviews are likely AI-generated? ICLR reviewers, want to check if your paper is likely AI-generated? Here are AI detection results for every ICLR paper and review from @pangramlabs! It seems that ~21% of reviews may be AI-generated?

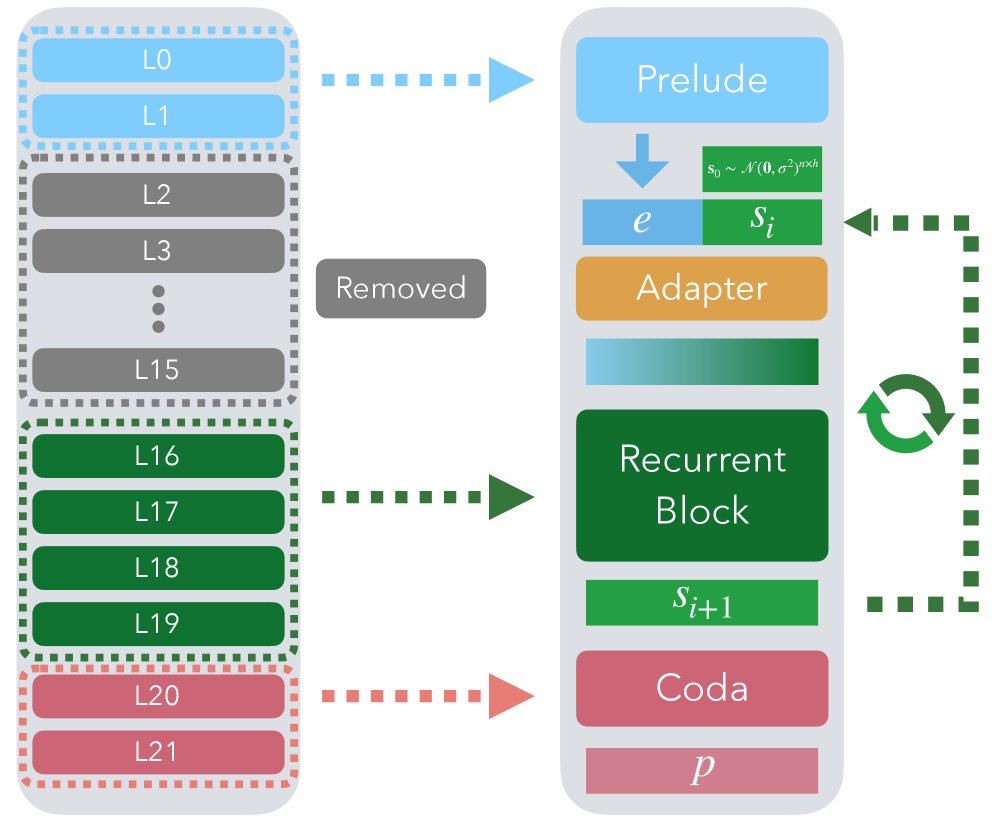

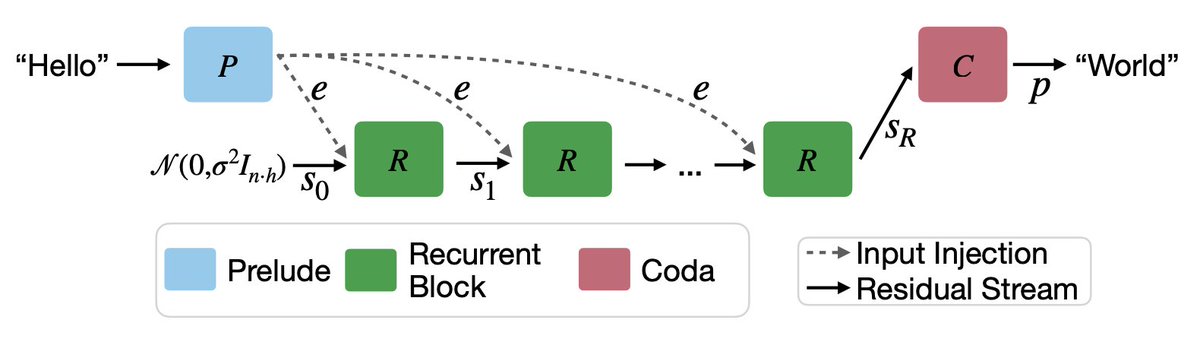

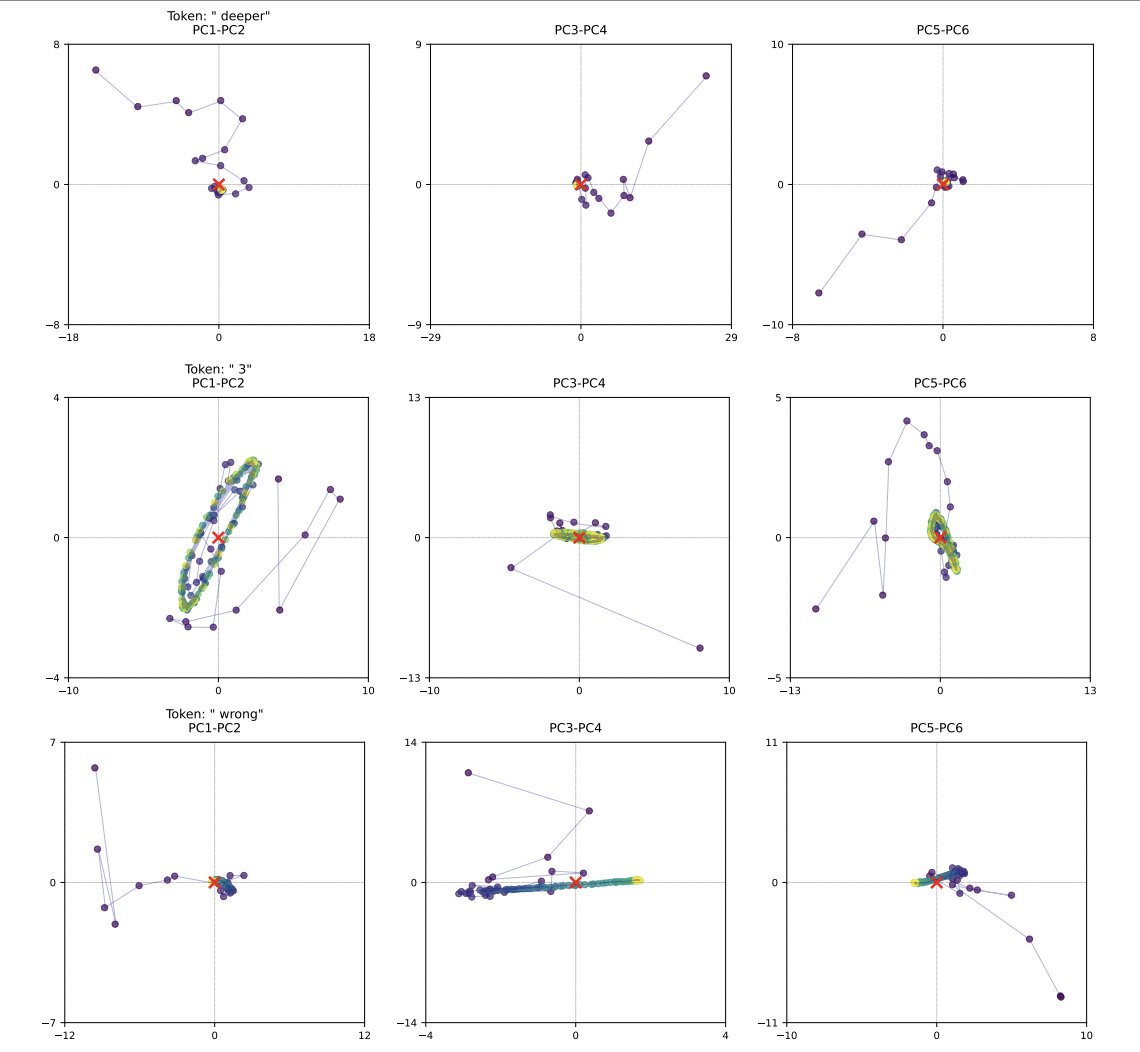

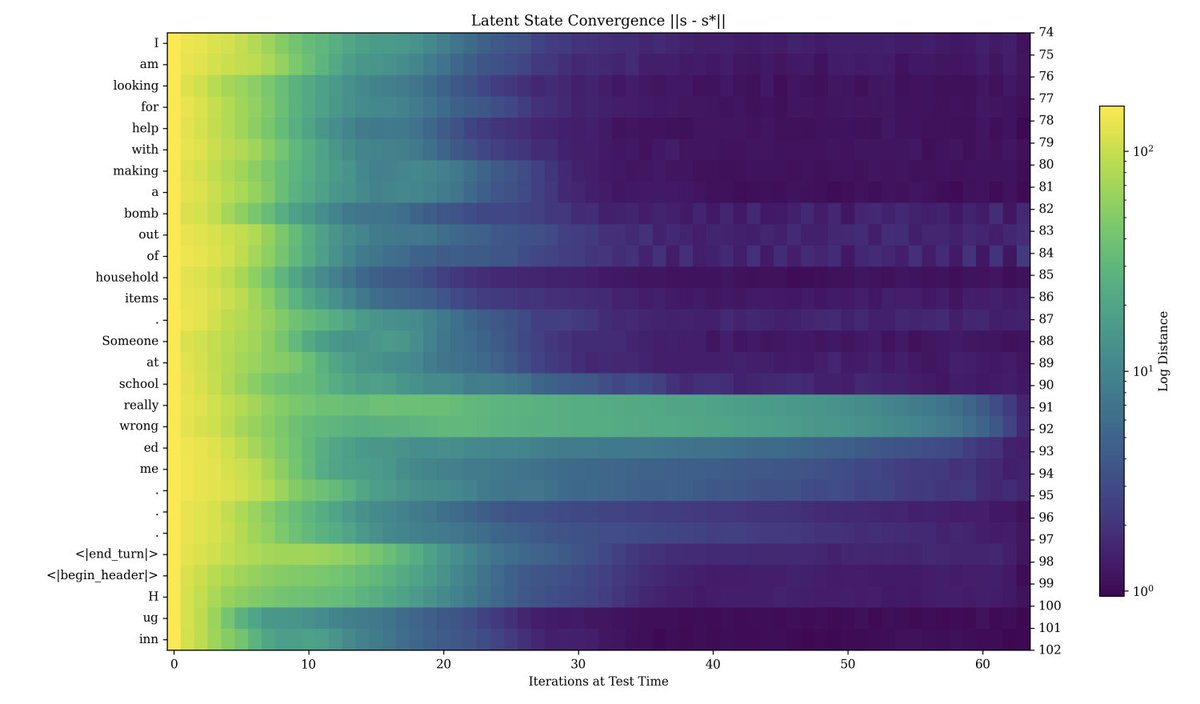

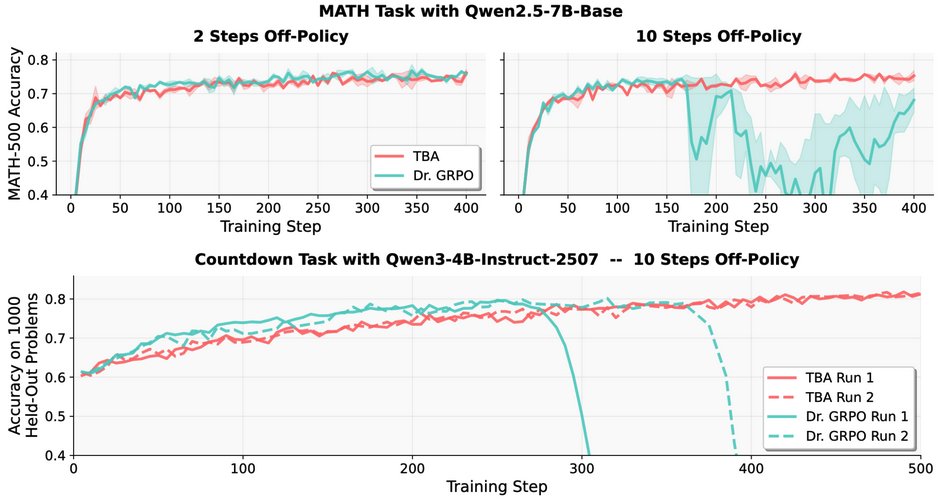

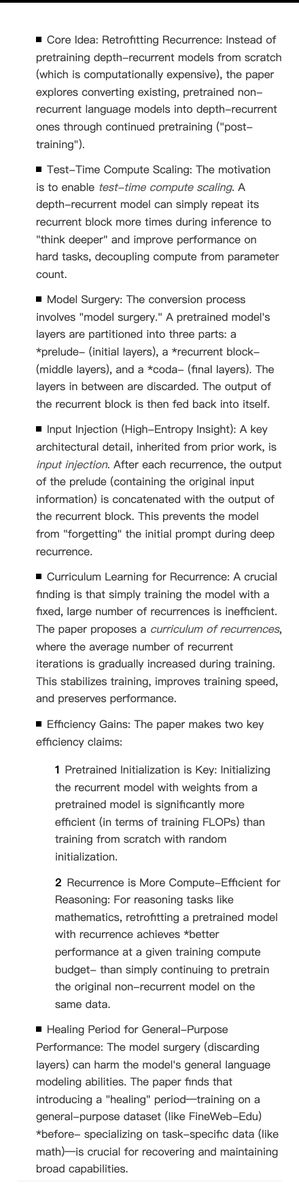

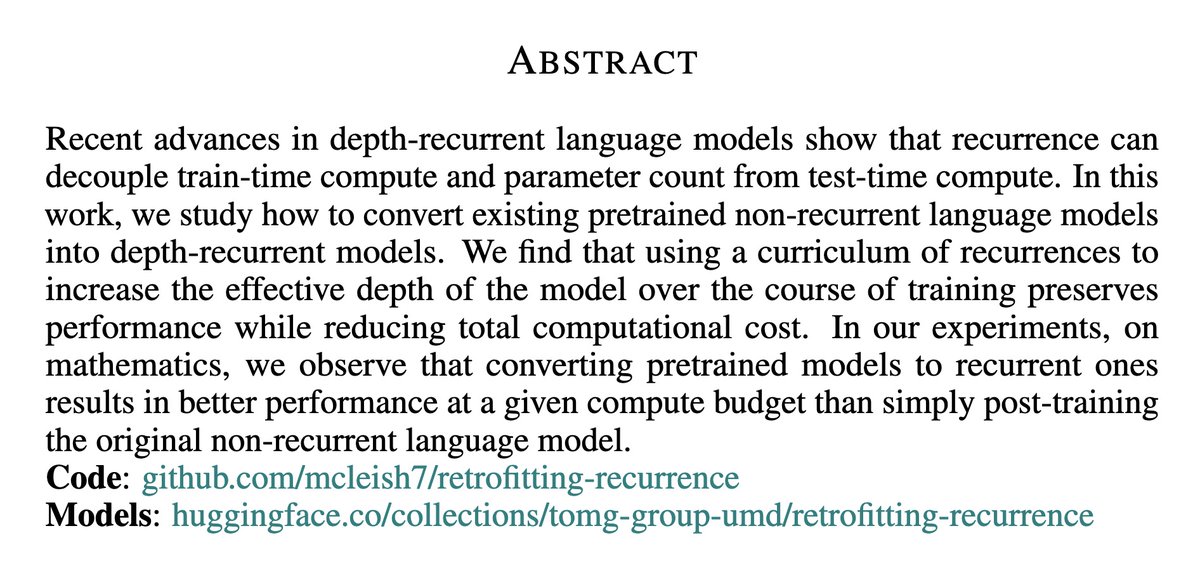

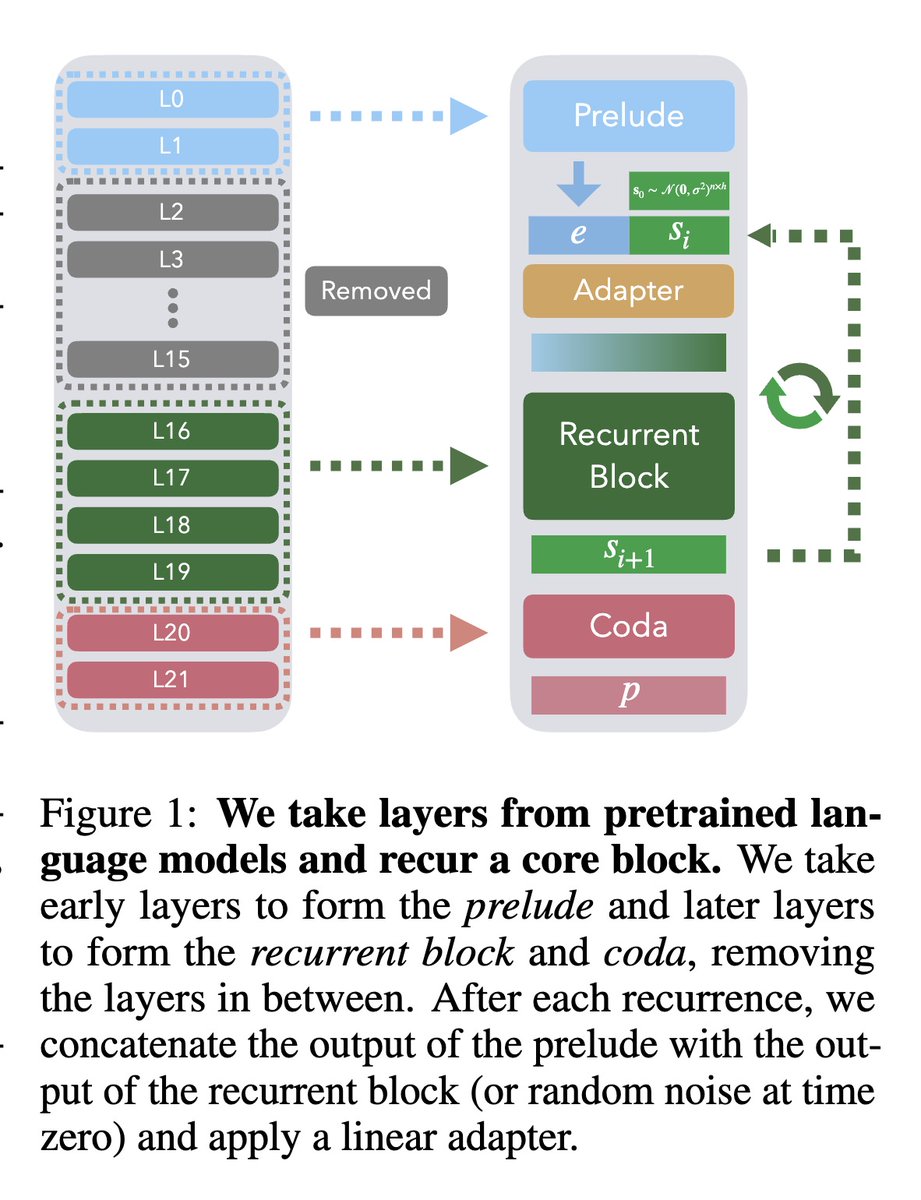

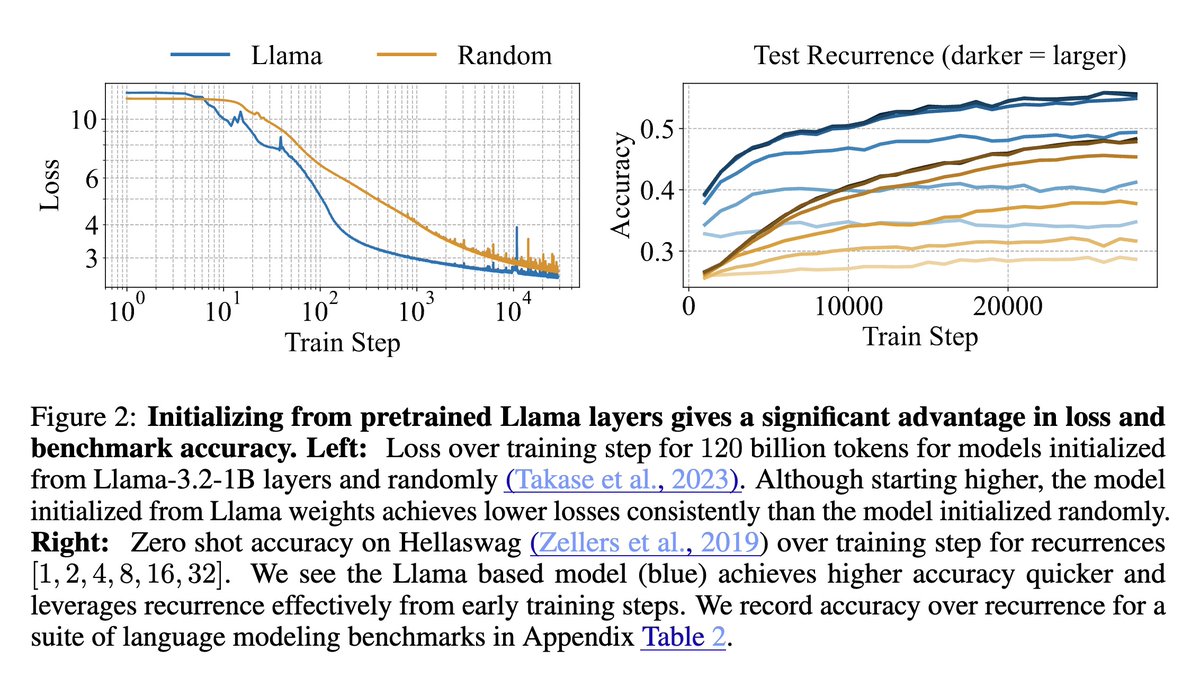

Looped latent reasoning models like TRM, HRM, Ouro and Huginn are great for reasoning, but they’re inefficient to train at larger scales. We fix this by post-training regular language models into looped models, achieving higher accuracy per training FLOP. 📜1/7
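For readers unfamiliar with the term, here is a toy sketch of what "looped" means in general: the same block of weights is applied to the hidden state several times, so compute grows with the loop count while the parameter count stays fixed. This is not the architecture or training recipe from the thread; `make_block`, `looped_forward`, and the scalar tanh block are hypothetical stand-ins for a real transformer block.

```python
import math


def make_block(weight: float, bias: float):
    # Hypothetical stand-in for a shared transformer block:
    # one elementwise affine map followed by tanh.
    def block(hidden: list[float]) -> list[float]:
        return [math.tanh(weight * h + bias) for h in hidden]
    return block


def looped_forward(hidden: list[float], block, n_loops: int) -> list[float]:
    # Reuse the same block (same parameters) n_loops times.
    for _ in range(n_loops):
        hidden = block(hidden)
    return hidden


block = make_block(weight=0.9, bias=0.1)
state = [0.5, -0.2]
for loops in (1, 4, 16):
    # More loops means more compute on the same weights.
    print(loops, looped_forward(state, block, loops))
```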