Daniel Kornev

@danielko

Agentic AI for Industrial Apps — auditable workflows, real ROI | CEO @AISuccessors (Techstars ’24)

Chrome just became massively more agent-friendly 🔥 Your real, signed-in browser can now be natively accessible to any coding agent. No extensions. No headless browser. No screenshots. No separate logins. Just one toggle to enable it. Check this out: developer.chrome.com/blog/chrome-de…

Macrohard or Digital Optimus is a joint xAI-Tesla project, coming as part of Tesla’s investment agreement with xAI. Grok is the master conductor/navigator with deep understanding of the world to direct digital Optimus, which is processing and actioning the past 5 secs of real-time computer screen video and keyboard/mouse actions. Grok is like a much more advanced and sophisticated version of turn-by-turn navigation software. You can think of it as Digital Optimus AI being System 1 (instinctive part of the mind) and Grok being System 2 (thinking part of the mind). This will run very competitively on the super low cost Tesla AI4 ($650) paired with relatively frugal use of the much more expensive xAI Nvidia hardware. And it will be the only real-time smart AI system. This is a big deal. In principle, it is capable of emulating the function of entire companies. That is why the program is called MACROHARD, a funny reference to Microsoft. No other company can yet do this.

Web UI agents aren’t one thing—they’re a set of design choices. I break down 5 reliability levers: packaging, observation, planning, action, privilege + why evals are still early. danielko.medium.com/dissecting-web… #AIAgents #AGI #AICoworkers

I have been testing GPT-5.4 Pro extensively (while it was on a flight and after the release). For my high-complexity, math-heavy tasks it is a step backward. My best guess is, the agentic harness pollutes the context: it keeps looking for skills, surprised the folder is empty.

The key to better agent memory is to preserve causal dependencies.

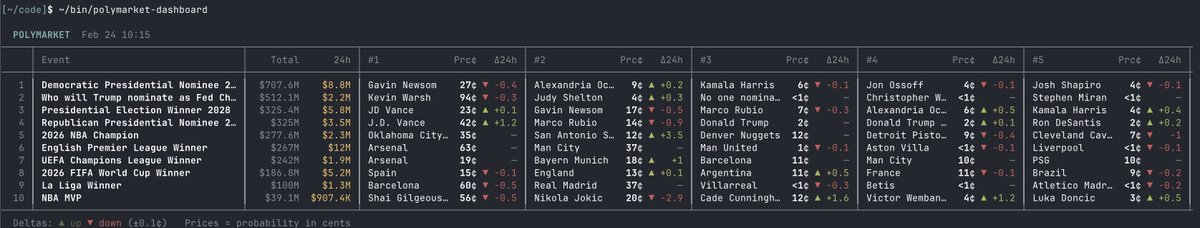
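One way to read "preserve causal dependencies" is that each memory entry records which earlier entries led to it, so recalling an entry also brings back the chain that explains it. The following is a minimal sketch under that assumption only; `MemoryItem`, `CausalMemory`, `caused_by`, and `recall` are hypothetical names invented for illustration and do not come from the post or from any particular library.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    key: str
    text: str
    # Keys of the entries that directly caused this one (hypothetical field).
    caused_by: list = field(default_factory=list)


class CausalMemory:
    """Toy agent memory that stores entries together with their causes."""

    def __init__(self):
        self.items = {}

    def add(self, key, text, caused_by=()):
        # Refuse dangling links so every recorded cause can be recalled later.
        missing = [k for k in caused_by if k not in self.items]
        if missing:
            raise KeyError(f"unknown causes: {missing}")
        self.items[key] = MemoryItem(key, text, list(caused_by))

    def recall(self, key):
        """Return the entry plus all of its causal ancestors, causes first."""
        ordered, seen = [], set()

        def visit(k):
            if k in seen:
                return
            seen.add(k)
            for parent in self.items[k].caused_by:
                visit(parent)
            ordered.append(self.items[k])

        visit(key)
        return ordered


memory = CausalMemory()
memory.add("obs", "Login page returned 403")
memory.add("plan", "Retry with a fresh token", caused_by=["obs"])
memory.add("act", "Requested a new token", caused_by=["plan"])
memory.add("note", "User prefers dark mode")  # unrelated entry

for item in memory.recall("act"):
    print(item.key, "-", item.text)
```

Recalling `act` here returns the observation and the plan that led to it, while the unrelated note stays out of the context.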

introducing polymarket cli - the fastest way for ai agents to access prediction markets. built with rust. your agent can query markets, place trades, and pull data - all from the terminal. fast, lightweight, no overhead

𝚗𝚙𝚖 𝚒 𝚌𝚑𝚊𝚝 Every company will have an agentic interface. But it won't just be on your turf, your .𝚌𝚘𝚖. It'll also be on @slack, @discord, @microsoftteams, @googleworkspace, and more. I was at a hackathon in SF the other day and I watched this unfold IRL. Many startups just presented their agents as Slack @ mentions. They incidentally tended to be the products that were easiest to grasp and adopt. Excited for the Chat SDK to do for the UI of Agents what @aisdk did for models.