Jason Cui

@JasonSCui

Partner @a16z investing in infra & AI | Prev product @databricks & @uber, founder at Jemi (YC S20, acq) | Technology optimist ☀️

Super fulfilled and energized after hosting this 7-hour video hackathon with our friends at @fal @MuxHQ @zakariaornot at Overshoot. We’ve seen cool demos ranging from real-time moderation, to virtual try-on, to live podcasting, to AI monitoring the situation. It’s a good reminder of how powerful video is as a computing interface and as the most versatile medium. Thank you to our judges @joshalphonse @Christos_antono @zakariaornot. And congrats to all the prize winners! 👏

.@MaikaThoughts says modern AI models work a lot like Memento: pre-training gives them the past, and everything after that needs scaffolding. "In Memento, the main protagonist has a form of this amnesia where he cannot form new memories. He uses sticky notes where he writes some of the notes to himself. He even tattoos some of the memories that he wants to imprint." "It kind of maps one to one to AI, how AI models work today." "We have the training phase where we basically encompass all of the world's knowledge, and that part is what we call pre-training. After the training phase, we basically have the cutoff date after which point we deploy the models into the world." "The model is basically frozen." "We use retrieval mechanisms like RAGs, we have the system prompt that essentially serves as a tattoo."
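A minimal sketch of the analogy in the post above, assuming a frozen model whose only new "memories" are a fixed system prompt (the tattoo) and retrieved notes (the sticky notes). The names `SYSTEM_PROMPT`, `NOTES`, `retrieve`, and `build_prompt` are hypothetical, and the word-overlap scoring is a toy stand-in for a real retrieval system, not anything described in the post.

```python
# Hypothetical "tattoo": fixed instructions sent with every request.
SYSTEM_PROMPT = "You are a helpful assistant. Trust the notes over your memory."

# Hypothetical "sticky notes": facts written after the training cutoff.
NOTES = [
    "The project release date moved to March.",
    "Lunch is at noon on Fridays.",
    "The release branch is named stable.",
]


def retrieve(query, notes, k=2):
    """Return the k notes sharing the most lowercase words with the query."""
    query_words = set(query.lower().split())
    ranked = sorted(
        notes,
        key=lambda note: len(query_words & set(note.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, notes):
    """Assemble what the frozen model would see: tattoo, notes, question."""
    context = "\n".join(f"- {note}" for note in retrieve(query, notes))
    return f"{SYSTEM_PROMPT}\n\nNotes:\n{context}\n\nQuestion: {query}"


print(build_prompt("when is the project release", NOTES))
```

The model weights never change in this sketch; only the text placed in front of the question does, which is the point of the Memento comparison.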

SpaceXAI and @cursor_ai are now working closely together to create the world’s best coding and knowledge work AI. The combination of Cursor’s leading product and distribution to expert software engineers with SpaceX’s million H100 equivalent Colossus training supercomputer will allow us to build the world’s most useful models. Cursor has also given SpaceX the right to acquire Cursor later this year for $60 billion or pay $10 billion for our work together.

We @a16z are co-hosting a video hackathon with Overshoot @zakariaornot @fal @MuxHQ! 4 hours to hack on real-time video, generative media, and new workflows with the best tools. Space is limited & sign up below (approval required) 👇 w/ @JenniferHli @venturetwins @JasonSCui

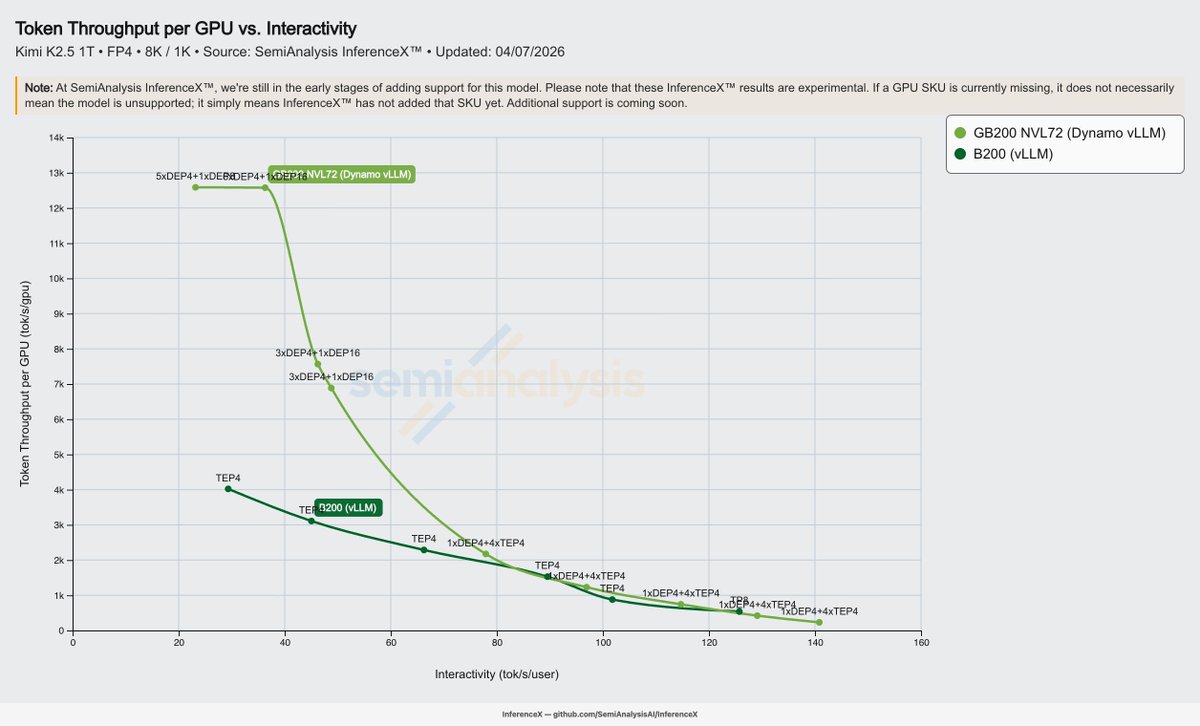

🎉 Congrats to the Moonshot team on Kimi K2.6, with day-0 support on vLLM 0.19.1.

• 1T total / 32B active MoE: 384 experts, 8 routed + 1 shared
• MLA attention, 256K context
• Native multimodal: MoonViT vision encoder + video input
• Native INT4 quantization
• Interleaved thinking with multi-step tool calls, for long-horizon agent workflows

🔗 recipes.vllm.ai/moonshotai/Kim…
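For readers unfamiliar with the "384 experts, 8 routed + 1 shared" line, here is a toy sketch of top-k expert routing. Only the counts 384 and 8 come from the post. Everything else is an assumption for illustration: the softmax over the selected logits, the shared expert being applied to every token, the scalar toy experts, and the names `route_token` and `moe_layer`. It is not the model's actual routing code.

```python
import math
import random

NUM_EXPERTS = 384  # routed experts, from the post
TOP_K = 8          # routed experts picked per token, from the post


def route_token(router_logits, top_k=TOP_K):
    """Return (expert_index, weight) pairs for the top_k highest logits."""
    top = sorted(
        range(len(router_logits)),
        key=lambda i: router_logits[i],
        reverse=True,
    )[:top_k]
    # Assumption: normalize with a softmax over the selected logits only.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_layer(x, router_logits, routed_experts, shared_expert):
    """Add the always-on shared expert to the weighted routed experts."""
    out = shared_expert(x)  # assumption: shared expert sees every token
    for idx, weight in route_token(router_logits):
        out += weight * routed_experts[idx](x)
    return out


rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
# Toy scalar experts: expert i multiplies its input by (i + 1) / NUM_EXPERTS.
experts = [lambda x, s=(i + 1) / NUM_EXPERTS: s * x for i in range(NUM_EXPERTS)]
shared = lambda x: x

chosen = route_token(logits)
y = moe_layer(2.0, logits, experts, shared)
print(len(chosen), y)
```

Each token touches only 9 of the 385 expert functions here, which is the general idea behind a large total parameter count with a much smaller active count.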