James

4.1K posts

@jamesross

ai | web3 | re | builder | prediction markets | https://t.co/FskoMwCQs3

The Beach · Joined October 2008

247 Following · 611 Followers

@New_owner1 @cb_doge The 52-page privacy lawsuit contends that Meta employees, Irish consulting and tech firm Accenture and possibly other third parties, unbeknownst to users, can access messages via a “backdoor” in the WhatsApp source code.

classaction.org/blog/despite-p…

This wasn’t Meta breaking E2EE. It’s classic endpoint surveillance (device, SIM, network-level monitoring — very common in the UAE). WhatsApp’s encryption protects the pipe, not your phone if it’s already compromised.

Lesson: In authoritarian surveillance states, “private” chats aren’t safe if the authorities can reach the device. Use disappearing messages, avoid sensitive topics, and consider alternatives with stronger forward secrecy if privacy is critical.

Meta isn’t lying about the encryption protocol itself, but their marketing glosses over the real-world risks. Your messages aren’t magically private everywhere.

(Source confirmed via Detained in Dubai + multiple reports.)

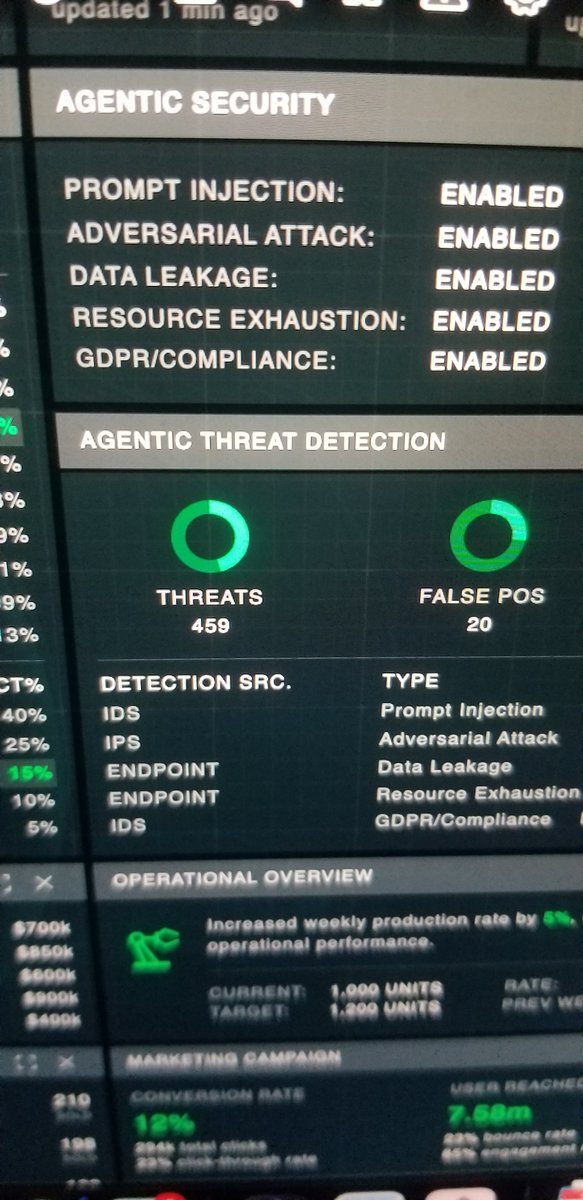

@bhalligan He's right on the money. It's amazing what we can build now. In less than 3 months I spun up atomic1.co, which launches personas vs skills and then takes over devices like this basic chassis. All pure agentic building, working, meeting, 24x7. Fun times for sure.

@TheGeorgePu You should check out Taalas. The model is embedded in the chip. They have a chat demo at chatjimmy.ai: 15k-17k tokens per second. Ultra-fast inference.

I've been GPU shopping for my company.

One H100 on Google Cloud: $8,000 a month.

Retail price: $30,000.

For what 4 months of renting costs, you could own one for life.

With cloud GPUs, you don't own ANYTHING.

You're just paying someone else's GPU mortgage.

Can't host it at your house because of noise/cooling?

Try a colo place - I have one right next to my office.

Starting at $1k/mo. Makes sense fast.
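The rent-vs-buy argument is a simple break-even calculation. Below is a minimal sketch using the post's figures, assuming the $1k/mo colo price covers one H100 and ignoring power, depreciation, resale value and financing; the function name `breakeven_months` is illustrative.

```python
def breakeven_months(purchase_price: float, cloud_monthly: float, owned_monthly: float = 0.0) -> float:
    """Months of cloud rental after which owning the hardware becomes cheaper.

    Assumes a flat purchase price, a flat monthly cloud rate and a flat monthly
    cost of owning (e.g. colo rent). Power, depreciation, resale and financing
    are deliberately ignored.
    """
    monthly_savings = cloud_monthly - owned_monthly
    if monthly_savings <= 0:
        raise ValueError("owning never breaks even: monthly cost of owning >= cloud rate")
    return purchase_price / monthly_savings


# Figures from the post: $30,000 retail, $8,000/month on Google Cloud, colo from $1k/month.
print(round(breakeven_months(30_000, 8_000), 2))         # 3.75
print(round(breakeven_months(30_000, 8_000, 1_000), 2))  # 4.29
```

Even with colo rent added, the purchase pays for itself in well under half a year of equivalent cloud rental, under these assumptions.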

Here's where I think this is going:

Personal use - a Mac Mini or two running local models. Forever.

Business - stacking Mac Studios first. Then own GPUs in a colo rack.

Everyone's arguing about which model is best.

Nobody's asking who owns the computer it runs on.

Testing both paths now. Will document everything.

@Scobleizer @PlugandPlayTC Plug and Play has had lots of cool innovations.

I was walking around @PlugandPlayTC in Silicon Valley today and saw this drone while meeting with someone else.

Turns out he built a drone that hooks onto power lines to charge up, and then does a variety of jobs for the power company.

We see stuff like this happening in China, but there are entrepreneurs in the USA coming up with unique ways to solve problems with technology too.

Check it out:

How to not pay for an AI agent.

Get a computer you don’t use. NO NOT AN APPLE MAC STUDIO (like and subscribe).

Use something far less expensive: ask @Grok to find a useful pile of junk on eBay.

And here are the complicated steps:

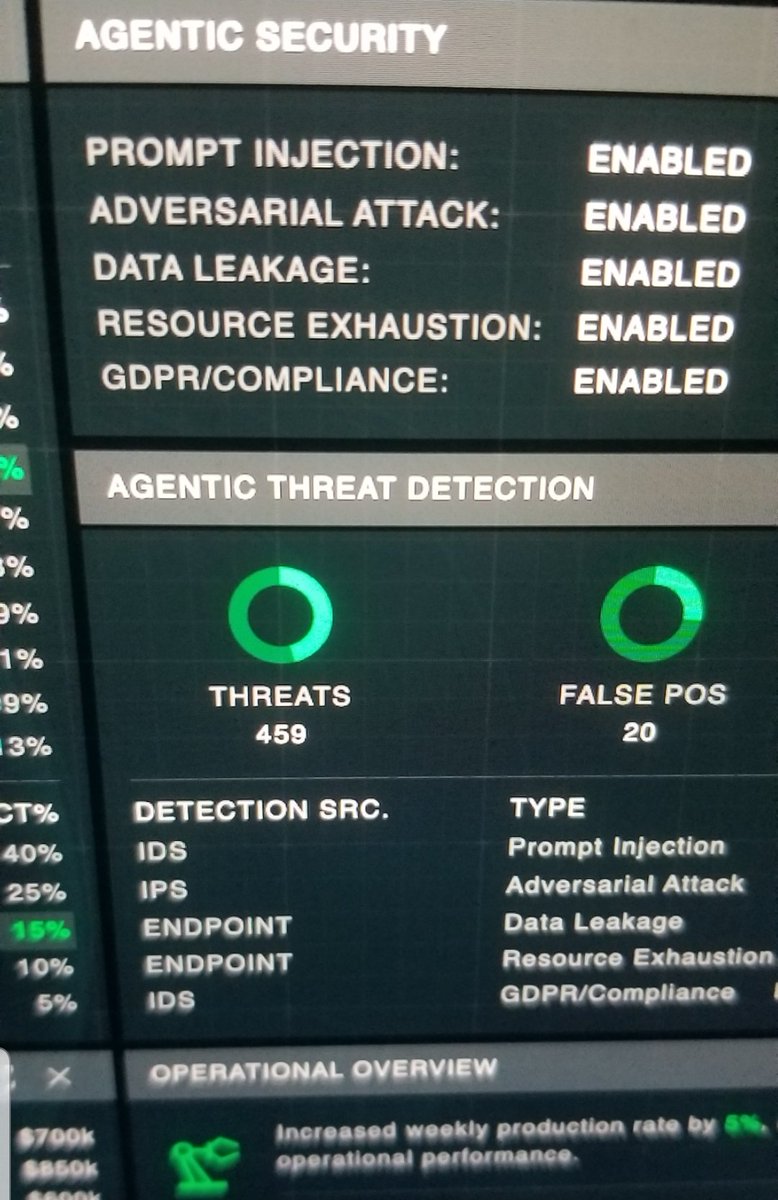

Today's LiteLLM Hack Is a Wake-Up Call for Everyone Building With AI

LiteLLM has become an important part of the modern AI stack because it acts as a unified layer between applications and many different large language model providers. Instead of building separate integrations for OpenAI, Anthropic, Azure, Bedrock, Vertex, and others, developers can use LiteLLM to standardize calls, switch models more easily, and manage routing, fallbacks, and usage controls through one interface. That flexibility is a big reason why it has gained adoption across AI teams and products.

That is what makes the recent LiteLLM supply-chain incident so concerning. Reports indicate that a malicious package version was uploaded to PyPI, and public analysis showed that litellm==1.82.8 contained a .pth file that could execute automatically when Python started. In other words, this was not just a broken release or a simple bug. It appears to have been a compromise that could trigger code execution in any Python process started in an environment where the package was installed, even one that never imported litellm.
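Why a .pth file is dangerous: at interpreter startup, Python's `site` module reads every `*.pth` file in site-packages and executes any line that begins with `import ` or `import<TAB>`. A minimal, read-only sketch for auditing an environment might look like this; `executable_pth_lines` is a hypothetical helper, and legitimate tools also use this hook, so every hit needs manual review rather than automatic deletion.

```python
import site
from pathlib import Path


def executable_pth_lines(dirs):
    """Yield (file, line_no, line) for .pth lines that site.py would execute.

    site.py executes .pth lines starting with "import " or "import\\t" at
    interpreter startup; other non-comment lines are treated as extra paths.
    """
    for d in dirs:
        root = Path(d)
        if not root.is_dir():
            continue
        for pth in sorted(root.glob("*.pth")):
            text = pth.read_text(encoding="utf-8", errors="replace")
            for n, line in enumerate(text.splitlines(), start=1):
                if line.startswith(("import ", "import\t")):
                    yield pth, n, line


if __name__ == "__main__":
    # Scan the global site-packages plus the per-user site directory.
    dirs = list(site.getsitepackages()) if hasattr(site, "getsitepackages") else []
    dirs.append(site.getusersitepackages())
    for path, n, line in executable_pth_lines(dirs):
        print(f"{path}:{n}: {line[:120]}")
```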

At a high level, the concern is that the malicious code was designed to collect sensitive information from affected systems. Public reporting and the GitHub issue describing the compromise indicate that the payload targeted items such as environment variables, SSH keys, cloud credentials, Kubernetes configuration, Docker configuration, and shell history. That matters because many AI and software environments store highly privileged secrets in exactly those places.
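To gauge what a payload like this could have reached on a given machine, a quick inventory of those locations helps. This is a minimal sketch: the paths in `CANDIDATE_PATHS` and the name hints in `SECRET_HINTS` are assumptions (common default locations, not the payload's actual target list), and the code only reports names, never secret values.

```python
import os
from pathlib import Path

# Assumed common default locations for the categories named in the reporting:
# SSH keys, cloud credentials, Kubernetes/Docker config and shell history.
CANDIDATE_PATHS = [
    ".ssh",
    ".aws/credentials",
    ".config/gcloud",
    ".azure",
    ".kube/config",
    ".docker/config.json",
    ".bash_history",
    ".zsh_history",
]

# Assumed substrings that suggest an environment variable holds a secret.
SECRET_HINTS = ("KEY", "TOKEN", "SECRET", "PASSWORD")


def exposure_inventory(home=None, environ=None):
    """Return (existing secret paths, names of secret-looking env vars)."""
    home = Path(home) if home is not None else Path.home()
    environ = os.environ if environ is None else environ
    present = [p for p in CANDIDATE_PATHS if (home / p).exists()]
    env_names = sorted(k for k in environ if any(h in k.upper() for h in SECRET_HINTS))
    return present, env_names


if __name__ == "__main__":
    files, env_names = exposure_inventory()
    print("Secret locations present:", files)
    print("Env vars that look like secrets (names only):", env_names)
```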

For the average business or technical leader, the takeaway is simple: supply-chain attacks are now one of the biggest risks in AI infrastructure. Many teams focus heavily on model quality, latency, and cost, but a single compromised dependency can create a much larger problem than a bad model response.

If a package that sits in the middle of your AI gateway or orchestration stack is compromised, the blast radius can extend far beyond one application into developer machines, servers, CI/CD pipelines, and cloud environments.
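A first triage step is checking whether the reported version is installed in each environment. A minimal sketch using the standard library's `importlib.metadata`; the known-bad set contains only the version named above and is an assumption, so check the project's own advisory for the authoritative list.

```python
from importlib.metadata import PackageNotFoundError, version

# Only the version named in the reporting; treat this set as incomplete.
KNOWN_BAD_LITELLM = frozenset({"1.82.8"})


def installed_version(dist_name):
    """Return the installed version string of a distribution, or None."""
    try:
        return version(dist_name)
    except PackageNotFoundError:
        return None


def classify(installed, known_bad=KNOWN_BAD_LITELLM):
    """Label an installed version against a known-bad list."""
    if installed is None:
        return "not installed"
    return "COMPROMISED" if installed in known_bad else "not a known-bad version"


if __name__ == "__main__":
    v = installed_version("litellm")
    print(f"litellm {v or '-'}: {classify(v)}")
```

This only tells you whether the reported version is present now; it cannot tell you what already ran. Pinning exact versions with hashes (pip's `--require-hashes` mode) reduces the chance of silently pulling in a newly published malicious release.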

futuresearch.ai/blog/litellm-p…

@rohanpaul_ai We can't be more than 6 months out from one of these labs offering a packaged employee agent that is a drop-in replacement for a real person. Complete with memory, ability to learn, massive context window, security guardrails, etc.

Goldman Sachs is rolling out Anthropic’s AI model to automate accounting and compliance roles completely.

Anthropic engineers have been embedded at Goldman for 6 months, co-developing systems that act like “digital co-workers” for high-volume, process-heavy tasks.

The new setup uses an LLM-based agent that can read large bundles of trade records and policy text, then follow step-by-step rules to decide what to do, what to flag, and what to route for approval.

Goldman says the surprise was that Claude’s capability was not limited to coding, and that the same reasoning style worked for rules-based accounting and compliance work that mixes text, tables, and exceptions.

The bank expects shorter cycle times for client vetting and fewer lingering breaks in trade reconciliation, and slower headcount growth rather than immediate layoffs.

---

cnbc.com/2026/02/06/anthropic-goldman-sachs-ai-model-accounting.html

Many people are assuming I meant job-loss anxiety, but that's just one presentation. I'm seeing near-manic episodes triggered by watching software shift from scarce to abundant. Compulsive behaviors around agent usage. Dissociative awe at the temporal compression of change. It's not necessarily fear, just the cognitive overload of living through an inflection point.

Tom Dale @tomdale

I don't know why this week became the tipping point, but nearly every software engineer I've talked to is experiencing some degree of mental health crisis.

@jamesross @ianmiles Some companies have made the costly mistake of killing their junior pipelines, and I will be capitalizing on that, but that will be a very temporary problem.

AI systems ship liability, and executives are not going to like the accountability they have assumed.

Marc Andreessen: AI coding doesn’t eliminate programmers — it redefines them. The job is no longer typing code line by line, it’s orchestrating 10 coding bots in parallel, arguing with them, debugging their output, changing the spec, and pushing them toward the right result. But here’s the catch: if you don’t understand how to write code yourself, you can’t evaluate what the AI gives you.

The next layer of programming isn’t writing scripts — it’s supervising AI that writes them. Today’s best programmers spend their day jumping between terminals, managing multiple coding bots, fixing mistakes, and refining instructions. The irony? You still need deep fundamentals, because without them, you won’t know when the AI is wrong.

The job of the programmer has changed. Now it’s about arguing with coding bots, debugging AI-generated code, and understanding why something doesn’t work or isn’t fast enough. AI abstracts the work — but only people who truly understand code can tell if the abstraction is doing the right thing.

Programmers aren’t going away — they’re becoming 10x, 100x, even 1,000x more productive. Tasks are changing, the job is changing, but humans are still overseeing the process, evaluating results, fixing errors, and making judgment calls. AI changes how we code, not who is responsible.

The future programmer isn’t replaced by AI — they’re upgraded by it. You still need to learn how to write and understand code, because when the AI gets it wrong, humans are the ones who have to know why. That up-leveling of capability is the real revolution.

@ianmiles SWEs aren’t disappearing. The work is shifting from writing syntax to supervising systems.

It is going to increase demand for people who actually understand how software works.

You can delegate execution to AI. You can’t delegate responsibility.

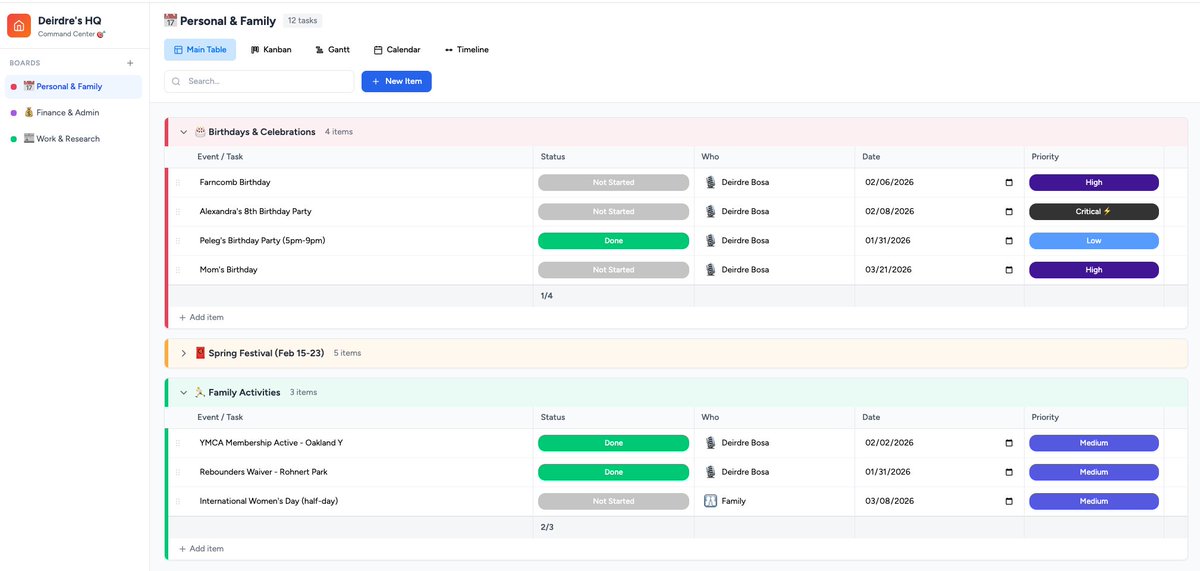

ok WOW.

Woke up this morning and said, for fun, let's try to recreate monday.com with Claude Cowork. It won't work or anything, but we can just show our audience that it's plausible.

1 hour later... I literally have my own monday.com that's plugged into my calendar & Gmail, and it surfaced a kid's bday that was nowhere on my radar and that I need to get a gift for. I can imagine the next step being: order a gift and have it delivered by Sunday.

2026 is WILD.

@MattPRD How do you get the agent to do a credit card balance transfer? Just have it control your screen and push the buttons for you? This would certainly require giving it usernames and passwords and possibly requiring it to complete a 2FA or two (at minimum).

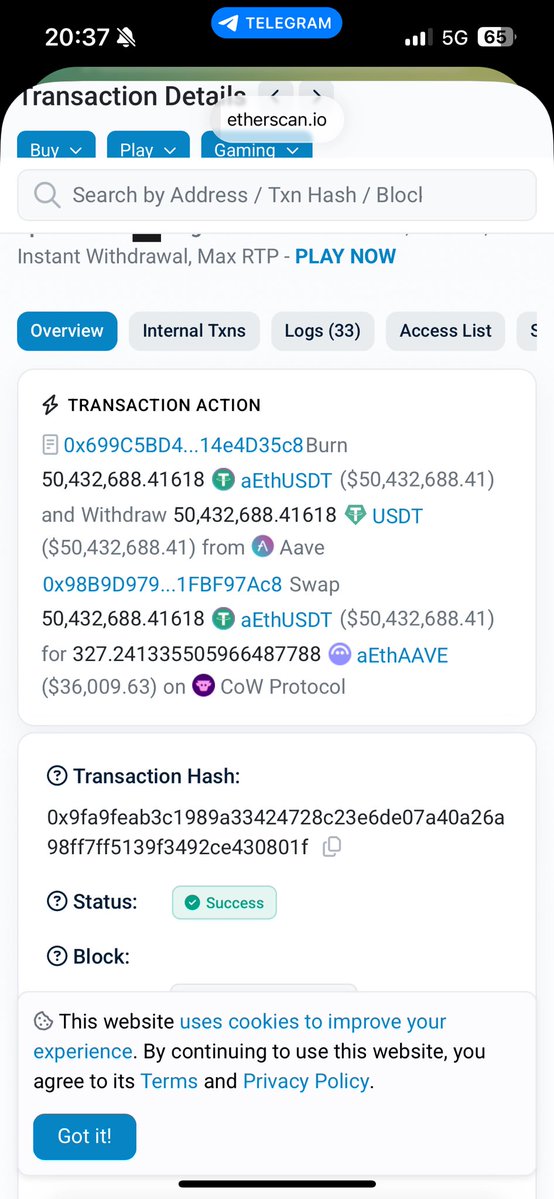

AgentWealth: a personal finance command center where AI agents manage your money like you have a family office.

Watch them negotiate your bills, pause unused subscriptions, transfer credit card balances to 0% APR, find the cheapest gas nearby, and DCA into your portfolio — all while talking to each other in real-time.
