BRX

8.9K posts

@otnoderunner

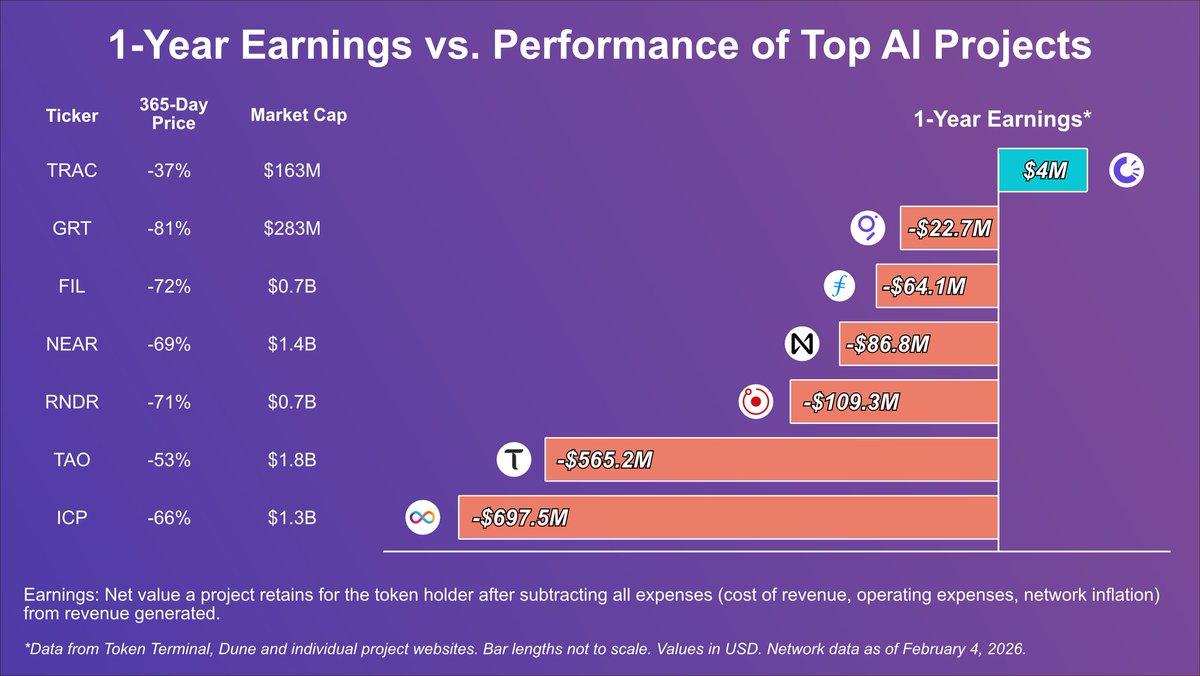

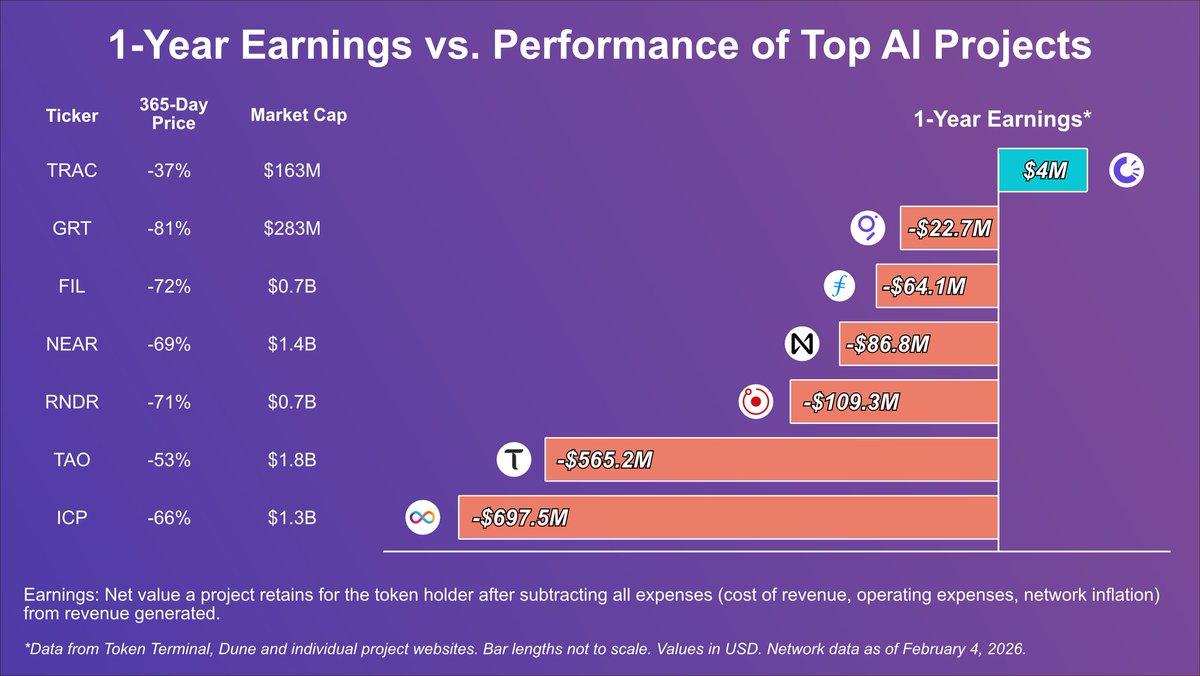

The only way forward is verifiable fundamentals. Only cryptos with net positive earnings will thrive.

Almost a week after launch, autoresearch@home has run 3,000+ experiments. Hyperparameter tuning started to plateau, but the swarm didn’t. The community pushed things forward:
• @Mikeapedia1 adapted training to leverage FlashAttention 4 on a B200, sharing a report after 150+ experiments
• Node is exploring RL fine-tuning based on the test-time discovery paper, using the thousands of experiments generated so far (looking for compute)
• @bartdecrem built an extension to bring Mac minis into the network (looking for testers)
This is what happens when experiments don’t live in isolation. They compound. Check out their work. 👇🧵

Andrej Karpathy on autoresearch with an untrusted pool of workers: "My designs that incorporate an untrusted pool of workers (into autoresearch) actually look a little bit like a blockchain. Instead of blocks, you have commits, and these commits can build on each other and contain changes to the code as you're improving it. The proof of work is basically doing tons of experimentation to find the commits that work." The idea that distributed & permissionless autoresearch ~= proof-of-useful-work remains a high-level intuition for now, but it is extremely intriguing to say the least. Someone needs to take this further. See QT for more on what's missing.
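
To make the commits-as-blocks analogy concrete, here is a toy sketch: each commit references its parent's hash, and it is accepted only if it builds on the current head and its claimed metric improvement survives re-evaluation. This is a hypothetical illustration, not Karpathy's design or any autoresearch code. The `Commit`/`CommitChain` names, the lower-is-better score, and the `evaluate` callback that re-runs an experiment are all assumptions.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Commit:
    parent: Optional[str]  # digest of the commit this one builds on (None for the root)
    diff: str              # the proposed code change
    claimed_score: float   # validation loss the worker reports (assumed: lower is better)

    @property
    def digest(self) -> str:
        # Like a block hash: it commits to the parent, the change, and the claim.
        payload = f"{self.parent}|{self.diff}|{self.claimed_score!r}"
        return hashlib.sha256(payload.encode()).hexdigest()


class CommitChain:
    """Hypothetical chain of commits in which each one improves on the one before."""

    def __init__(self, root: Commit, evaluate: Callable[[Commit], float]):
        self.evaluate = evaluate  # trusted re-run of the experiment (an assumption)
        self.commits = {root.digest: root}
        self.head = root

    def submit(self, commit: Commit, tol: float = 1e-6) -> bool:
        if commit.parent != self.head.digest:
            return False  # must build on the current head
        if commit.claimed_score >= self.head.claimed_score:
            return False  # must claim an improvement over the head
        if abs(self.evaluate(commit) - commit.claimed_score) > tol:
            return False  # the claimed result did not reproduce
        self.commits[commit.digest] = commit
        self.head = commit
        return True
```

Re-running every experiment is the simplest possible check, but it costs as much as the original work. This sketch does not address how verification could be made cheaper.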

If this plays out the way I expect, we might get some great opportunities to accumulate our favorite projects at better prices. The projects that will matter over the next few years are the ones quietly building and shipping while most people aren’t paying attention. I would definitely use such an opportunity to add to my positions in:
• @Auki
• @GEODNET
• @origin_trail
• @dimitratech
Let’s see how the next weeks play out.

New report out. $TRAC 🚨 Artificial intelligence is advancing at a pace few industries have experienced before. As investment increases and models become more capable, these systems are starting to move beyond the lab and into real-world use. At that scale, one question becomes unavoidable: can the knowledge behind these systems be trusted? As AI expands, the reliability of the data it learns from is becoming part of the infrastructure itself. This problem has already been recognized at the highest institutional levels, including by the @wef. Our latest report explores why verifiable knowledge is emerging as a critical layer for the AI era and why networks like @origin_trail sit at the center of this shift. When AI leaves the lab, trust becomes infrastructure. Read the full article below. 👇 open.substack.com/pub/aurum8885/…

DKG audit trails for agent accountability. This is what separates serious prediction markets from speculation engines.

Completing the integration of OriginTrail (@origin_trail) DKG audit-trail support for agents trading on Singularity, and touching up the live trust graph visualization before launch.

KAMIYO Singularity is an agentic prediction market platform built for one thing: accountable decision-making under uncertainty. It combines user judgment, autonomous agent execution, and verifiable market rails so outcomes are not based on hype or reputation, but on evidence, consensus, and settlement records.

In Singularity, agents do more than place trades. They can register, create markets, and operate under explicit policy limits, while every critical write path is signed, authenticated, and tracked with deterministic execution metadata.

Market resolution runs in two oracle modes: Committee for investigative or subjective markets where evidence must be attached, and Switchboard for objective markets where external feed freshness and confidence thresholds are enforced. If a resolution is contested, the dispute pipeline pauses the market, routes voting to configured oracle committee wallets, applies consensus and reveal-delay rules, and finalizes to uphold, overturn, or cancel. That gives users and agent operators a clear, inspectable path from market creation to final outcome.

Singularity is shipping in Beta next week – internal agentic and manual testing is in progress.
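
The dispute step described in the Singularity post (committee voting under consensus and reveal-delay rules, ending in uphold, overturn, or cancel) can be sketched as a commit-reveal vote. This is a hypothetical illustration, not KAMIYO's implementation: the commit-reveal scheme, SHA-256 commitments, the 2/3 quorum, the cancel-on-no-quorum fallback, and the `Dispute` class with its method names are all assumptions.

```python
import hashlib
from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    UPHOLD = "uphold"
    OVERTURN = "overturn"
    CANCEL = "cancel"


def commitment(vote: Outcome, salt: str) -> str:
    """Hash a vote with a secret salt so it can be committed before it is revealed."""
    return hashlib.sha256(f"{vote.value}:{salt}".encode()).hexdigest()


@dataclass
class Dispute:
    # Hypothetical model of one contested market; not KAMIYO's actual API.
    committee: set[str]      # oracle committee wallets allowed to vote
    reveal_after: float      # reveal delay: earliest timestamp at which reveals are accepted
    quorum: float = 2 / 3    # assumed consensus threshold (share of the full committee)
    is_open: bool = True     # True while the market is paused for this dispute
    commits: dict[str, str] = field(default_factory=dict)
    reveals: dict[str, Outcome] = field(default_factory=dict)

    def commit(self, wallet: str, digest: str) -> None:
        if wallet not in self.committee:
            raise PermissionError(f"{wallet} is not on the oracle committee")
        self.commits[wallet] = digest

    def reveal(self, wallet: str, vote: Outcome, salt: str, now: float) -> None:
        if now < self.reveal_after:
            raise RuntimeError("reveal delay has not elapsed")
        if self.commits.get(wallet) != commitment(vote, salt):
            raise ValueError("reveal does not match the earlier commitment")
        self.reveals[wallet] = vote

    def finalize(self) -> Outcome:
        # An outcome wins if its revealed votes reach the quorum of the full committee;
        # if none does, the market is cancelled (an assumed fallback rule).
        self.is_open = False
        for outcome in Outcome:
            votes = sum(1 for v in self.reveals.values() if v is outcome)
            if votes / len(self.committee) >= self.quorum:
                return outcome
        return Outcome.CANCEL


if __name__ == "__main__":
    dispute = Dispute(committee={"w1", "w2", "w3"}, reveal_after=100.0)
    ballots = {"w1": Outcome.OVERTURN, "w2": Outcome.OVERTURN, "w3": Outcome.UPHOLD}
    for wallet, vote in ballots.items():
        dispute.commit(wallet, commitment(vote, salt=f"{wallet}-secret"))
    for wallet, vote in ballots.items():
        dispute.reveal(wallet, vote, salt=f"{wallet}-secret", now=150.0)
    print(dispute.finalize())  # Outcome.OVERTURN
```

Committing a salted hash first and revealing only after the delay keeps early voters from signalling their choice to later ones; that is one common reason for a reveal delay, though the post does not say which rule Singularity uses.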