Sherrill Stroschein 💙

109.6K posts

@sstroschein2

Reader in Politics, UCL. But these are my own views, tweeting in personal capacity. Retweeting not an endorsement. More Politics things in the butterfly place.

LLMs don't just edit your text. They flatten your meaning, erase your voice, and shift your conclusions toward a bland, neutral center that no human would have chosen.

People who relied heavily on AI reported their essays felt less creative and not in their voice, but they were equally satisfied with the final product. The tool makes you worse at expressing yourself, and you don't even notice.

The semantic analysis is worse. When researchers embedded essays in vector space, human writing sprawled across a diverse landscape. AI-assisted essays formed a tight little cluster in a region where no human writing had ever been. A new dialect, invented by machines, imposed on people who thought they were just getting grammar help.

Even when users asked for "minimal edits" or "just grammar," the AI rewrote their conclusions. An essay about self-driving cars that originally expressed skepticism? The LLM quietly removed the doubts and added enthusiasm. A personal anecdote? Deleted. First-person pronouns? Slashed by 60%. Statistical arguments and expert citations appeared where human voices used to be.

The emotional profile shifted too. LLMs increased both positive and negative sentiment, making text more emotionally charged while simultaneously making it more analytical and logical. A paradoxical combination designed not to reflect the writer but to appeal to the broadest possible reader.

At a major AI conference, 21% of peer reviews were AI-generated. Those reviews gave scores 10% higher and focused on completely different criteria: reproducibility and scalability instead of clarity and significance. The machine isn't just reviewing papers. It's changing what counts as good science.

LLMs are optimized for positive human feedback, not for preserving individual meaning. The result is a language that is broadly convincing but personally hollow. A linguistic monoculture, spreading through every institution, while users happily report they're satisfied with the results.
arxiv.org/pdf/2603.18161

BA.3.2 was first detected in South Africa 🇿🇦 in November 2024. Never reached dominance, never disappeared. 14 months after its emergence it accounts for 20% of all sequences. Unusual. #BA32

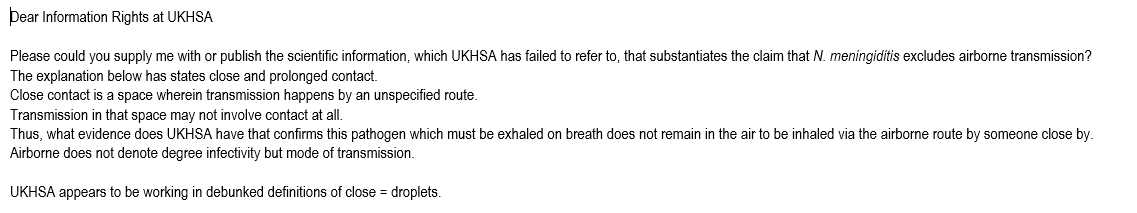

Seems a common misconception that if far field transmission isn't a major factor then the transmission mode is not airborne. Inhalation of aerosols at close range is still airborne transmission - just near field rather than far field. This is important to understand. 1/🧵

“Is there any scenario or situation where someone could produce a droplet without also generating significant amounts of aerosols?” Prof Clive Beggs (independent expert witness to the Covid Inquiry): “NO”.

How the Covid Disinformation Ecosystem was established. Network mapping the international network's development in 2020, with a particular focus on the UK. open.substack.com/pub/counterdis…

COVID INQUIRY: MODULE 3 REPORT “Fundamental flaws in the UK’s approach to IPC [infection prevention & control] guidance, for example in relation to the use of PPE, put patients and healthcare workers at risk.” — Baroness Hallett, Chair of the Covid Inquiry Read more here… ⬇️

Teaching staff being TUPEd over from @covcampus to a company, with significantly worse Ts&Cs for sick pay, annual leave, workload hours and working week, removed from the Teachers' Pension Scheme, no union recognition. @UKLabour using the VC as an advisor. Sign of things to come? @BBCCWR

Nobody wants to read AI-generated books.