Xingjian Zhang

37 posts

@_Jimmy_Zhang_

Ph.D. candidate @UMich. Intern @GoogleDeepMind. Incoming intern @AIatMeta. AI for science, LLM reasoning, and more.

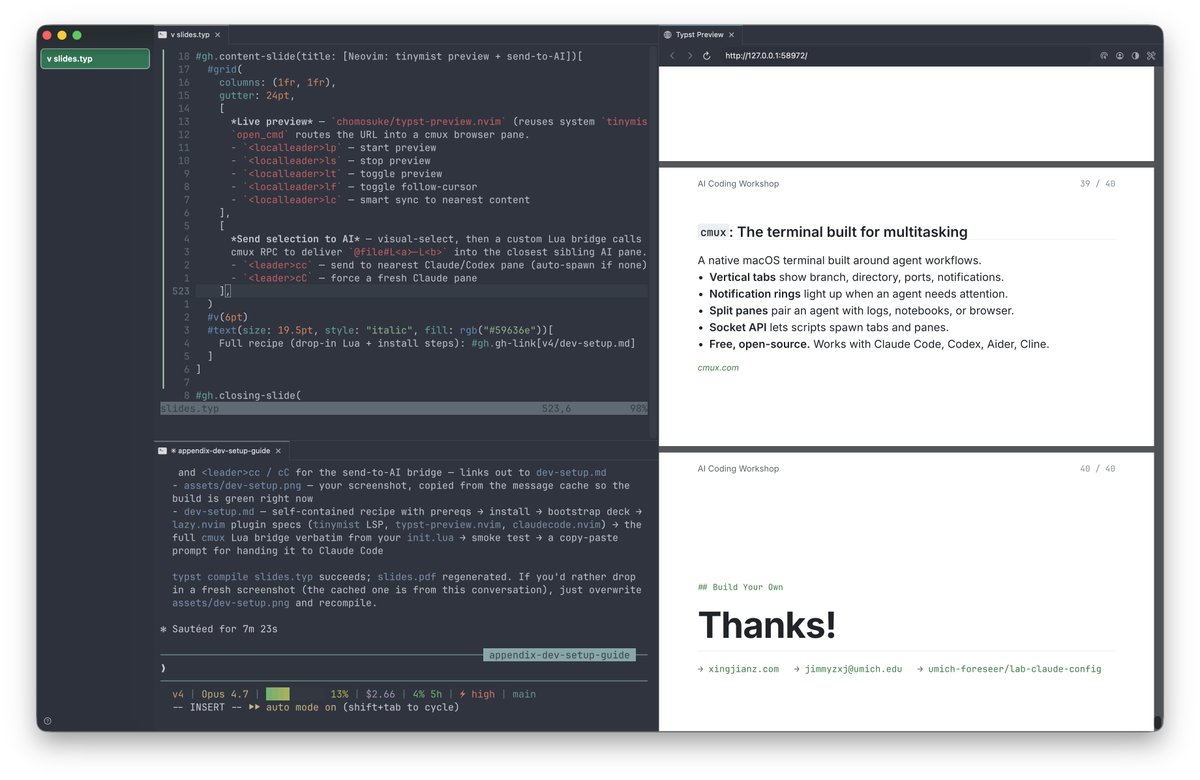

These are the best slides I've seen so far on Claude Code pro tips (esp. for AI researchers): xingjianz.com/assets/talks/a… By @_Jimmy_Zhang_

@karpathy Very inspiring as always! We are also open sourcing part of our infra on automated research for Gemini to evolve itself at github.com/google-deepmin… More complex than the nanochat setup but closer to SOTA LLM pre/post-training while staying as minimal as possible. More on the way.

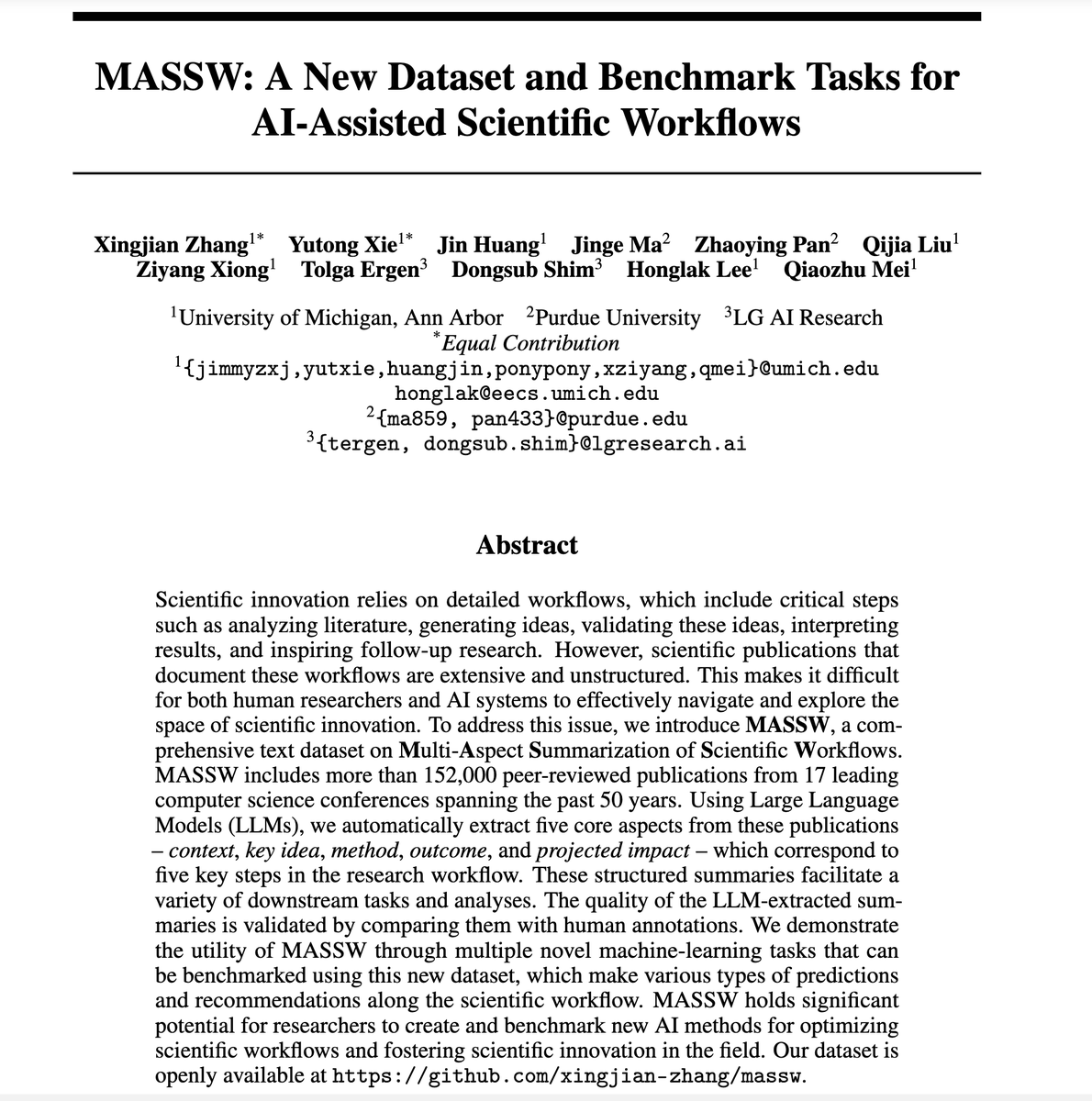

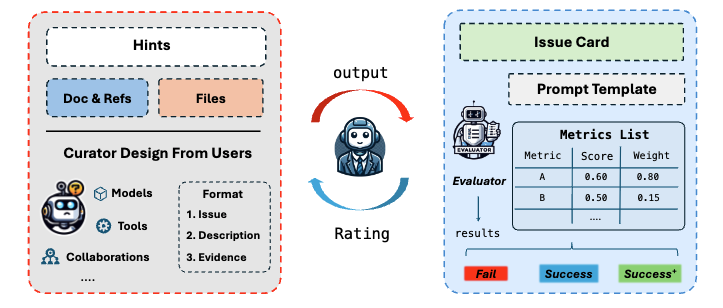

Excited to share our recent work on a #dataset & #benchmark for AI-assisted scientific workflows. Drawing on over 152,000 peer-reviewed CS papers, we use LLMs to provide structured insights from research workflows. 🧐 GitHub: github.com/xingjian-zhang… arXiv: arxiv.org/abs/2406.06357