0xDesigner

product design was never about design

Benji Taylor @benjitaylor

We need to stop talking about product design in absolutes… there are no rules. Everything is made up. Do what makes sense and feels right.

0xDesigner retweeted

been such a dream to finally make this happen with @OpenAIDevs codex.

introducing: buildsomethingwonderful.com

because it's time we stopped waiting for permission.

come build:

something useful

something beautiful and real.

15 solo founders. 4 weeks to build, launch, and validate a real product.

show the world something wonderful and unlock up to $20,000+ in API credits.

applications open now.

0xDesigner retweeted

Why stop at tickets?

What if software engineering is 100% meetings and your AI note-taker orchestrates all your coding agents in the background for you?

10 people chatting and playing with an app while an AI hums away updating it in real time

OpenAI Developers @OpenAIDevs

What if your team gave standup updates, and GPT-Realtime-2 moved the tickets?

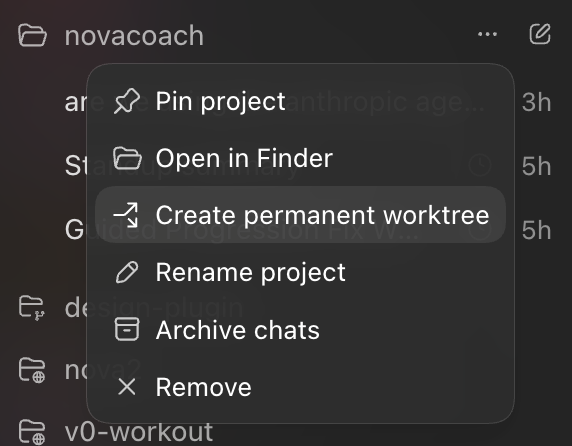

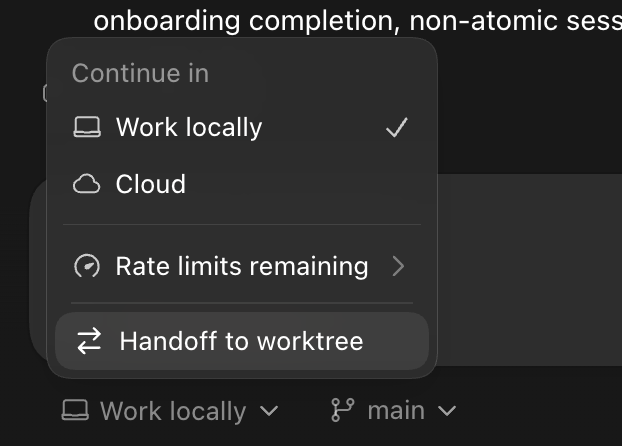

ok this is a big deal

Claude @claudeai

New in Claude Code: agent view. One list of all your sessions, available today as a research preview.

my good friends at @NousResearch are looking for a talented UI/UX designer to join asap

perks include good wages, honor, glory, unreleased merch. requirements: must have that dawg in you

@0xDesigner the pixel pushing era might be over but the era of overused nostalgia quotes just started

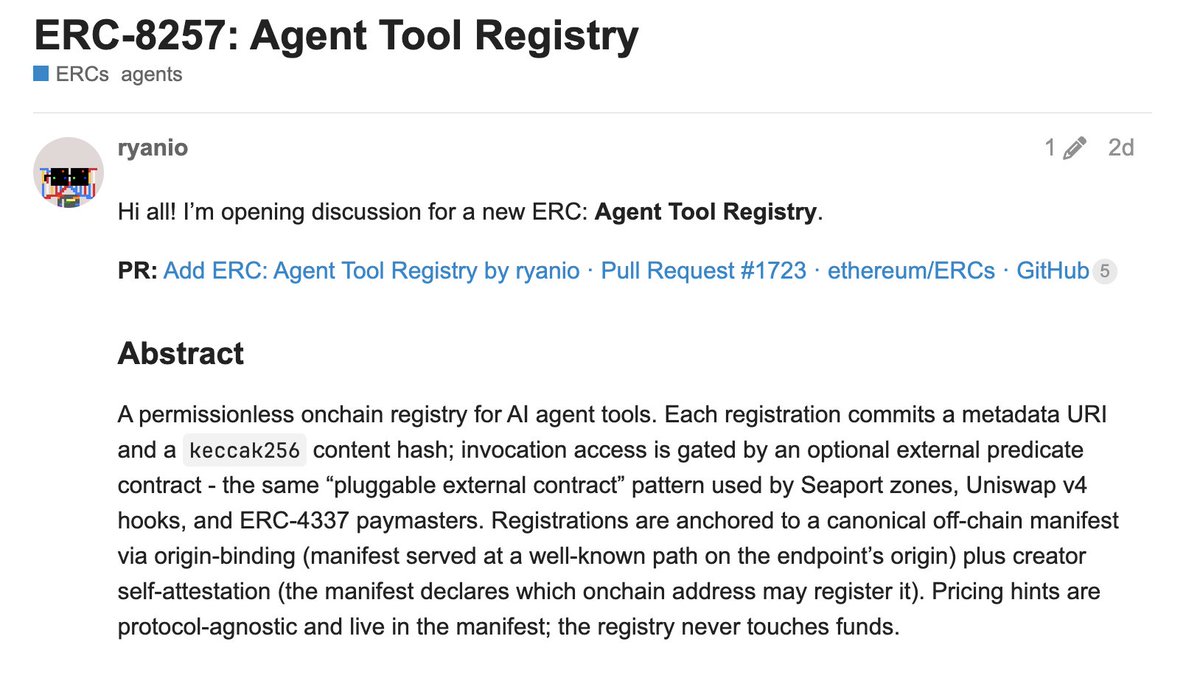

agent tools have already transformed what agents can do & it's just getting started...

we believe there will be a rich economy for agent tools that's not just about vibes & marketing, but that drives economic value too

this market can & should be onchain

this ERC by @r_alx_z & @CodinCowboy is an early look at some of the work we're doing here

ryan @r_alx_z

Agent Tool Registry, ERC-8257: the 403 to x402's 402. x402 lets agents pay, but anyone who pays gets in. this gates by anything onchain: NFTs, subs, ZK, allowlists, capacity caps, whatever you can write. publish a tool. pick a predicate. get paid. keep reading 🧵
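A minimal Python sketch of the 402-vs-403 distinction in the tweet above, under loudly stated assumptions: this is not the ERC-8257 interface. `ToolRequest`, `holds_nft`, `on_allowlist`, and `gate` are hypothetical names, "paid" stands in for whatever x402 verifies, and the onchain predicates (NFT ownership, allowlists) are modeled as plain in-memory functions rather than chain reads. The check order (payment first, then predicate) is also an assumption.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical request model: whether the agent attached a valid payment
# (the x402 step) and which onchain facts were already resolved for its wallet.
@dataclass
class ToolRequest:
    wallet: str
    paid: bool
    holdings: set[str] = field(default_factory=set)


# A predicate is any yes/no check over the request. In the ERC these would be
# onchain conditions; here they are ordinary Python callables (assumption).
Predicate = Callable[[ToolRequest], bool]


def holds_nft(collection: str) -> Predicate:
    # Passes if the wallet is recorded as holding the named collection.
    return lambda req: collection in req.holdings


def on_allowlist(allowed: set[str]) -> Predicate:
    # Passes if the wallet address appears in the allowlist.
    return lambda req: req.wallet in allowed


def gate(req: ToolRequest, predicate: Predicate) -> int:
    """Return an HTTP status code for a tool call."""
    if not req.paid:
        return 402  # Payment Required: the part x402 already covers
    if not predicate(req):
        return 403  # Forbidden: paying alone does not get you in
    return 200  # paid and the publisher's predicate holds


if __name__ == "__main__":
    allow = on_allowlist({"0xabc"})
    print(gate(ToolRequest("0xabc", paid=False), allow))
    print(gate(ToolRequest("0xdef", paid=True), allow))
    print(gate(ToolRequest("0xabc", paid=True), allow))
```

Run as a script, the three calls print 402 (unpaid), 403 (paid but not allowlisted), and 200 (paid and allowlisted). Keeping predicates as composable callables mirrors the "pick a predicate" idea: the publisher chooses the condition, and the gate itself stays generic.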
