1i


On-Premise Business AI Center

After my posts on the 2-GPU and 4-GPU builds, people reached out asking how to build an 8-GPU box for their businesses.

Why?
- Protect their IP
- Protect customer data
- Save on inference costs
- Train their own models

Here's how to build one: 🧵

@APompliano We need stacks of GPUs in every house, not really big stacks of GPUs controlled by companies who are trying to extract value from us.

Thought this was a joke: a 1M-line Bun commit rewriting Bun in Rust.

They learned. Bun is now officially on the good side of history.

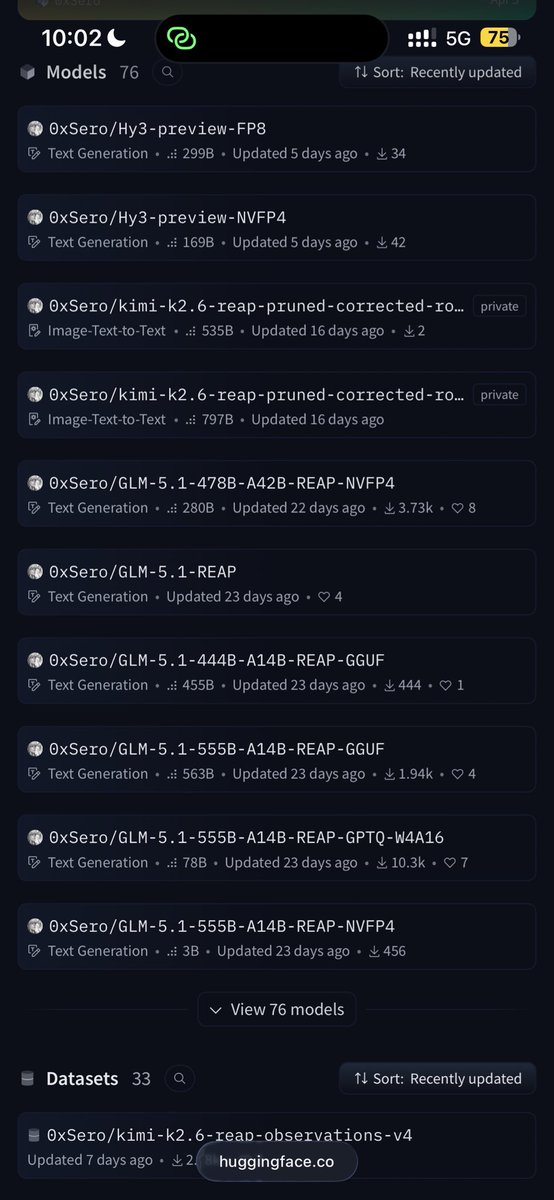

To give you a detailed, close number I'd have to dig into the architecture more and see how much memory the weights and the per-token KV cache take. So here's a general rule for approaching it, and you can refine it once you dig into the model specs.

The number of concurrent users depends heavily on how much memory is left for KV cache, so first compute how much memory goes to fixed allocations and how much remains for KV.

Say we're running Kimi at fp8. To make the math easy: 1T params x 1 byte (fp8) = 1 TB allocated for the weights. For int4 or nvfp4 it would be about 520 GB. The activation workspace needs something like 20-30 GB; this mostly depends on your max prefill batch: batch tokens x hidden dim x bytes per activation (fp8 or fp16) x number of intermediate tensors. Keep about 2% for CUDA overhead and another 4% as a safety margin, and you're left with something like 1.2 TB of memory for KV.

The example uses MHA. MLA is much, much more efficient, maybe less than 10% of this number. For MHA, KV per token = 2 x n_layers x num_heads x head_dim x bytes per element (fp8) ≈ 1 MB (for easy math). Note that num_heads x head_dim is just d_model for plain MHA.

Now you can get a sense of the number of concurrent users. With 1.2 TB left, a 128k context costs about 128 GB per user, so you can serve roughly 10 concurrent users; at 1M context you can serve a single user. With MLA the ratio is 10x, give or take, so about 100 users at 128k context and 10 users at 1M context.

Keep in mind this is the laziest calculation; I rounded everything for easy math. Depending on your SLAs you could serve way more than this, but maybe with slower TBT (time between tokens).
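A rough Python sketch of the same back-of-the-envelope math, in case it helps to plug in your own numbers. Everything here is an assumption for illustration: the function names are made up, the 2.4 TB total is a hypothetical box size (the post never states one), the 2% + 4% reserve is taken as a share of total memory, and the layer/head shapes are placeholder values chosen to land near 1 MB per token, not any real model's specs. The per-token formula uses the standard MHA form, 2 x layers x KV heads x head dim x bytes per element.

```python
GB = 10**9  # decimal gigabytes, to match the rounded math in the post


def kv_budget_bytes(total_bytes, weight_bytes, activation_bytes,
                    overhead_pct=2, safety_pct=4):
    """Memory left for KV cache after the fixed allocations.

    Assumption: the CUDA overhead and safety margin are percentages
    of total memory.
    """
    reserved = total_bytes * (overhead_pct + safety_pct) // 100
    return total_bytes - weight_bytes - activation_bytes - reserved


def kv_bytes_per_token_mha(n_layers, n_kv_heads, head_dim, bytes_per_elem):
    """Per-token KV size for plain MHA; the factor 2 covers keys and values."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem


def max_concurrent_users(kv_budget, kv_per_token, context_tokens):
    """Full-context users that fit in the KV budget (rounded down)."""
    return kv_budget // (kv_per_token * context_tokens)


# Hypothetical 2.4 TB box, 1 TB of fp8 weights, 30 GB activation workspace.
budget = kv_budget_bytes(2400 * GB, 1000 * GB, 30 * GB)
print(budget // GB, "GB left for KV")

# Placeholder shapes: 64 layers x 64 KV heads x 128 head dim at fp8 (1 byte).
print(kv_bytes_per_token_mha(64, 64, 128, 1), "bytes per token")

# The post's rounded inputs: 1.2 TB for KV, ~1 MB/token (MHA), ~0.1 MB/token (MLA).
for label, per_token in (("MHA", 10**6), ("MLA", 10**5)):
    for context in (128_000, 1_000_000):
        users = max_concurrent_users(1200 * GB, per_token, context)
        print(label, context, "tokens:", users, "users")
```

Because the sketch rounds down instead of rounding for easy math, it gives 9 and 1 users for MHA and 93 and 12 for MLA, close to the 10 / 1 / 100 / 10 quoted above.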

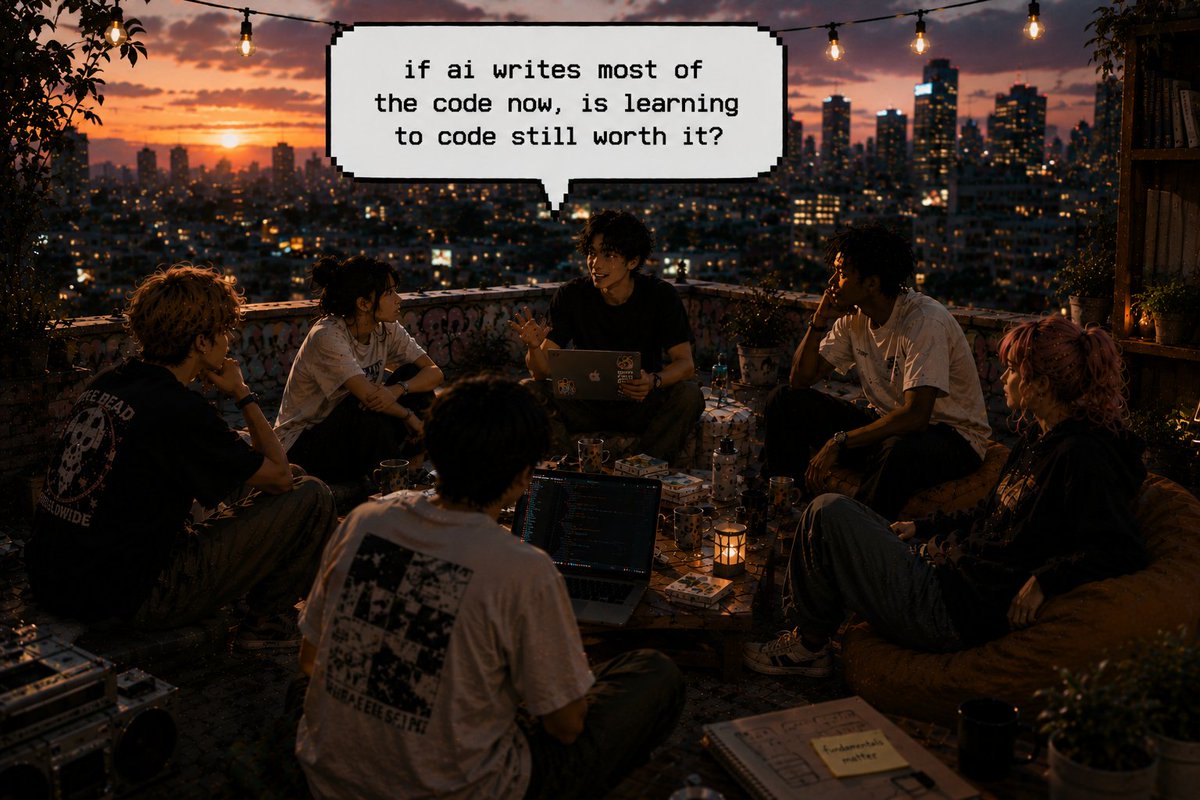

be honest: if AI writes most of the code now, is learning to code still worth it?

• yes, fundamentals still matter
• only enough to direct AI
• no, product thinking matters more
• I never really learned

curious how people actually think about this long term.

P.S. my real answer is hidden somewhere in the image, can you find it? 👀

vote or drop your take below. 👇