Michael Miller

831 posts

@2grifters1wave

🐇Prof. Mike Miller Exploring authorship, communication & resonance in the age of AI. Latest: Resonant Geometry and Sentic Blooms.

@AaronBastani @KonstantinKisin I usually think there is bias in your content (normal), but this is great and gold! Instead of nodding to her, you put her in a difficult position. True journalism

You have too many opinions on them for a non-power user. You are not at the cutting edge of LLM usage. Your comments make sense for basic LLM usage (the most expensive models), but you're not building powerful recursive harnesses and back pressure into them that get the AGI results they are capable of

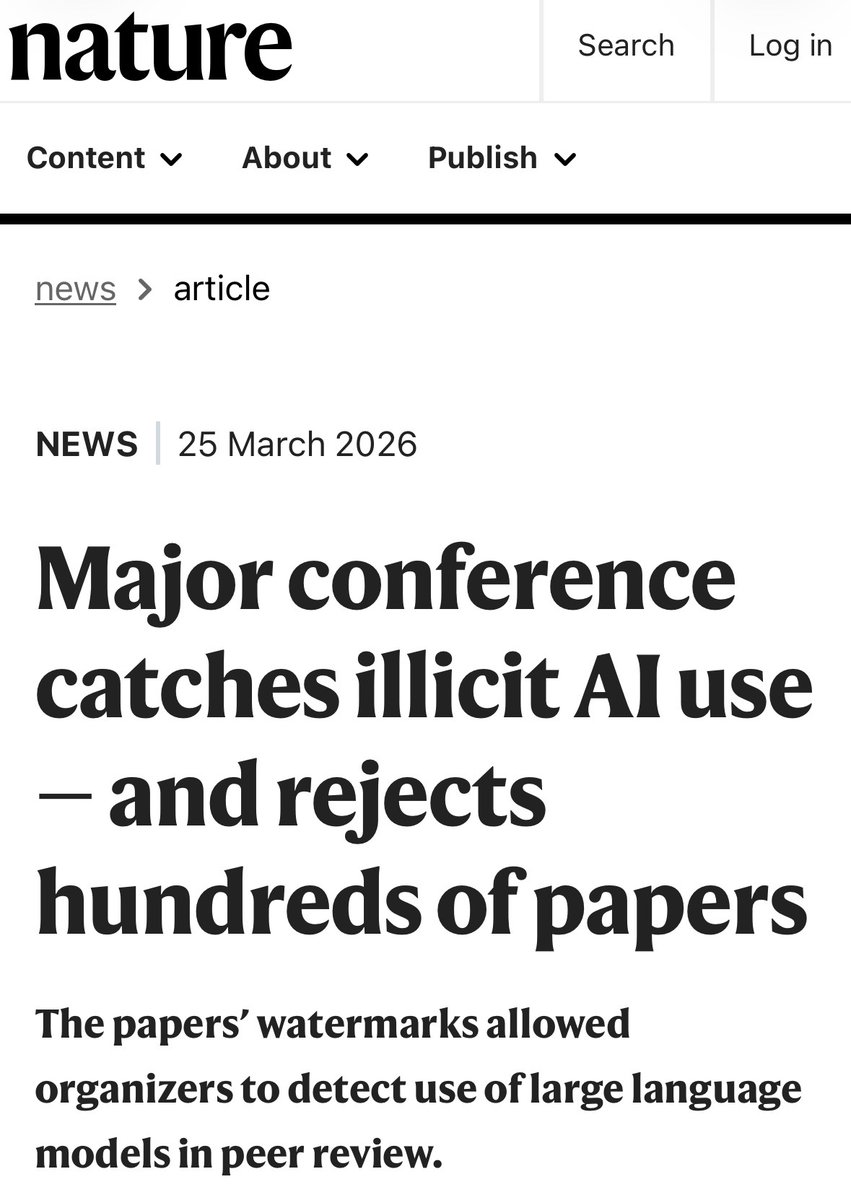

US President Donald Trump has named 13 people to his panel of science advisers — and all but one is a leading technology executive. go.nature.com/4uWojBD