2pmflow

1.3K posts

Security incident involving Vercel. Check for the following OAuth grant in your environment: http://110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent[.]com

This is the best argument I have heard against the moon landing hoax. I love this.

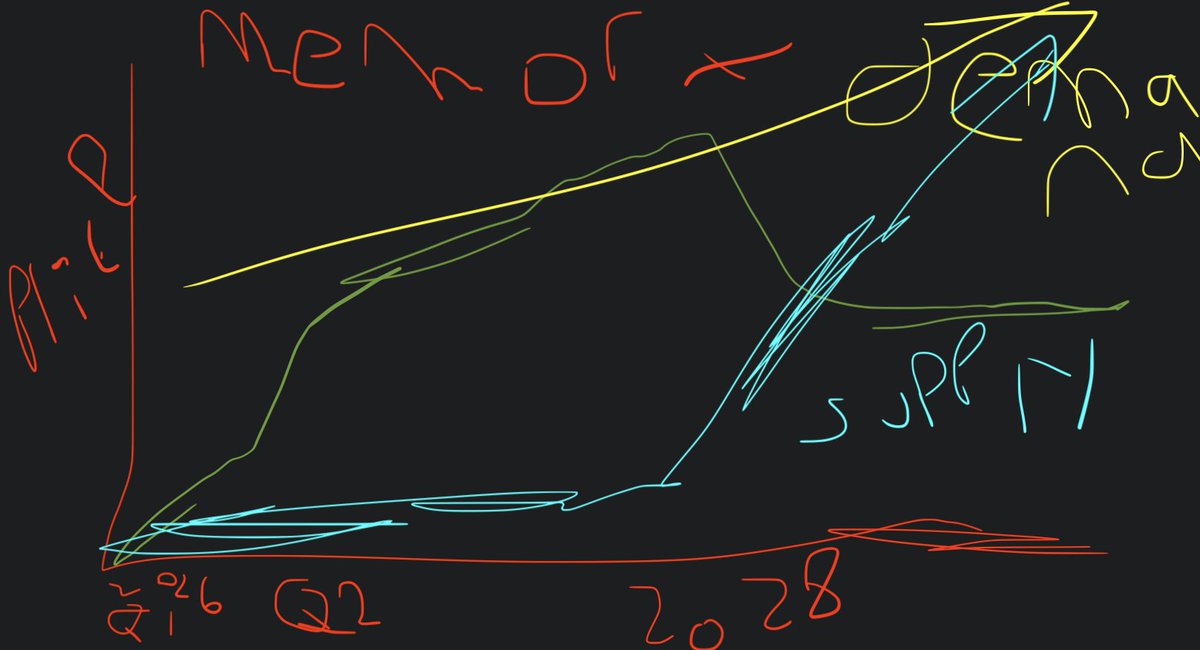

My art is good, the algorithm just ignores me

every engineer at anthropic has been using mythos for ~1.5 months. meanwhile, their uptime is horrendous, claude code still has rendering bugs, etc. one could conclude that it won't be the end of software engineering.

I hope this couple is still together bc this video really cracks me up every time I see it 😂😂😂 this is my type of carrying on

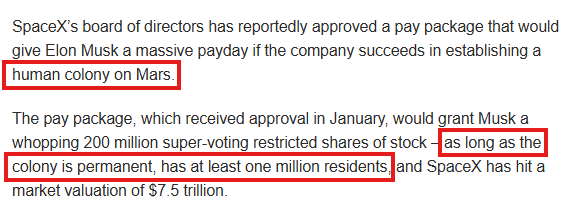

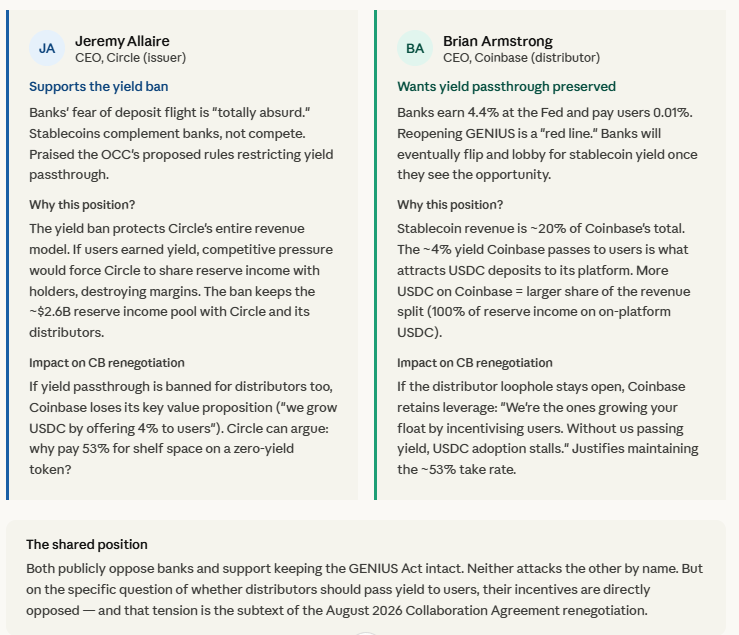

i think people are not gaming out the CLARITY scenario right. CRCL/COIN should do fantastically well