Jeroen Krebbers

@9eronimo

Lead Tech programmer @ Guerrilla also: @[email protected]

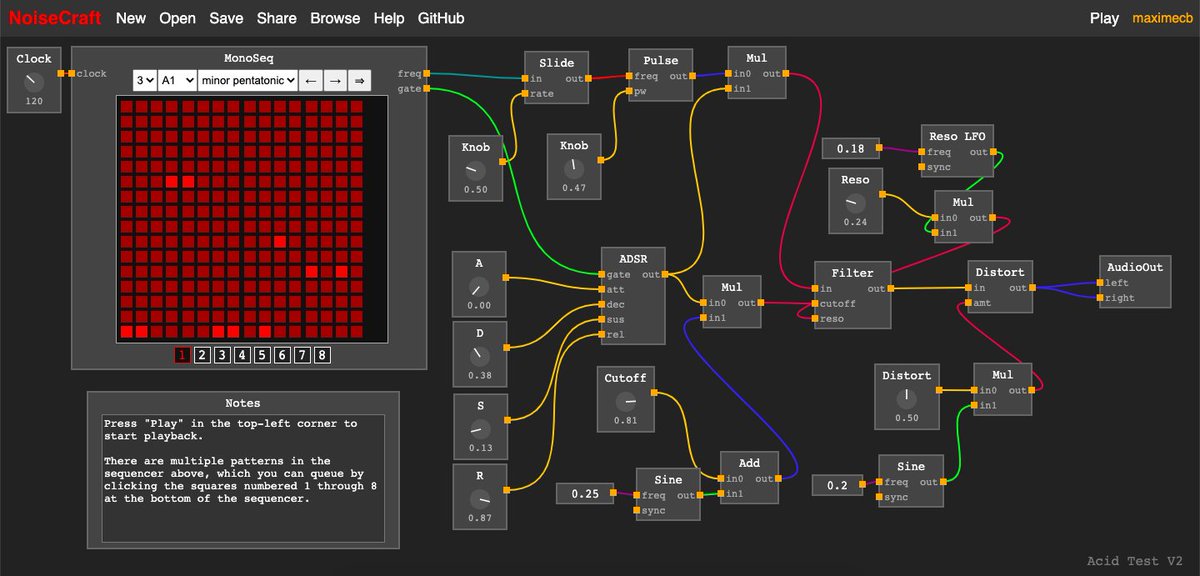

It’s not just “annoying”. Refactoring all of 2D rendering (lines and curves, analytic rasterized shapes, clipping, text rendering, real anti-aliasing, etc.) into a modern GPU pipeline (“here are triangle buffers and shaders, good luck. btw we got rid of immediate mode rendering, and don’t make too many draw calls”) requires a huge amount of convoluted jumping through hoops. Standard 3D-rendering double/triple buffering already bakes in the latency you are talking about. All of this makes it very hard to try anything new at the UI-framework level unless you are also a graphics expert. You need 3D rendering experts to do modern UI work, and none of them want to do it (at least in my experience, trying to hire for it).

The issues also include the fact that most desktop application frameworks are built on top of decades-old codebases, and applications are built by enormous teams who mostly work in high-level languages, and this is not going to change. To improve latency, the performance people have to go into those layers, look at how those programmers work, and figure out how to help them, not just sit back and complain that they should be more concerned with CPU cache misses.
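A rough back-of-the-envelope sketch of the buffering-latency point, under a loudly simplified assumption: each frame waiting in the presentation queue delays what reaches the screen by one full refresh interval (real swap chains, presentation modes, and compositors vary). Under that model the extra delay is queued frames × 1000 / refresh rate, in milliseconds. At 60 Hz one interval is about 16.7 ms, so a second queued frame already adds roughly 33 ms. The function name `buffering_latency_ms` is hypothetical.

```python
def buffering_latency_ms(queued_frames: int, refresh_hz: float) -> float:
    """Rough display latency added by frames waiting in the swap chain.

    Simplified model (an assumption, not a measurement): every frame queued
    ahead of the one being shown delays it by one full refresh interval.
    """
    if refresh_hz <= 0:
        raise ValueError("refresh_hz must be positive")
    if queued_frames < 0:
        raise ValueError("queued_frames must be non-negative")
    frame_time_ms = 1000.0 / refresh_hz  # one refresh interval in milliseconds
    return queued_frames * frame_time_ms


if __name__ == "__main__":
    # Compare one vs. two queued frames at a 60 Hz refresh rate.
    for frames in (1, 2):
        print(frames, round(buffering_latency_ms(frames, 60.0), 1))
```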

I will give a talk at #SIGGRAPH2024 about a new way of evaluating #Bezier #curves that I call Seiler's Interpolation, which uses half as many linear interpolations as #deCasteljau's algorithm. I built an interactive demo: cemyuksel.com/research/seile… See the extended abstract for details.
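To make “half the linear interpolations” concrete for the cubic case, here is a small Python sketch. `de_casteljau` is the standard algorithm, which for a cubic curve performs 3 + 2 + 1 = 6 lerps. `cubic_three_lerps` is not code from the talk or the extended abstract: it is derived here by expanding the cubic Bernstein form, and it shows that the same point can be reached with three lerps, the last one weighted by t(1 - t). The helper points `c1` and `c2` and all function names are hypothetical, and the formulation in the abstract may differ in notation or detail.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def lerp(a: Point, b: Point, s: float) -> Point:
    """Linear interpolation (1 - s) * a + s * b, component-wise."""
    return ((1.0 - s) * a[0] + s * b[0], (1.0 - s) * a[1] + s * b[1])


def de_casteljau(points: Sequence[Point], t: float) -> Tuple[Point, int]:
    """Evaluate a Bezier curve of any degree; also return the lerp count."""
    pts: List[Point] = list(points)
    lerps = 0
    while len(pts) > 1:
        # Each pass replaces n points with n - 1 interpolated points.
        pts = [lerp(pts[i], pts[i + 1], t) for i in range(len(pts) - 1)]
        lerps += len(pts)
    return pts[0], lerps


def cubic_three_lerps(p0: Point, p1: Point, p2: Point, p3: Point,
                      t: float) -> Tuple[Point, int]:
    """Evaluate a cubic Bezier curve with three lerps (derived sketch).

    Expanding the Bernstein form gives
        B(t) = lerp(lerp(p0, p3, t), lerp(c1, c2, t), t * (1 - t))
    with c1 = 3*p1 - p0 - p3 and c2 = 3*p2 - p0 - p3.
    """
    # c1 and c2 depend only on the control points (per-curve constants).
    c1 = (3 * p1[0] - p0[0] - p3[0], 3 * p1[1] - p0[1] - p3[1])
    c2 = (3 * p2[0] - p0[0] - p3[0], 3 * p2[1] - p0[1] - p3[1])
    outer = lerp(p0, p3, t)         # lerp 1: chord between the end points
    inner = lerp(c1, c2, t)         # lerp 2: between the helper points
    return lerp(outer, inner, t * (1.0 - t)), 3  # lerp 3: blend by t(1 - t)


if __name__ == "__main__":
    ctrl = [(0.0, 0.0), (1.0, 2.0), (3.0, 3.0), (4.0, 0.0)]
    for t in (0.0, 0.25, 0.5, 1.0):
        a, n_a = de_casteljau(ctrl, t)
        b, n_b = cubic_three_lerps(*ctrl, t)
        print(t, a, n_a, b, n_b)
```

Since `c1` and `c2` depend only on the control points, a renderer sampling many values of t on the same curve could compute them once per curve, leaving three lerps per sample instead of six.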