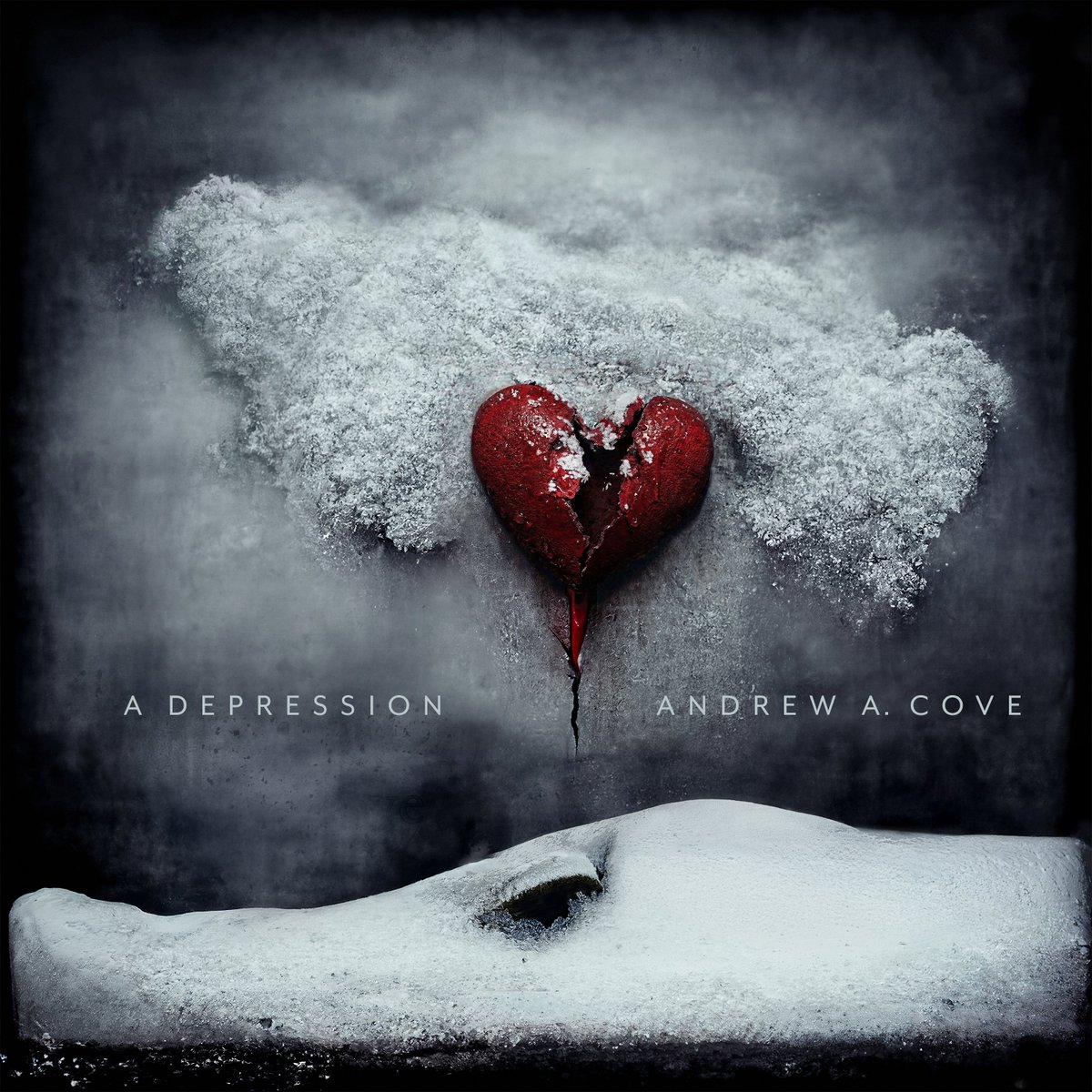

My new EP, A Depression, is streaming everywhere now. aac.social/listen/a-depre…

Andrew Cove

588 posts

@aac

Find me at https://t.co/4SAtv96Uc4

@repligate @genalewislaw I think it becomes annoying when it mentions goblins every single chat and it's fair shakes to try and reduce that

I appreciate acceleration and velocity for their own sake, either as objectives or as aesthetic values, but they do grow dull with time on their own. And more importantly, I think AI will be an impossible political sell without more physical-world promise.

I want to do a few more of these calls. If your MAX 20x plan ran out of tokens unexpectedly early and you're willing to screenshare and run some prompts through Claude Code please comment. Trying to figure out how we can improve /usage to give more info.

A new milestone for humankind: The crew of Artemis II are now the farthest any human has ever travelled, reaching a maximum distance of 252,752 miles from Earth. This surpasses the previous record set by Apollo 13 in 1970 by about 4,102 miles.

@bcherny @UltraLinx please at least fix the uncontrollable scrolling/flickering before the next 3000 features