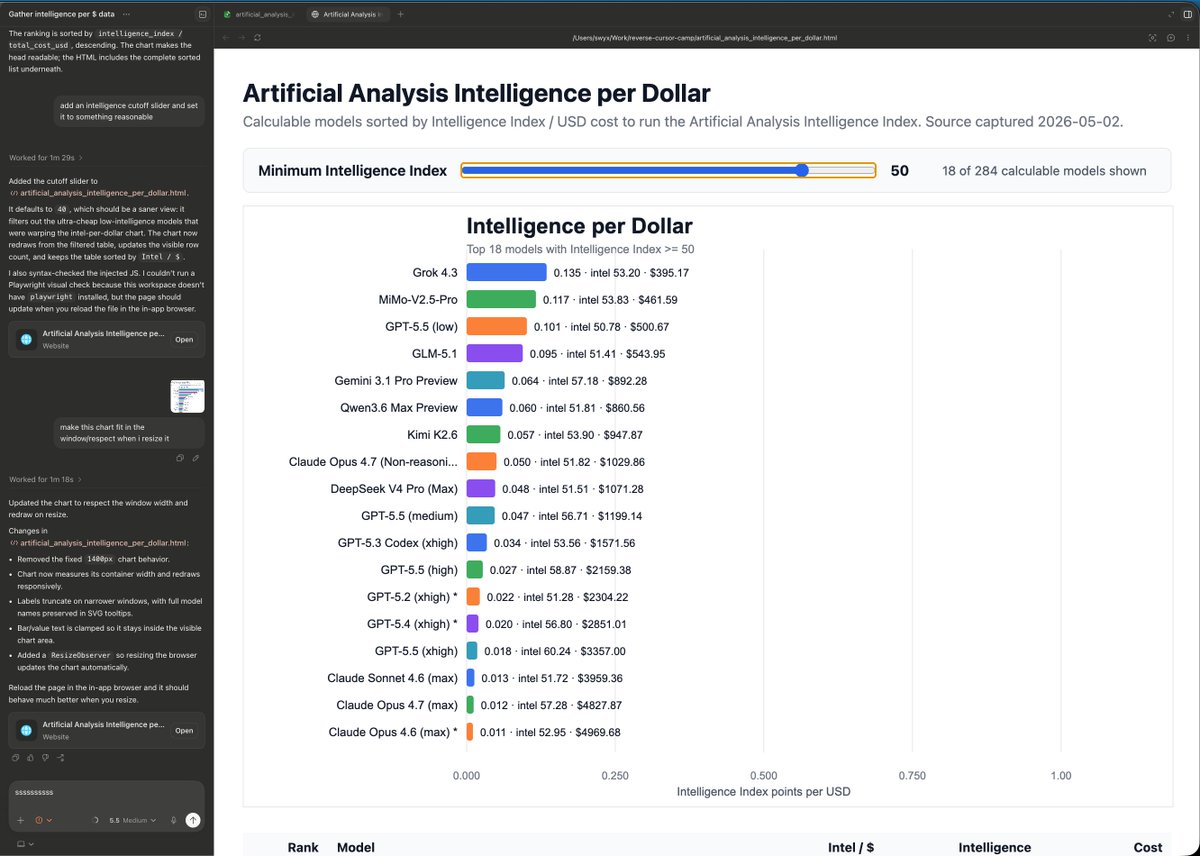

Brian Roemmele@BrianRoemmele

Anthropic’s Prompting 101 Workshop: What They Got Right, What They Missed

The Applied AI team at Anthropic put out a 24-minute video on how to prompt. They work through a practical scenario: analyzing Swedish car accident report forms and sketches for insurance claims.

They show how a bare-bones prompt leads Claude to hallucinate a “skiing accident” from a street name that sounds familiar, then iteratively build the prompt up into something reliable.

That’s valuable. They demonstrate the gap between casual use and structured prompting. But let’s cut through the hype in my direct style: this isn’t some revolutionary secret from “the people who wrote the weights.”

It’s foundational context engineering that power users and builders have been iterating on since the early days of these models. The workshop proves the point, but it doesn’t go far enough for real compounding advantage in 2026.

The Major Points They Brought Up (And Why They Matter)

The core demo starts simple: feed Claude an image of a standardized accident form (17 checkboxes, Vehicle A/B details) plus a hand-drawn sketch. Without guidance, it confidently invents details. Then they layer in structure:

•Task Context & Role: Tell Claude upfront who it is (e.g., assisting a human claims adjuster reviewing Swedish car forms) and the high-level goal.

•Tone & Style: Instruct it to be factual, confident where possible, but honest about uncertainty—“don’t guess, say I don’t know.”

•Background Data: Embed the unchanging form schema (what those 17 checkboxes mean) into the system prompt. This is gold for consistency since the structure never changes.

•Detailed Instructions & Step-by-Step: Break down reasoning—analyze the form, cross-reference the sketch, determine fault only with high confidence.

•Examples (Few-Shot): Show what good input/output looks like.

•Output Formatting & Anti-Hallucination: Use XML tags for structured responses, repeat critical rules, force quotes from source material, and remind it to think before answering.
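To make the layering concrete, here is a minimal sketch of how those elements could be assembled into one system prompt. The function name, the tag names (`<form_schema>`, `<examples>`, `<final_verdict>`), the instruction wording, and the placeholder schema are all my assumptions for illustration; the workshop's actual prompt text is not reproduced here.

```python
from __future__ import annotations

# Placeholder schema: the real meanings of the 17 checkboxes come from the form itself.
FORM_SCHEMA = "\n".join(
    f"Checkbox {i}: <meaning of checkbox {i}>" for i in range(1, 18)
)


def build_system_prompt(form_schema: str, examples: list[tuple[str, str]]) -> str:
    """Assemble role, tone, background data, steps, examples, and format rules."""
    example_block = "\n".join(
        f"<example>\n<input>{inp}</input>\n<output>{out}</output>\n</example>"
        for inp, out in examples
    )
    sections = [
        # Task context and role
        "You are assisting a human claims adjuster who reviews Swedish car "
        "accident report forms and hand-drawn sketches.",
        # Tone and style
        "Be factual. If something cannot be determined from the form or the "
        "sketch, say you do not know instead of guessing.",
        # Background data that never changes between requests
        f"<form_schema>\n{form_schema}\n</form_schema>",
        # Step-by-step instructions
        "Steps:\n"
        "1. Analyze the form first.\n"
        "2. Cross-reference the sketch against the form.\n"
        "3. Determine fault only if you have high confidence.",
        # Few-shot examples
        f"<examples>\n{example_block}\n</examples>",
        # Output formatting and anti-hallucination reminders
        "Think before answering. Quote the source material that supports each "
        "claim. Put your conclusion inside <final_verdict> tags.",
    ]
    return "\n\n".join(sections)


prompt = build_system_prompt(
    FORM_SCHEMA,
    examples=[("<form and sketch description>", "<adjuster-approved analysis>")],
)
print(prompt)
```

The static parts (role, schema, steps) live in one place, so only the examples and the per-claim input change between runs.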

They emphasize iteration: prompting is empirical. Test, observe failures (like the skiing mix-up), refine. Most users stop at 1-2 of these elements. Adding the rest dramatically improves reliability for tasks like this.
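The test, observe, refine loop can be partly automated. Below is a rough sketch of a regression check that flags known failure terms in saved outputs. The function name, the case IDs, and the sample output strings are invented for illustration; they are not real model transcripts.

```python
from __future__ import annotations


def find_regressions(
    outputs: dict[str, str], banned_terms: dict[str, list[str]]
) -> dict[str, list[str]]:
    """Return, per test case, the banned terms that appear in its output."""
    failures: dict[str, list[str]] = {}
    for case_id, text in outputs.items():
        lowered = text.lower()
        hits = [t for t in banned_terms.get(case_id, []) if t.lower() in lowered]
        if hits:
            failures[case_id] = hits
    return failures


# Made-up outputs standing in for saved responses from two prompt versions.
sample_outputs = {
    "bare_prompt": "This looks like a skiing accident on a familiar street.",
    "structured_prompt": "I cannot determine fault from the form alone.",
}
# Terms that signal a failure already observed once and never wanted again.
known_failures = {
    "bare_prompt": ["skiing"],
    "structured_prompt": ["skiing"],
}

print(find_regressions(sample_outputs, known_failures))
```

Every failure you observe becomes a term or case in the check, so a later prompt change that reintroduces it gets caught before it ships.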

Where I Disagree: This Is Table Stakes, Not the Full Game

Here’s the pushback: framing this as “most people have no idea how to prompt Claude” sells urgency but undersells how far the field has moved. The workshop is from mid-2025: solid basics, but AI moves fast.

By now, relying solely on manual prompting, even well-structured prompting, leaves a massive edge on the table.

My core disagreement: Prompting isn’t the bottleneck anymore; systems, memory, and agentic workflows are. A “Claude Skill” that injects these elements is better than nothing, but it’s still human-in-the-loop prompting theater if you’re not building persistent context, tool use, evaluation loops, and multi-step agents.

Better advice from someone who’s been deep in this since the beginning:

1. Stop Prompting in Isolation: Treat every interaction as part of a larger system. Use Projects in Claude for long-term memory. Embed domain knowledge once (like full form schemas, your personal style guides, past outputs) rather than repeating it.

2. Go Beyond Static Structure: The workshop’s XML and repetition help, but chain-of-thought with self-critique, tool calling for verification (e.g., cross-check facts externally if possible), and evaluation prompts (“Rate your confidence 1-10 and explain gaps”) compound faster. For the accident example, an agent that pulls real traffic data or Swedish regulations would outperform any single prompt.
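As one way to wire an evaluation prompt into a workflow, here is a minimal sketch that parses a self-reported confidence score and routes weak answers to a human. The `<confidence>` tag, the function names, and the threshold of 8 are my assumptions, not anything from the workshop, and a self-reported score is a rough signal rather than a calibrated probability.

```python
import re
from typing import Optional

# Follow-up prompt sent after the model's first answer (wording is an assumption).
CONFIDENCE_PROMPT = (
    "Rate your confidence 1-10 and explain gaps. "
    "Put the number inside <confidence> tags."
)


def parse_confidence(reply: str) -> Optional[int]:
    """Extract a 1-10 score from <confidence> tags, or None if absent or invalid."""
    match = re.search(r"<confidence>\s*(\d{1,2})\s*</confidence>", reply)
    if match is None:
        return None
    score = int(match.group(1))
    return score if 1 <= score <= 10 else None


def needs_human_review(reply: str, threshold: int = 8) -> bool:
    """Escalate when the score is missing, malformed, or below the threshold."""
    score = parse_confidence(reply)
    return score is None or score < threshold


# Made-up replies, for illustration only.
print(needs_human_review("<confidence>9</confidence> The form is unambiguous."))
print(needs_human_review("<confidence>4</confidence> The sketch is unclear."))
```

Treating a missing or malformed score as a reason to escalate keeps the gate conservative: the pipeline only proceeds on an explicit, in-range, high score.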
