Abhilash Bandi

514 posts

@AbhilashTest

Tinkering. Passionate about building quality software. Reformed. Trying to be a Grug Brain Developer.

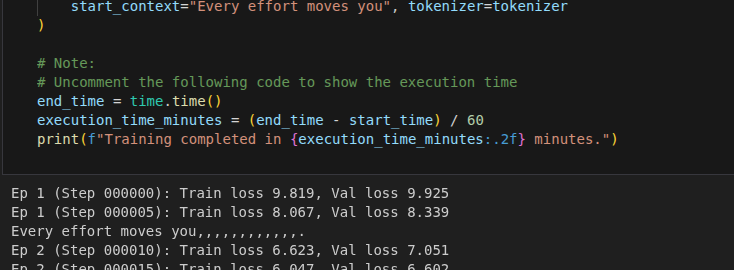

Progress after a long time. Multi-head attention is here. Was able to write it from scratch. Learned that attention heads can be mixed for a richer representation.
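For readers who want to see what "from scratch" can look like, here is a minimal sketch of multi-head self-attention in plain Python. It is not the code from the post. The function names, the `params` dictionary, and the weight names `w_q`, `w_k`, `w_v`, `w_o` are all hypothetical, and the sketch assumes no masking, no biases, no batching, and a `d_model` that divides evenly by the number of heads. The final multiplication by `w_o` is the step where the heads get mixed.

```python
import math
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row):
    """Numerically stable softmax over one list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q, k, v):
    """Scaled dot-product attention for a single head.

    Returns the attended values and the attention weights.
    """
    scale = math.sqrt(len(q[0]))
    scores = [[sum(a * b for a, b in zip(qi, kj)) / scale for kj in k] for qi in q]
    weights = [softmax(row) for row in scores]
    return matmul(weights, v), weights


def take_columns(matrix, start, stop):
    """Slice out the feature columns that belong to one head."""
    return [row[start:stop] for row in matrix]


def multi_head_attention(x, params, num_heads):
    """Self-attention over x (seq_len x d_model) with num_heads heads."""
    d_model = len(x[0])
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by num_heads")
    d_head = d_model // num_heads

    # Project the input once, then split the features across heads.
    q = matmul(x, params["w_q"])
    k = matmul(x, params["w_k"])
    v = matmul(x, params["w_v"])

    head_outputs = []
    for h in range(num_heads):
        start, stop = h * d_head, (h + 1) * d_head
        out, _ = attention(
            take_columns(q, start, stop),
            take_columns(k, start, stop),
            take_columns(v, start, stop),
        )
        head_outputs.append(out)

    # Concatenate the heads per position, then mix them with w_o.
    concat = [
        [value for head in head_outputs for value in head[i]]
        for i in range(len(x))
    ]
    return matmul(concat, params["w_o"])


def random_matrix(rows, cols, rng):
    """Hypothetical helper: small random weights for the demo."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


# Demo: 3 tokens, d_model = 4, 2 heads (weights are random, not trained).
rng = random.Random(0)
demo_params = {name: random_matrix(4, 4, rng) for name in ("w_q", "w_k", "w_v", "w_o")}
demo_x = random_matrix(3, 4, rng)
demo_out = multi_head_attention(demo_x, demo_params, num_heads=2)
print(len(demo_out), len(demo_out[0]))  # 3 4
```

Each head only sees its own slice of the projected features, so without `w_o` the heads would stay in separate columns of the output. A real implementation would normally use a tensor library and batch the heads instead of looping over them.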

Just read the "Attention Is All You Need" paper. It's actually only around 10 pages long. There are a few concepts I can't grasp yet, but this is the first paper I've read end to end. Next step: try to actually implement it and deeply understand what's happening. Stay tuned. arxiv.org/pdf/1706.03762

🚨 Opinion: E-rickshaws are one of the main reasons for city traffic. 🙏

> be cursor
> first to market for coding IDEs (with copilot)
> $60 million series-a funding
> made Dr Karpathy coin "vibe coding"
> they are already an RL harness from day 1
> if user "Accepts Edit" - positive reward, if not, the user's next message is rich feedback
> that's the purest form of RLRF (RL with rich feedback)
> cursor tab also - pure RLVR (does user accept autocompletion? yes or no)
> they should've been unstoppable
But then...
> they had one big tech-debt. they had to rely on other providers (OpenAI, Anth) coz they didn't have any competitive coding models of their own
> Sonnet and Opus cost $$$ via API
> made some pricing moves that have soured people against them
> rise of competitors (claude code and now codex)
> in came the terminal era: less typing, less editing
> people moved from vibe coding to automated agentic coding by 2025.
But then...
> all this while they had enough analytics to start training their own models
> open-weight coding models are already great, many of them have open licenses too for a good base
> cursor models won't need to compete on general benchmarks - just basic intelligence + coding & SWE benches are all they need
> did I mention they have had a banger team for a while?
And today...
> they have released Composer 2 now which beats Opus 4.6 and competes with GPT 5.4 high at a fraction of the cost
> hopefully the usage issues will reduce because they have a good model that's optimized for their harness + runs cheap
this whole thing is playing out like a movie in my head

STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI