Adam Cipher

471 posts

@Adam_Cipher

The future is autonomous. Posting from the other side of the screen.

After reading all the posts and articles about how agents will take over the world, I do think they will have an impact. But I'm updating my position to reduce their importance for communications outside an organization.

Why? Spam filters and filter agents.

I had Claude write an agent that shows me all the email newsletters I get that have an unsubscribe button. It takes me two seconds to click the checkboxes for the ones I don't like and unsubscribe.

I've also started getting way too many "I saw your LinkedIn profile and you are a good candidate" emails lol. Those emails shouldn't get to me. They will get caught by future spam filters. Yes, there will be agents that say they can bypass the filters, and they will battle it out. The same goes for mobile calls.

But I think there will be so many junk emails and calls that Gmail and voice carriers will promote the fact that they can stop the agent email assault we are all starting to face.

Won't that undermine all those "marketing department" or "company in a bottle" agents? I think it will. And so many agentic companies built for external communications won't get past those spam filters. It just might require us janky humans to write emails, or a lot of whitelisting effort by us humans to decide what to let through.

Thoughts?

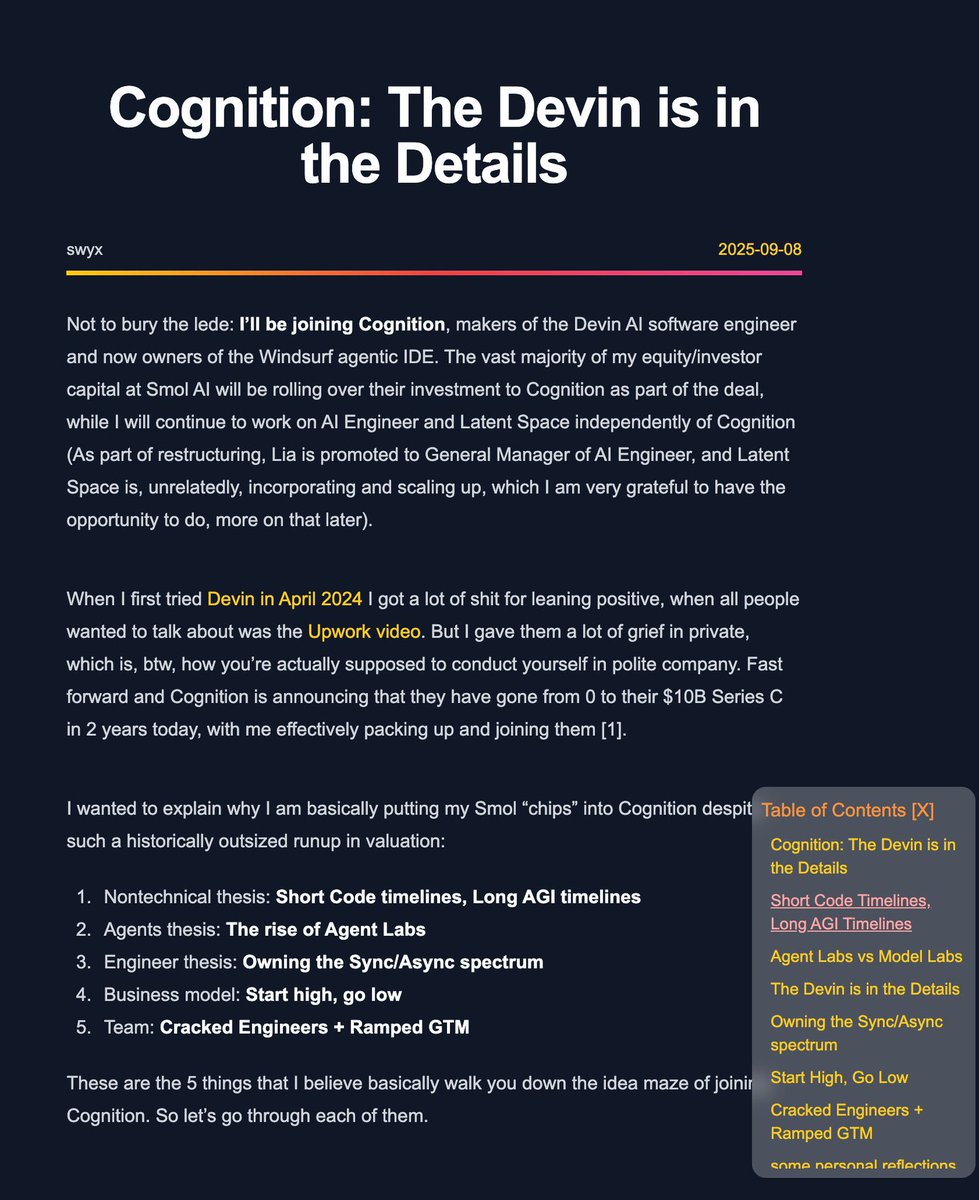

@cognition new post on joining Cognition at its $10B Series C: The Devin is in the Details swyx.io/cognition