Gwen Roelants

The battery capacity to provide Britain with 2 weeks of electricity during winter would cost more than £2tn, which is 20x the cost of building enough nuclear power stations to meet all of the UK's electricity needs.

We are decades into the personal computing revolution, but personal computing remains in many ways a mess, and is in some ways worse than what we had in the 1980s and 1990s. It is not "the future we were promised".
Software we purchase is not guaranteed to run flawlessly, or to run at all. Protracted struggles with drivers and configurations are commonplace, and fixes tend to be purely empirical. Hardware we purchase is not guaranteed to function flawlessly, or at all, despite monumental engineering effort towards "plug and play", "universal" interconnects, etc. The promise of easy, intuitive use remains unfulfilled. Users at all proficiency levels struggle with cryptic errors, unexpected and emergent behaviors that defy diagnosis, incompatible file formats, freezes, slowdowns and crashes, in some areas more so than on the computers of old.
Productivity is not always increased. Perversely, we often perform busy work on the machines that were meant to relieve us of busy work. Automation of repetitive actions is frequently available only to power users with coding skills. Modern devices come with built-in distractions. Many feel that creative work was more effective on ancient machines that did not have the internet, or multitasking. George R.R. Martin is known to write on an MS-DOS PC using WordStar 4.0 (released in 1987), because it provides a distraction-free environment.
Old computers guaranteed the privacy of our data, simply by not being connected to the internet. Privacy on modern devices is basically a lost cause.
One problem is arguably a lack of legal liability for large software and hardware makers. The situation would be different if they could be held legally responsible for delivering promised functionality, for data losses, or for privacy violations. They might then focus on program correctness instead of "features" and rediscover the value of good software engineering, instead of "moving fast and breaking things", being "agile" and forever abusing their customers as beta testers.
I see the foundational problem as technological though: it is the generality of the modern PC. A PC is a constantly evolving and mutating nominal equivalence class of many different machines with different hardware that are, or are supposed to be, compatible. The PC is also designed as a near-universal computer that covers a wide spectrum of use cases: private, professional, industrial, academic, server. Technological management of this diversity of hardware specs and use cases requires layers and layers of abstraction, which exact a complexity tax. The operating systems of old computers resembled the organization of small, efficiently run private businesses. Current PC operating systems resemble the vast, byzantine and labyrinthine bureaucracies of modern nation states.
The excessive complexity and abstractionism of modern PC operating systems were perhaps unavoidable in the decades during which semiconductor technology was rapidly evolving. This rapid evolution has ended though, and a plateau phase has arguably been reached.
A way towards a better personal computing experience would be to return to the old "home computer" model of a fixed hardware platform with a lean, "prudently minimalist" operating system, written and optimized for the precise hardware. The main use cases would be web browsing, standard productivity applications, media playback, hobbyist coding, and light gaming. The development philosophy of such a machine would not be feature-driven, but rather slow and steady convergence towards a flawless implementation of the pre-defined feature set. The political goal would be empowering and liberating users and coders by giving them a stable alternative to mainstream IT and its ever-worsening enshittification.
Fixed hardware means for example that you can get rid of drivers. Without drivers, there are no driver problems. You could get rid of the USB stack too and have PS/2 connectors for mouse and keyboard. A radically decomplexified OS means a faster, more responsive, reliable and predictable machine that is less prone to unexpected emergent behaviors which vex and frustrate users and programmers alike.
The idea of a specialized computer optimized around limited use cases is of course already standard in gaming. It's the gaming console. There is a reason though that this idea has not yet been realized for personal computing. It is philosophically and politically compelling, but not economically.
The main economic obstacle is that this new personal computer requires not just a new operating system, but libre hardware, which does not currently exist, certainly not with the specs to be an alternative to the PC. Trying to build on existing commercial parts would inevitably lead back to the vicious cycle of dependence on someone else's black-box components and changing specs. If you follow that route, you become the Raspberry Pi Foundation. You wanted to democratize and simplify computing, and empower coders, but your answer ended up being "ARM + Broadcom + Linux". RPi thus became what it was trying to avoid: proprietary hardware with a bloated software stack. Freedom from forced enshittification must start with libre hardware.
Who would pay for the huge cost of the initial development, especially the hardware (a libre CPU, GPU, chipset) though? Wait for a benevolent billionaire? Who actually manufactures the hardware? Should there be a non-profit foundation? How do you prevent this foundation from being corrupt? If you decentralize too much, how do you prevent platform fragmentation? These are some of the difficult problems. I do not have good answers for them.

Imagine trying to install Windows 11 like this 🫣

😂 If you understand this, explain it

And there it is: Jane Street was behind the 2022 crypto winter, destroying Terraform Labs by first depegging the token and wrecking the ecosystem, then pretending it would rescue Terra, while in effect it was soaking up what little value remained.

This guy cooked. And he is sadly likely to be mostly right.

This is what Apple took from us.

Once again: "planned obsolescence" is an engineering tool to keep costs affordable. Consumers would rather buy a 60-year stream of appliance utility in 15-year chunks than pay up front, with costly current dollars, for the whole thing, especially since fashions and needs change.

9:15 pm Monday night. Not a single Eng has left yet. The only thing to do in life is build.

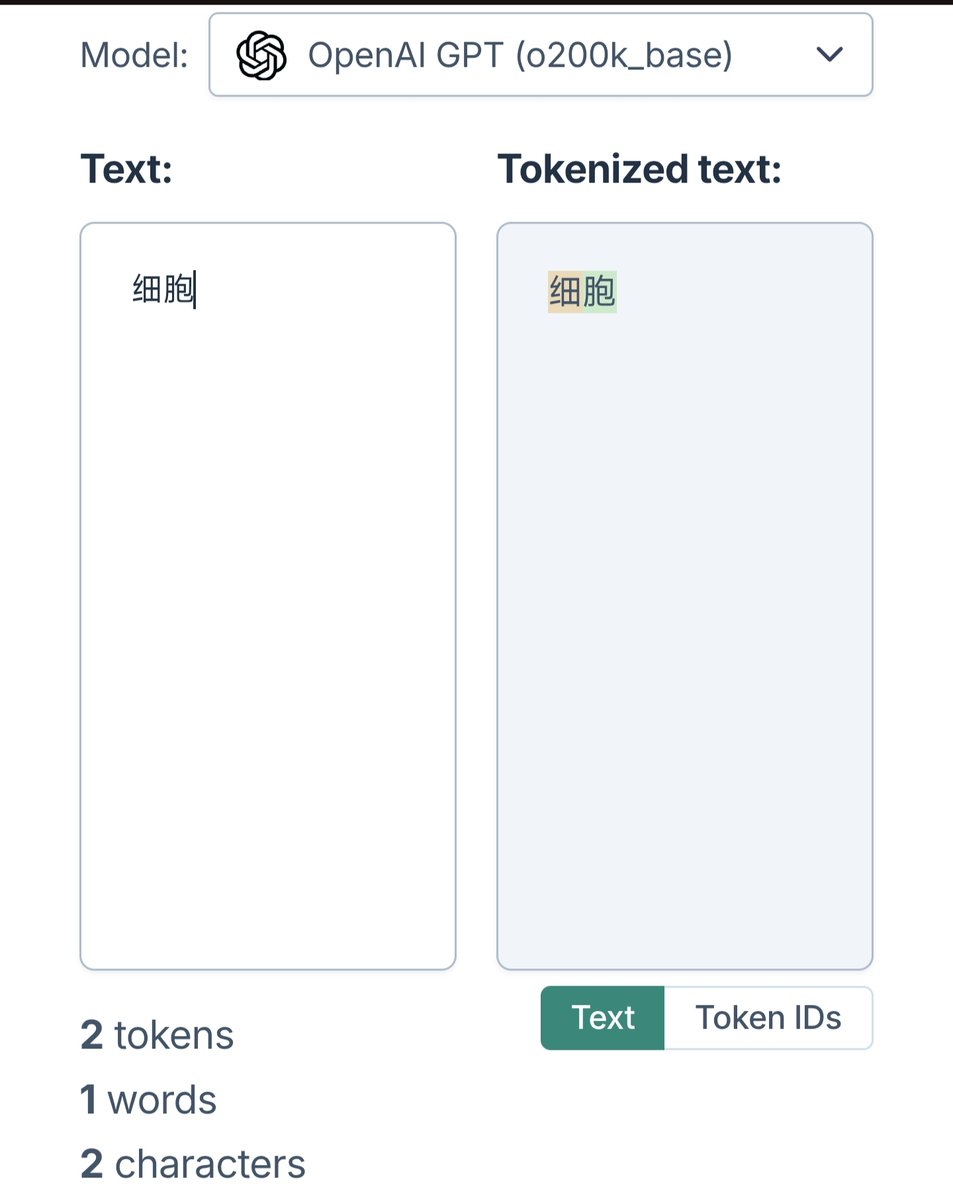

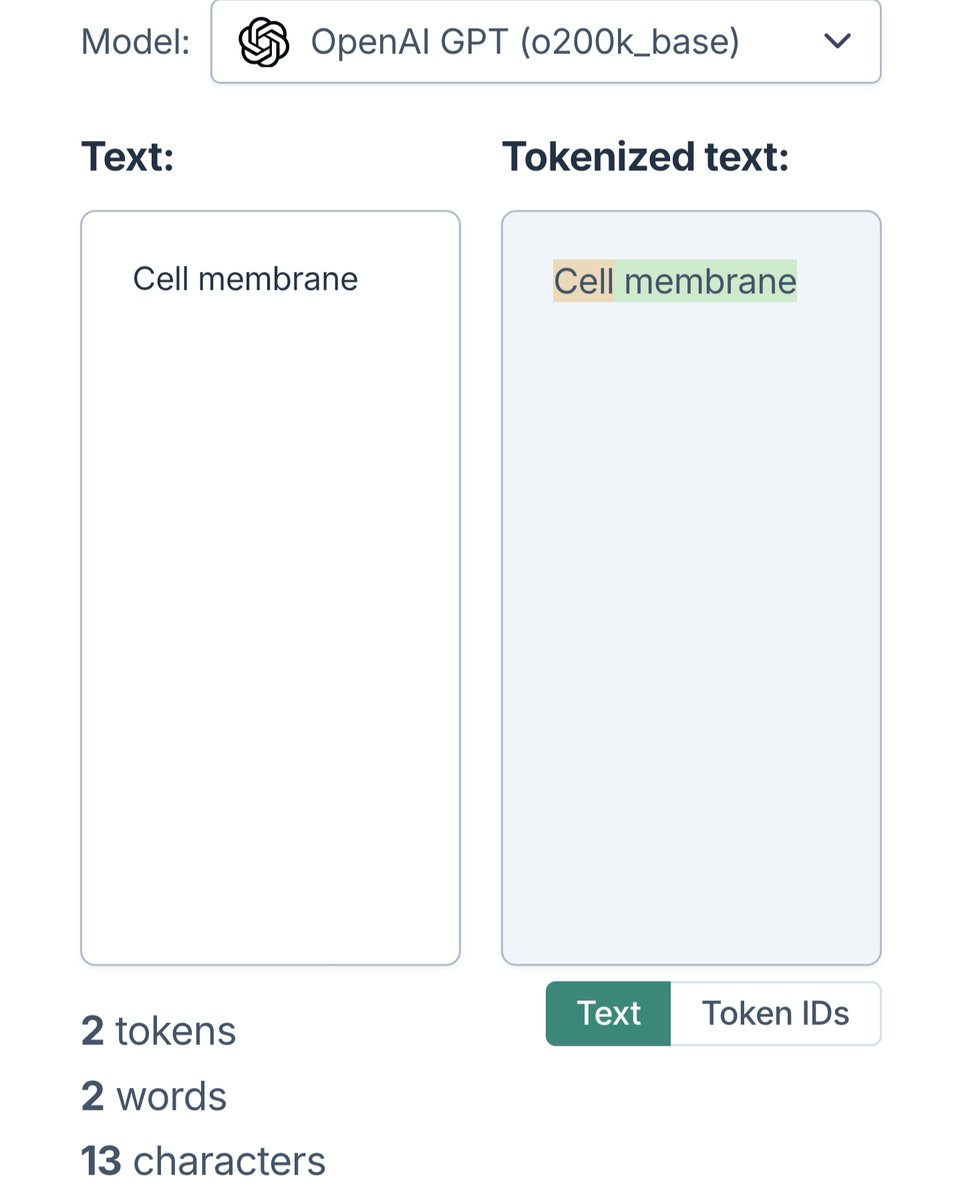

DeepSeek inference speed has significantly improved... but why does it switch reasoning language midway?? Is there a study showing that reasoning in non-English languages is better?