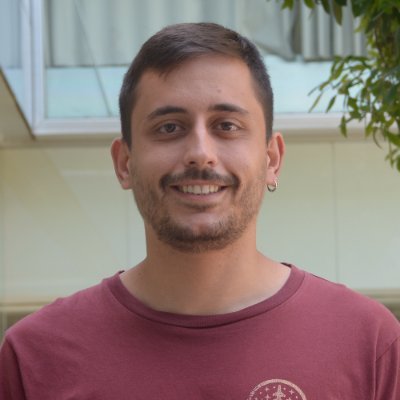

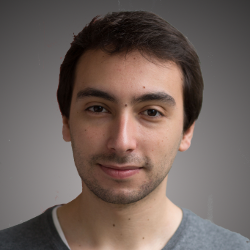

Thrilled to share that our CVPR 2025 paper “Autoregressive Distillation of Diffusion Transformers” (ARD) has been selected as an Oral! ✨

Catch us at CVPR on Saturday, June 14:

🗣 Oral Session 4A: 14:00-14:15, Karl Dean Ballroom

🖼 Poster Session 4: 17:00-19:00, ExHall D, #230

**TL;DR:** In standard diffusion we sample images step by step, with each step depending only on the immediately preceding one. We distilled the diffusion transformer into an autoregressive architecture (like an LLM) that leverages the entire history of samples, which significantly boosts image quality while **enabling very fast generation in just 3–4 steps**. The model achieves state-of-the-art results for its size on ImageNet-256 and on text-to-image generation in 3 steps (1.7B parameters).

**Why stop by?**

1️⃣ From diffusion to autoregression. A general recipe that extends any distilled diffusion model into an autoregressive transformer.

2️⃣ 3–4 denoising steps → photorealism. Up to 20× faster generation without sacrificing fidelity.

3️⃣ SOTA text-to-image alignment among publicly available few-step models (DiT with 1.7B parameters).

4️⃣ SOTA ImageNet-256 FID.

💬 Swing by #230 or the talk and let’s chat about fast image generation!

Huge shout-out to my intern Yeongmin Kim and to our incredible co-authors: @SAnagnostidis, @yuming_du, @schoenfeldedgar, @jonaskohler, Markos Georgopoulos, @AlbertPumarola, @alitabet!

Paper: arxiv.org/abs/2504.11295

Code: github.com/alsdudrla10/ARD
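For anyone who wants the TL;DR contrast in code form, here is a toy sketch of the difference between a sampler whose update sees only the previous state and one whose update sees the whole trajectory so far. This is not the ARD implementation: the function names `markov_sample` and `history_sample` and the toy step functions are hypothetical stand-ins chosen for illustration, and a real model would replace them with a learned network.

```python
from typing import Callable, List

State = List[float]


def markov_sample(x_T: State, step_fn: Callable[[State, int], State], num_steps: int) -> State:
    """Step-by-step sampling: each update sees only the current state."""
    x = x_T
    for t in range(num_steps, 0, -1):
        x = step_fn(x, t)
    return x


def history_sample(x_T: State, step_fn: Callable[[List[State], int], State], num_steps: int) -> State:
    """History-conditioned sampling: each update sees every state produced so far."""
    history = [x_T]
    for t in range(num_steps, 0, -1):
        history.append(step_fn(history, t))
    return history[-1]


# Toy stand-ins for a denoiser (assumptions for illustration only).
def toy_markov_step(x: State, t: int) -> State:
    # Halve the current state.
    return [0.5 * v for v in x]


def toy_history_step(history: List[State], t: int) -> State:
    # Average all past states coordinate-wise, then halve the average.
    n = len(history)
    dim = len(history[0])
    mean = [sum(h[i] for h in history) / n for i in range(dim)]
    return [0.5 * v for v in mean]


if __name__ == "__main__":
    print(markov_sample([8.0], toy_markov_step, num_steps=3))
    print(history_sample([8.0], toy_history_step, num_steps=3))
```

The only structural difference is what gets passed to the step function: a single state in the first case, the growing list of states in the second, which is loosely analogous to how an LLM attends over its earlier tokens.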