Alejandro Lozano

48 posts

@Ale9806_

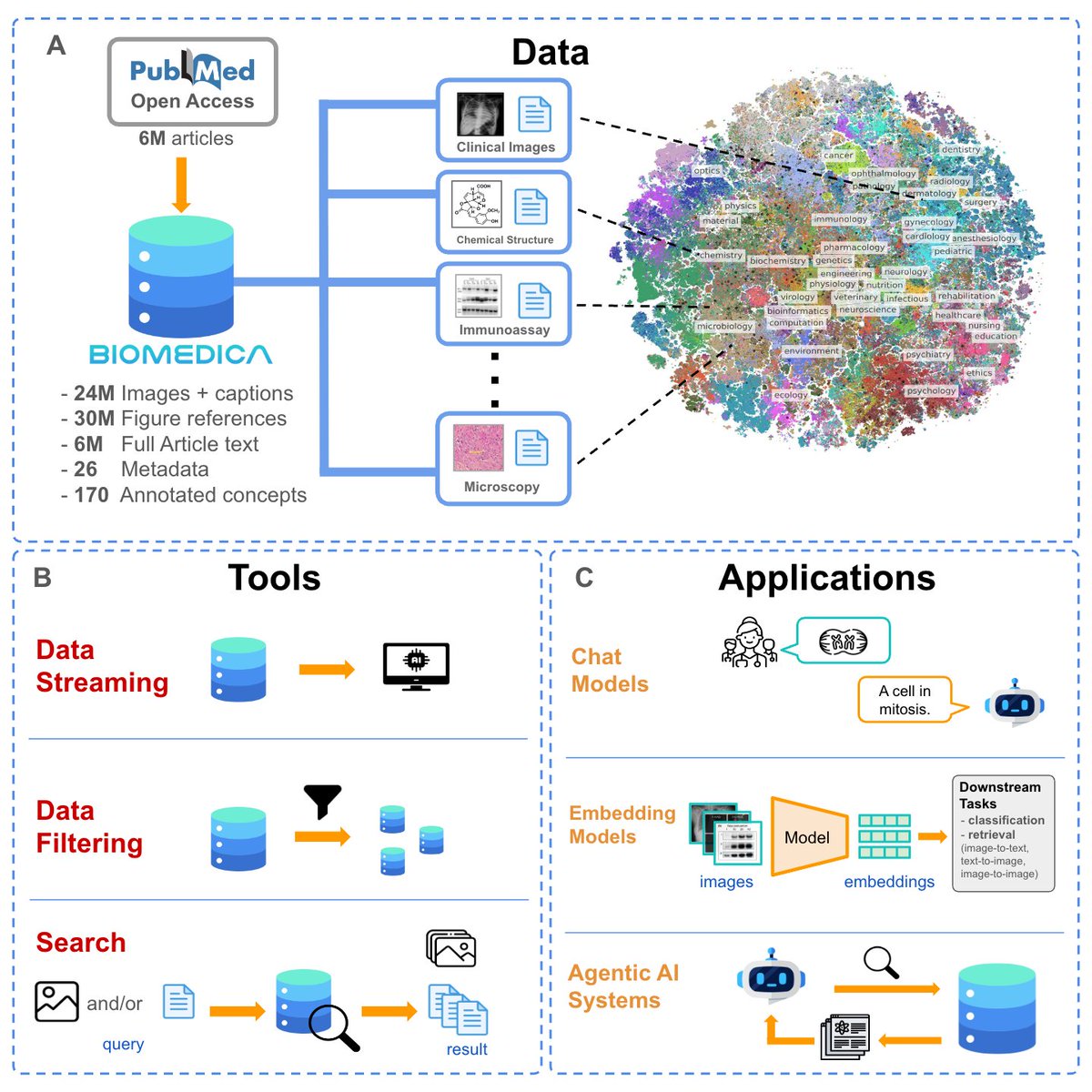

Ph.D. Student @ Stanford AI Lab. Building open biomedical AI.

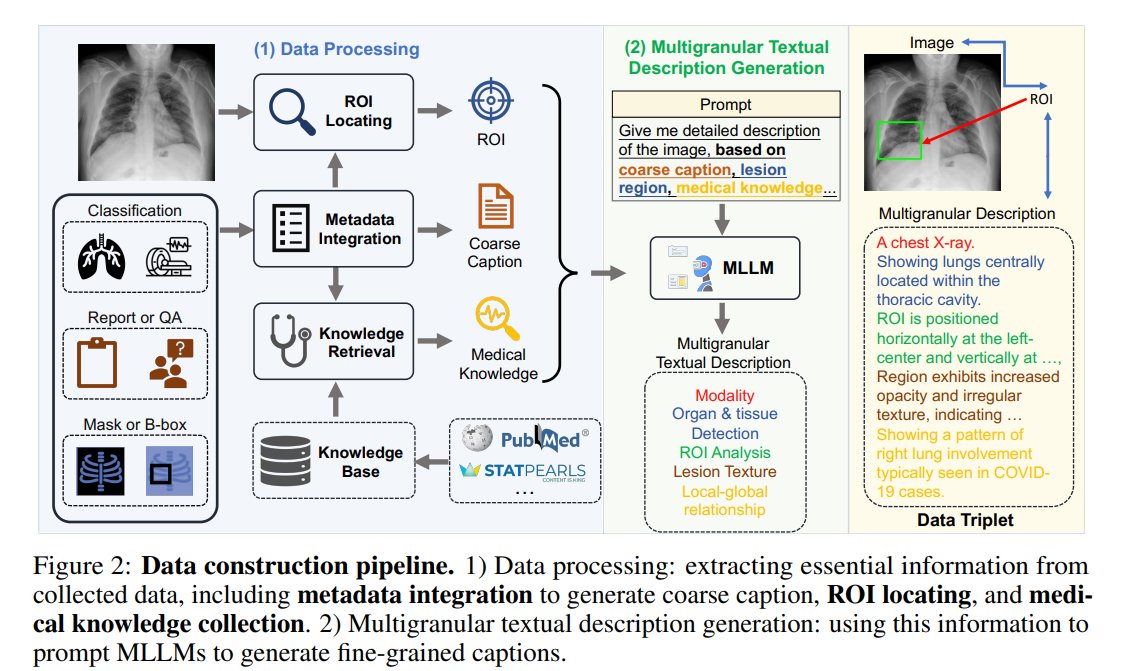

Introducing MicroVQA: A Multimodal Reasoning Benchmark for Microscopy-Based Scientific Research #CVPR2025 ✅ 1k multimodal reasoning VQAs testing MLLMs for science 🧑‍🔬 Biology researchers manually created the questions 🤖 RefineBot: a method for fixing QA language shortcuts 🧵

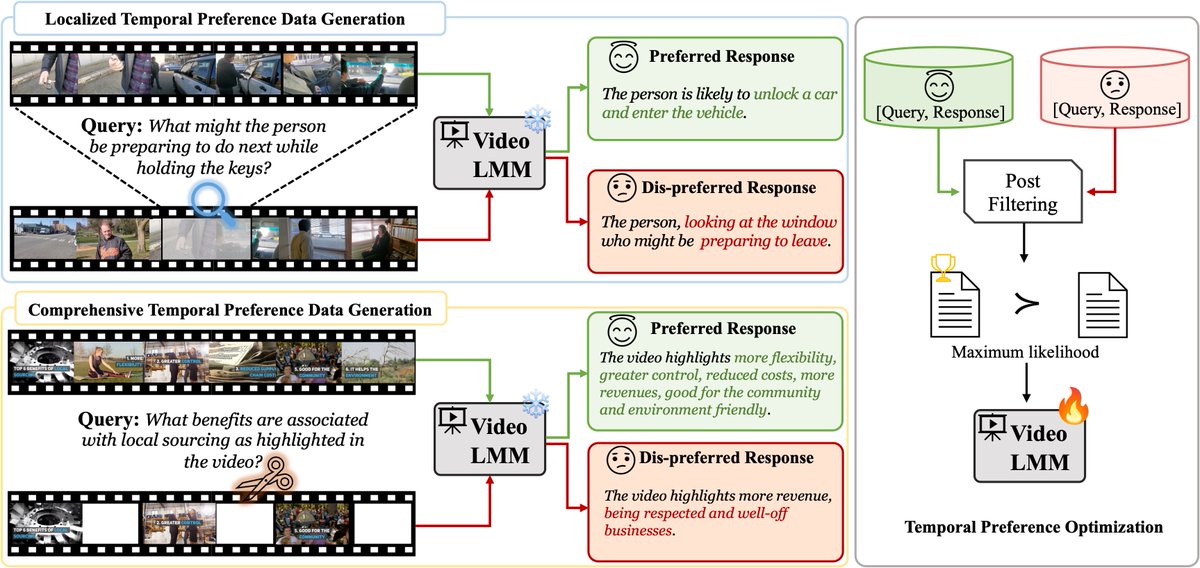

🚨 Large video-language models like LLaVA-Video can do single-video tasks. But can they compare videos? Imagine you’re learning a sports skill like kicking: can an AI tell how your kick differs from an expert's in a reference video? 🚀 Introducing "Video Action Differencing" (VidDiff), ICLR 2025 🧵

🎉 Excited to share that our latest research, Time-to-Event Pretraining for 3D Medical Imaging, has been accepted at ICLR 2025! 🚀

🔍 Improving Medical Image Pretraining with Time-to-Event (TTE) Modeling

While self-supervised methods in medical imaging have significantly enhanced diagnostic accuracy and image segmentation performance, they struggle with prognosis: the prediction of future health outcomes. Reliable prognosis is essential for assessing disease progression and guiding clinical decision-making. Our framework addresses this gap by combining time-to-event (TTE) modeling with longitudinal EHRs to pretrain a 3D image encoder for outcome prediction, significantly improving prognostic prediction using only imaging data.

🔥 Key Highlights
• Time-to-event (TTE) pretraining: Predicts time until critical clinical events by learning 3D imaging biomarkers.
• Massive scale: Trained on 8,192 TTE tasks and 18,945 CT scans linked to EHR data containing 225M clinical events, the largest paired EHR+3D imaging research dataset currently available.
• Superior prognostic performance: 23.7% AUROC boost, 29.4% Harrell’s C-index improvement, and 54% better calibration without sacrificing diagnostic accuracy.

🛠️ How It Works
• Transform patient EHR timelines into large-scale TTE pretraining tasks, predicting time distributions until key medical events.
• A 3D vision encoder processes CT scans, generating embeddings for a task-specific TTE head (e.g., Cox models, survival networks).
• Enables AI to capture long-term health trajectories for better risk stratification and survival analysis.

📖 Read more
📄 Paper: lnkd.in/gfnGrNgS
🗂️ Dataset: EHR: lnkd.in/gAxwvXdw, Imaging: lnkd.in/gWKPrPir
💻 Code: Coming Soon!
🤗 Hugging Face Models: Coming Soon!

🙌 Huge thanks to my amazing co-leads: @jasonafries, @Ale9806_ and co-authors @jeyamariajose, Ethan Steinberg, @loublanks, @Dr_ASChaudhari, @curtlanglotz, @drnigam

Excited to push medical imaging AI forward. Stay tuned for tutorials & deep dives! 🏥🔬

#ICLR2025 #AI #MedicalImaging #TTE #SurvivalAnalysis #MultiModalAI
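
For readers new to survival analysis, here is a minimal sketch of two ideas the post mentions: a Cox-style loss that a TTE head could be trained with, and Harrell's C-index for evaluation. This is not the authors' code (the post lists it as coming soon). It assumes a head that outputs one scalar risk score per scan, uses the standard Cox partial likelihood without tie correction, and every function and variable name is hypothetical.

```python
import math


def cox_neg_partial_log_likelihood(risk_scores, times, events):
    """Negative Cox partial log-likelihood, averaged over observed events.

    risk_scores: one scalar per patient, higher means higher predicted hazard
                 (assumed to come from a TTE head on top of image embeddings)
    times:       time to the event, or to censoring if no event was observed
    events:      1 if the event was observed, 0 if the patient was censored
    """
    loss = 0.0
    n_events = 0
    for i in range(len(times)):
        if not events[i]:
            continue  # censored patients contribute only through risk sets
        # Risk set: everyone still under observation at time times[i].
        risk_set = [risk_scores[j] for j in range(len(times)) if times[j] >= times[i]]
        m = max(risk_set)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(r - m) for r in risk_set))
        loss -= risk_scores[i] - log_sum_exp
        n_events += 1
    return loss / max(n_events, 1)


def harrell_c_index(risk_scores, times, events):
    """Fraction of comparable patient pairs that the risk scores rank correctly.

    A pair (i, j) is comparable when patient i had an observed event strictly
    before patient j's event or censoring time. Tied scores count as 0.5.
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5
    return concordant / comparable if comparable else float("nan")


# Toy cohort: four patients, the third one is censored at t=6.
toy_times = [2.0, 4.0, 6.0, 8.0]
toy_events = [1, 1, 0, 1]
good_scores = [3.0, 2.0, 1.0, 0.0]  # earlier events get higher risk
bad_scores = [0.0, 1.0, 2.0, 3.0]   # ordering reversed
```

On the toy cohort, scores that rank earlier events as higher risk should give a lower loss and a higher C-index than the reversed scores. In practice, an established library implementation would be preferable to these O(n²) loops.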