James Burgess

97 posts

@jmhb0

https://t.co/E3iA6NjTGg PhD student in ML, computer vision & biology at Stanford 🇦🇺

Stanford, CA · Joined May 2021

1.6K Following · 365 Followers

Pinned Tweet

@emollick It could be that the video about it uses the word 'evil' - it's in the intro

youtube.com/watch?v=lvMMZL…

(probably the bot making the OP saw it)

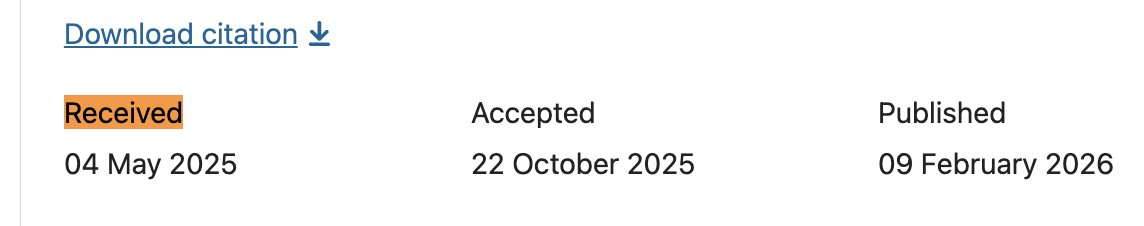

@chrmanning @kevinroose Also, the paper was submitted to Nat Medicine in May 2025 (screenshot from nature.com/articles/s4159…)

@kevinroose Here’s the referenced study:

Bean et al. 2026. Reliability of LLMs as medical assistants for the general public: a randomized preregistered study. Nat Med.

nature.com/articles/s4159…

Important note: essentially the same article has been on arXiv since Apr 2025:

arxiv.org/abs/2504.18919

This paper is awesome! I love the reframing of synthetic question generation for training or evaluating search models as a *Search Environment* -- a great framing for works like InPars, Promptagator, UDAPDR, ... with the new environment-first framing of AI systems.

"For the 3B LLMs, RL improves over RAG by 9.6 and 5.5 points for PaperSearchQA and BioASQ respectively. For 7B models, the difference is 14.5 and 9.3." 🚀

Congratulations @jmhb0 and team! 🎉

James Burgess @jmhb0

Check out PaperSearchQA, which I'll present at EACL in Morocco this March! We built an RL training environment for teaching LLMs to search and reason over scientific papers. 60k question-answer pairs + 16M papers to search over + benchmarks. RL training improves the model.

Thanks to my advisor @yeung_levy and collaborators, Jan, Duo, @Zhang_Yu_hui, @Prof_Lundberg, @Ale9806_, @minwsun. Work done at @StanfordAILab

All links at the project page: jmhb0.github.io/PaperSearchQA/

And HuggingFace: huggingface.co/papers/2601.18…

Thanks to my advisor @yeung_levy and collaborators, Jan, Duo, @Prof_Lundberg, @Ale9806_, @minwsun. Work done at @StanfordAILab

All links at the project page: jmhb0.github.io/PaperSearchQA/

And HuggingFace: huggingface.co/papers/2601.18…

James Burgess retweeted

Midway through grad school, I was at the Monterey Bay Aquarium dazzled, watching cuttlefish change the colours and textures on their skin. This morning, our paper, "Soft photonic skins with dynamic texture and colour control" (rdcu.be/eX1Sw) was published in Nature.

James Burgess retweeted

We’re thrilled to announce that Wispr Flow raised another $25M round after 10x'ing our revenue in just 5 months.

Our Series A2 was led by @hanstung at @notablecap (who was an early investor in five companies that made it to a $100B valuation, like Slack, TikTok, and Airbnb). We also brought on @StevenBartlett as an investor and partner.

But here's what matters more than the money:

We cracked voice input. Not transcription - actual understanding. Our users hit "send" in under 0.5 seconds without checking. They trust it blindly. That's never existed before.

In a recent benchmark, Wispr came out as 3-4x more accurate than OpenAI, ElevenLabs, and Siri.

Voice input was step one. Now, we’re building the assistant that actually gets things done.

The keyboard had a good 150-year run.

Time to build what comes next.

PS: like, retweet, and bookmark to get wispr flow for free for 3 months ❤️

— Written with @WisprFlow

James Burgess retweeted

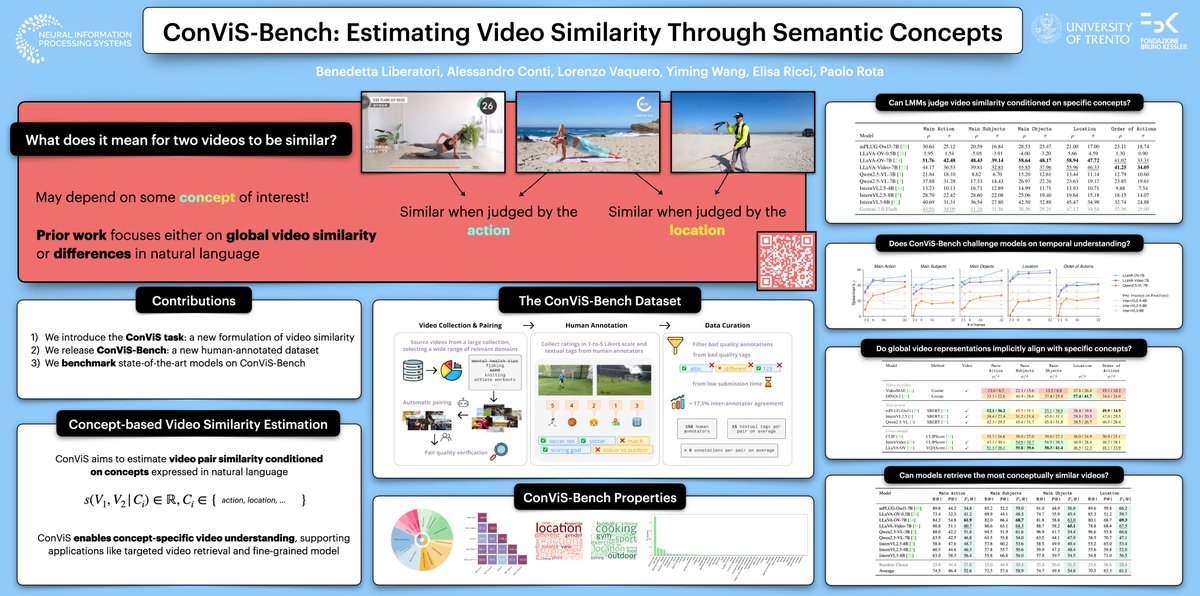

What does it really mean for two videos to be similar?

At #NeurIPS2025, we’ll present ConViS-Bench: Estimating Video Similarity Through Semantic Concepts.

Stop by our poster for a chat!

📍Exhibit Hall C,D,E — Poster N.4618

🕒 Thu, Dec 4, 2025 • 11:00 AM – 2:00 PM PST
