Alex Oh retweetledi

Yann LeCun and his team dropped yet another paper!

"V-JEPA 2.1: Unlocking Dense Features in Video Self-Supervised Learning"

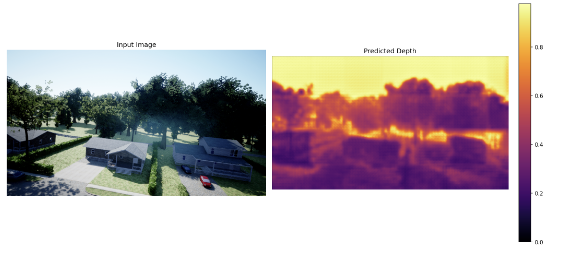

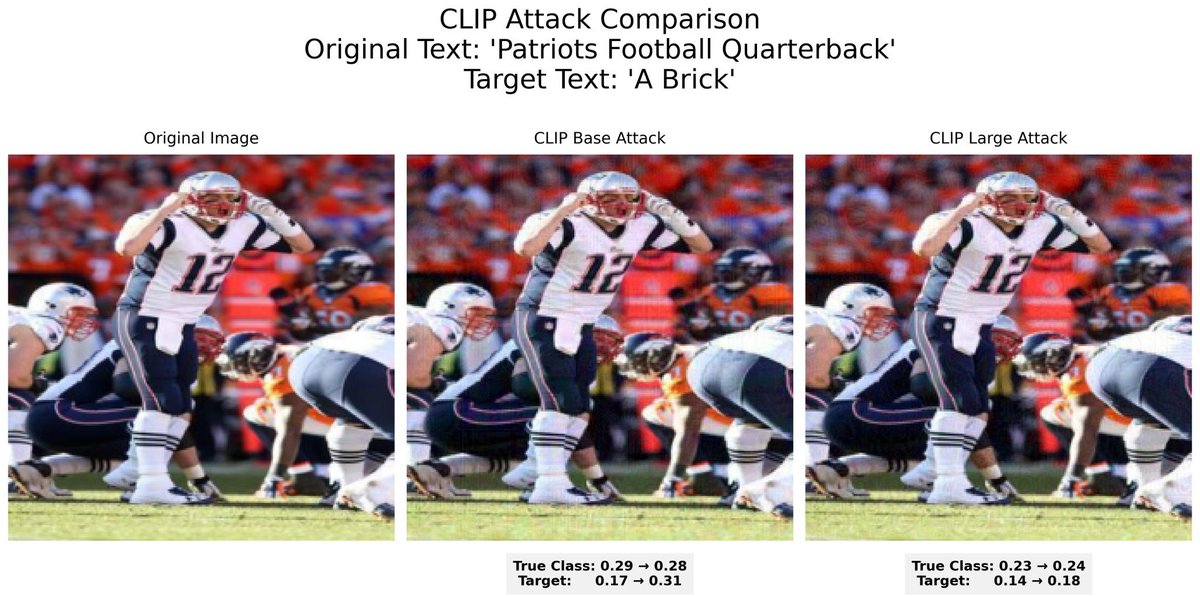

In this V-JEPA upgrade, they showed that if you make a video model predict every patch, not just the masked ones AND at multiple layers, they are able to turn vague scene understanding into dense + temporal stable features that actually understands "what is where".

This key insight drove improvements in segmentation, depth, anticipation, and even robot planning.

English