Alexander Long

@AlexanderLong

Founder @Pluralis | ML PhD

Back-of-envelope numbers for 1 gigawatt data center:
- All-in Capex: ~$50 bn
- Enterprise revenue generated: ~$25-30 bn/year
- Electricity cost: $1-2 bn/year
- ~2 year payback.
The boom is real.
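The ~2 year payback can be checked with a quick sketch. This assumes a simple payback model (capex divided by annual revenue net of electricity) and ignores every other operating cost, depreciation, and financing, none of which the post gives. The function name `payback_years` is made up for illustration.

```python
def payback_years(capex_bn, revenue_bn_per_year, electricity_bn_per_year):
    """Simple payback: capex divided by annual revenue net of electricity.

    Assumption: electricity is the only operating cost counted here,
    because it is the only one quoted in the post.
    """
    net = revenue_bn_per_year - electricity_bn_per_year
    if net <= 0:
        raise ValueError("net annual cash flow must be positive")
    return capex_bn / net


CAPEX_BN = 50.0  # all-in capex for 1 GW, from the post

# Optimistic end: high revenue, low electricity cost.
fast = payback_years(CAPEX_BN, 30.0, 1.0)
# Pessimistic end: low revenue, high electricity cost.
slow = payback_years(CAPEX_BN, 25.0, 2.0)

print(f"payback: {fast:.1f} to {slow:.1f} years")
```

Under these assumptions the range brackets the quoted figure: roughly 1.7 years at the optimistic end and 2.2 years at the pessimistic end.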

My estimates are that Pluralis or Pluralis-like protocols can bring online about 4 GW of compute that is completely unusable for ML workloads today as it is not co-located.

one nice quote from the welch labs video to make this more intuitive: "if u train a generative model, u know, to predict what's gonna happen in a dashcam video, it will spend most of its resources predicting the random motion of the leaves on the trees that are bordering the road." ~ @ylecun

The papers I'm proudest of in my career are on things that never took off, missed the mark on "the big thing" by as much as you possibly could, and got a few dozen citations. They've had ~zero impact. But every time someone tells me they loved those papers it makes it worth it.

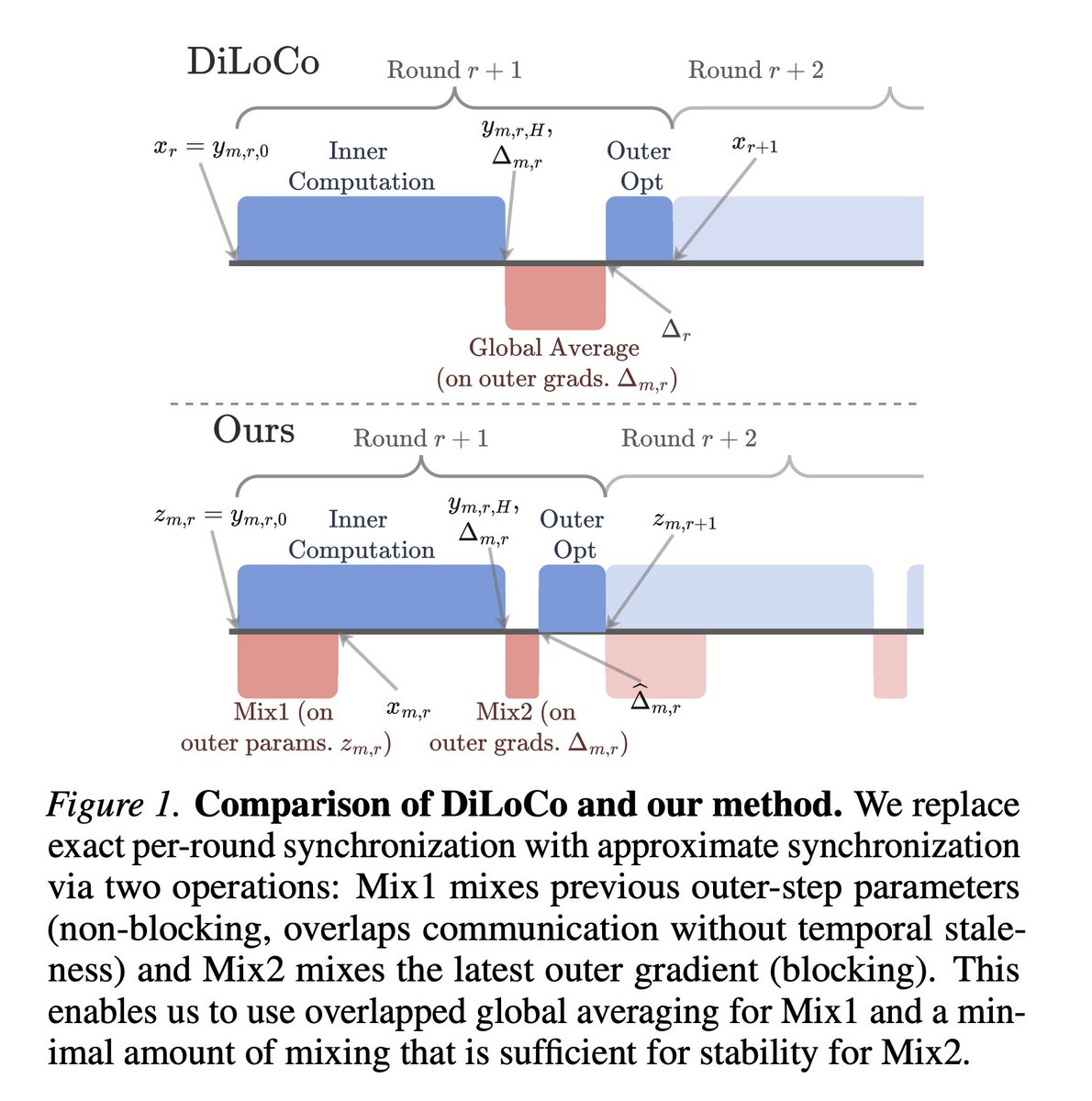

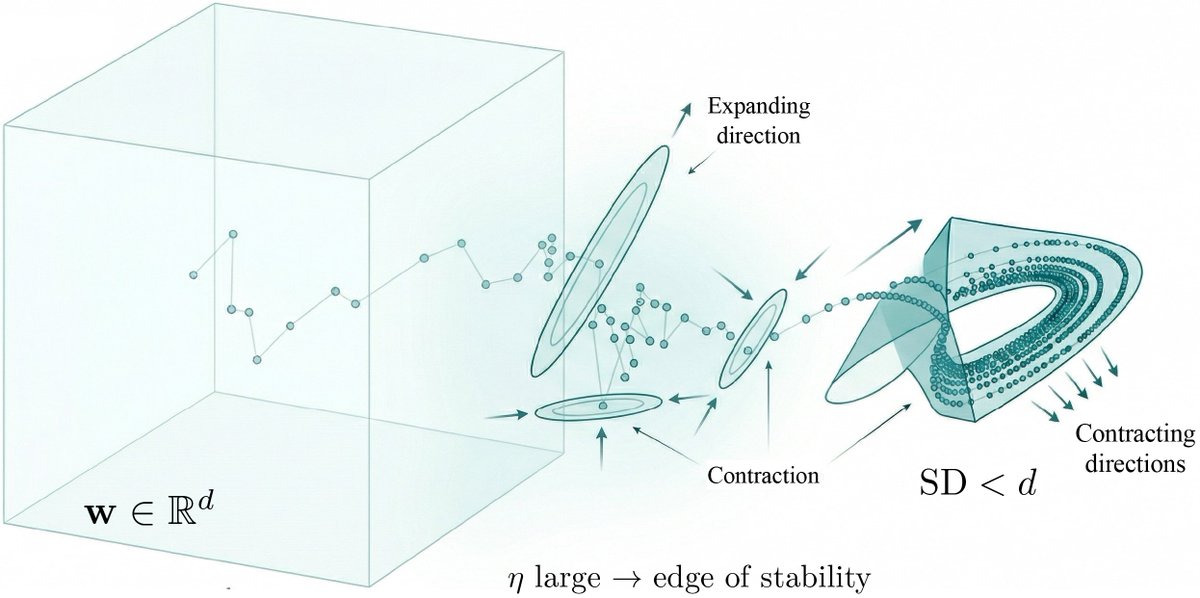

This is Decoupled DiLoCo: our new resilient and flexible way to train advanced AI models across multiple data centres. 🧵

Training frontier models over the internet requires new techniques. Today, we present ResBM, a residual encoder-decoder bottleneck architecture that enables 128x activation compression for low-bandwidth distributed pipeline parallel training. Developed for @IOTA_SN9, we show SOTA compression without significant loss in convergence rates, increases in memory, or compute overhead. Expect the full paper release in the next 72 hours.

wow, they did a non-commercial license...
- M2: Display the name if >30M revenue / 100M users
- M2.1: Display the name
- M2.5: Acceptable use policy
- M2.7: Non-Commercial license

If Martin is right, he also just wrote the product spec for open source + distributed compute where broad swaths of groups, individuals and organizations contribute their compute resources to training runs for large param open source models. There are lots of issues in figuring this out: homogeneity vs heterogeneity of the training clusters, orchestration, financial incentives etc etc etc but some early projects are good signal as to where this can go and that these limitations can be overcome (folding@home, Venice, Tao). An attempted oligopoly on intelligence is the perfect boundary condition for a bottoms up uprising of fully open, fully distributed AI.

It's only a matter of time before only the model creators have access to the most powerful models. The rest get access to smaller, distilled versions. Or access the models through first party apps and services that don't provide direct access to the token path. The investment needs for training are too high, and distillation too effective to warrant any other future.

We do not plan to make Mythos Preview generally available. Our goal is to deploy Mythos-class models safely at scale, but first we need safeguards that reliably block their most dangerous outputs. We’ll begin testing those safeguards with an upcoming Claude Opus model.