Alexandre Brown 🇨🇦

@AlexandreBrown0

PhD student, researcher at @Mila_Quebec ~ RL, robotics and stuff

One gem from the Composer paper: RL improved both pass@k and pass@1. Does this suggest RL does not just reweight existing capabilities but also teaches new ones? 💎
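
For context on the metric: pass@k is commonly computed with the unbiased estimator from the Codex paper (Chen et al., 2021). Draw n samples per problem, count the c correct ones, and estimate the probability that at least one of k samples is correct. The sketch below assumes that standard definition; the Composer paper's exact evaluation protocol is not stated here, and the name `pass_at_k` is illustrative.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: total samples drawn, c: samples that were correct, k: budget.
    Returns 1 - C(n - c, k) / C(n, k), the probability that a random
    subset of k samples contains at least one correct answer.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    # Fewer than k incorrect samples: every k-subset contains a correct one.
    if n - c < k:
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


# Example: 20 samples, 2 correct.
print(pass_at_k(20, 2, 1))   # per-sample success rate, c / n
print(pass_at_k(20, 2, 10))  # chance that 10 samples include a correct one
```

With k = 1 the estimator reduces to c/n, so pass@1 can rise simply because probability mass shifts toward answers the model already produced sometimes. A gain at larger k means more problems are solved at least once within the sample budget, which is why it is read as a hint of new capability.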

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. The round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, with participation from other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: amilabs.xyz (AMI: "Real world. Real intelligence.")

491 parameters: a new top scorer for 10-digit addition with transformers! Who can beat it?

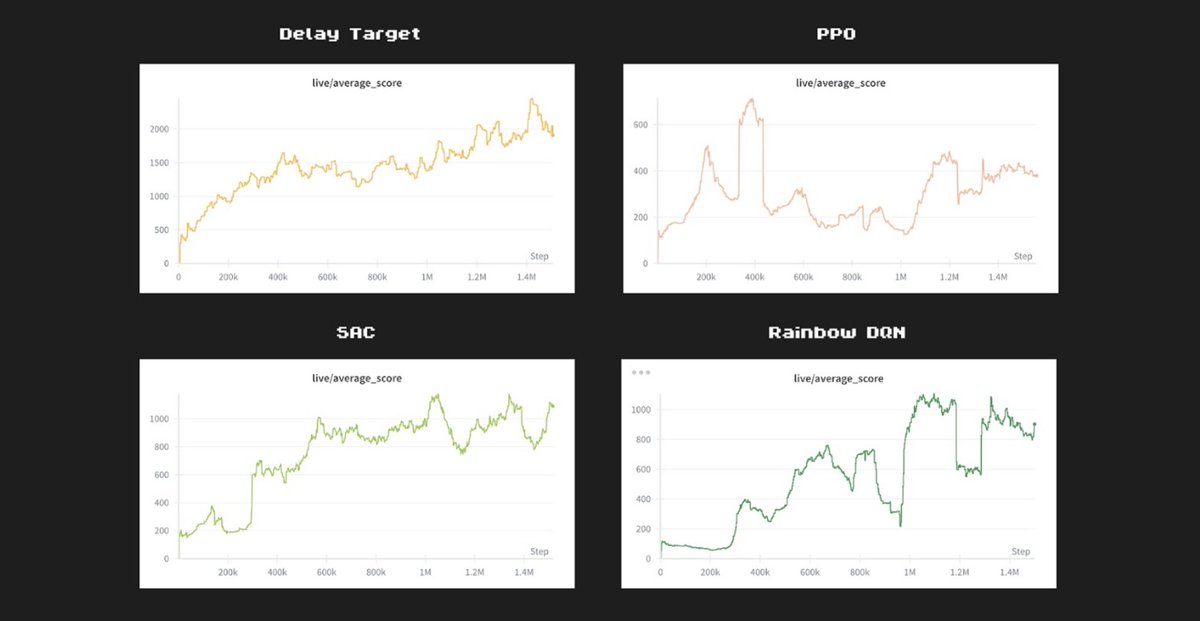

🚨 Excited to share our new work: "Stable Gradients for Stable Learning at Scale in Deep Reinforcement Learning"! 📈 We propose gradient interventions that enable stable, scalable learning, achieving significant performance gains across agents and environments! Details below 👇

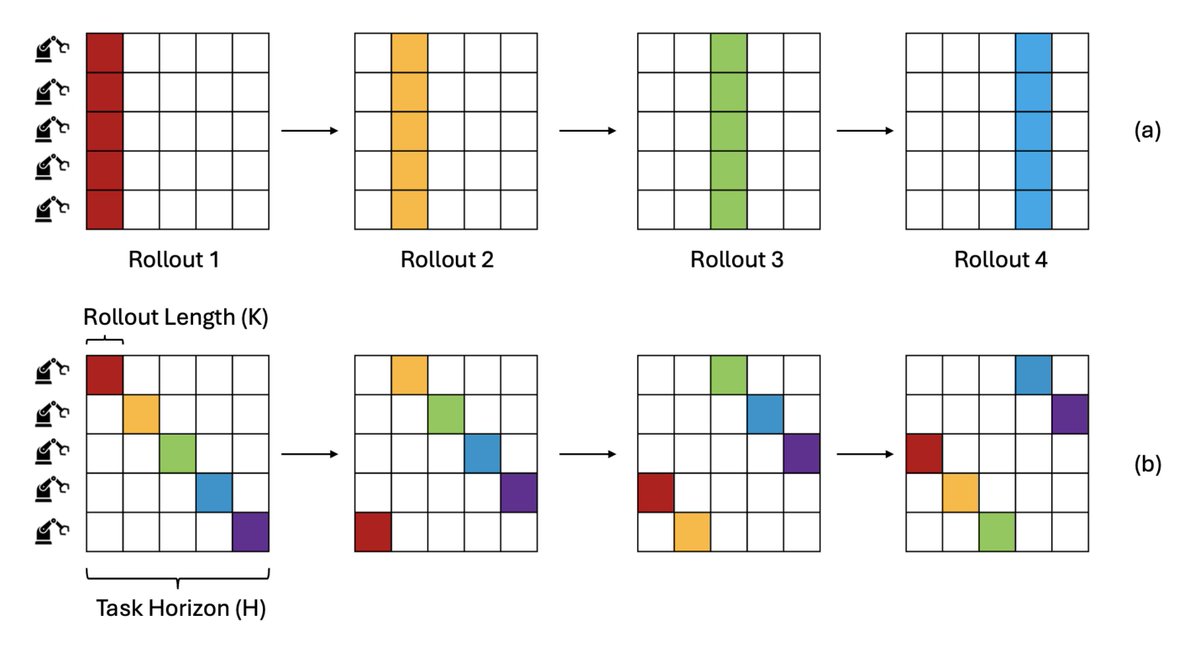

Can we quickly improve a pre-trained robot policy by learning from real-world human corrections? Introducing Compliant Residual DAgger (CR-DAgger), a system that raises policy performance to close to 100% on challenging contact-rich manipulation problems, using as few as 50 to 100 episodes of human corrections. Co-led by @XiaomengXu11 and me, CR-DAgger quickly learns a force-aware residual policy even when the base policy is position-only. CR-DAgger already won the best paper award at the Human2robot workshop at CoRL 2025, and will be presented at NeurIPS tomorrow, Dec 3, at poster #2314. Come talk to us if you are interested!
- NeurIPS paper: arxiv.org/abs/2506.16685
- Extended version with more experiments & learnings: compliant-residual-dagger.github.io/files/CR_DAgge…
- Full code and instructions: github.com/yifan-hou/cr-d…