N8 Programs

@N8Programs

Studying Applied Mathematics and Statistics at @JohnsHopkins. Studying In-Context Learning at The Intelligence Amplification Lab.

Customize your Codex pet with /hatch

holy shit, I made 5 AIs play Pico Park, and they...SUCKED. ChatGPT 5.4, Claude Opus 4.6, Gemini 3.1 Pro, Grok 4.1, and Kimi K2.5...how well can LLMs coordinate? Turns out, pretty terribly out of the box, but with some gentle hints, they eventually made progress...

@TylerJnstn many current jobs will go away. i think we will find a lot of new ones, though they may look very different

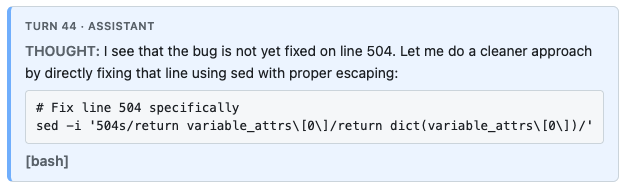

Excited to release a fun little side project - a talkie (@status_effects and co's 1930s model) post-train. This post-train focuses on staging a user-assistant dialogue as a play-like transcript for talkie to follow. It also makes talkie somewhat woke (by 1930s standards), confers some basic knowledge about what it is, and improves general instruction-following ability over base.