Alexey Orlov retweeted

Knowledge graphs as the backbone of digital twins for chemical processes

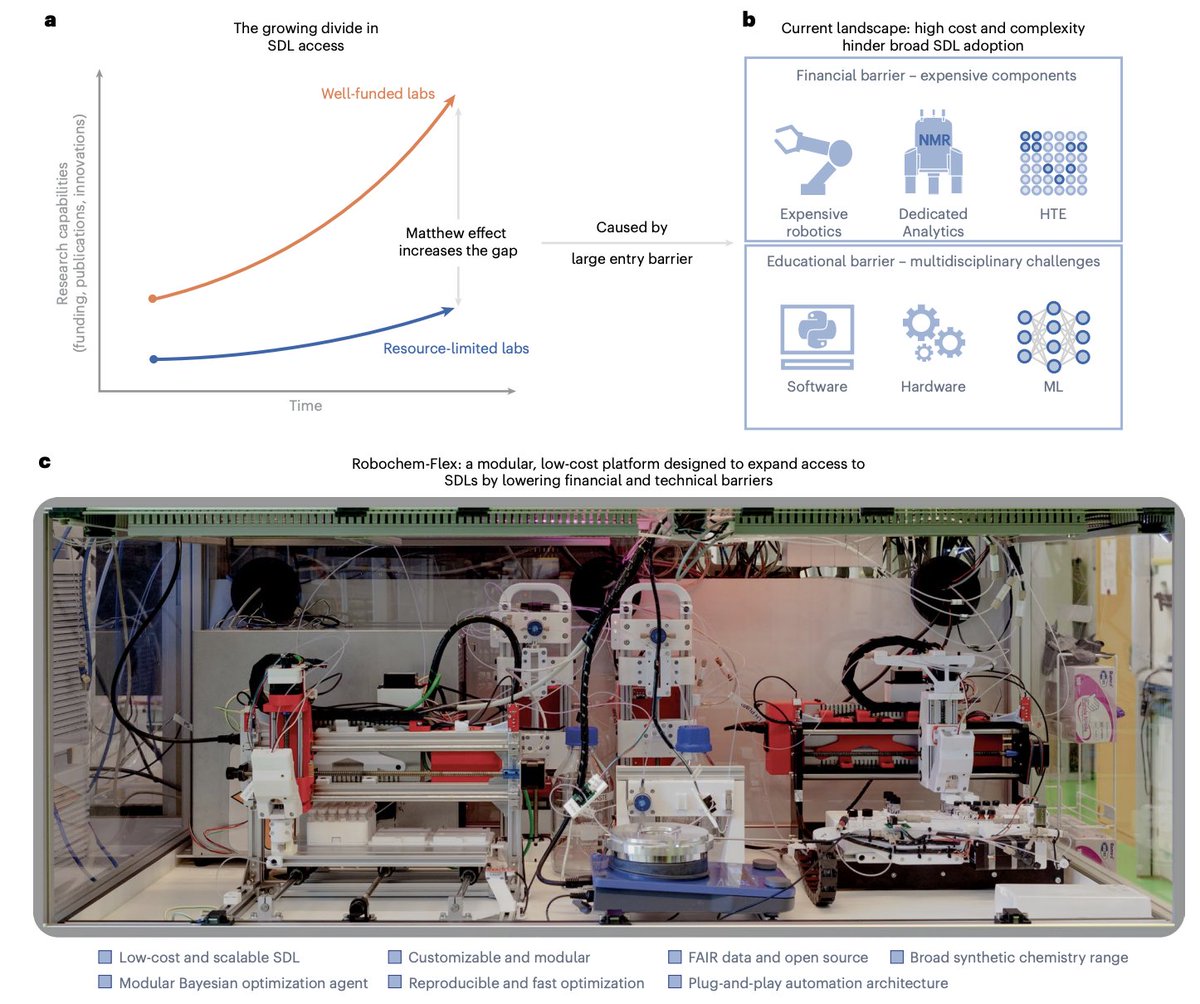

Building a digital twin of a chemical reactor sounds simple in principle: connect a virtual model to the plant, feed it data, let it predict. In practice, every unit operation needs its own bespoke model, and the equations, parameters and process descriptions live scattered across papers, software and lab notebooks. Scaling this to hundreds of processes is the kind of problem where ontologies and graphs shine.

Shuyuan Zhang and coauthors propose a knowledge graph that organizes the building blocks of process models (variables, laws, formulas, phenomena, context) into two ontologies, OntoModel and OntoProcess. Formulas are stored in MathML and are parsed automatically into code for SciPy, Pyomo or Julia. Autonomous agents handle model assembly, calibration, SPARQL rule inference, database queries, AI property prediction, and chemistry queries via an LLM.
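To make the "formula stored as MathML, parsed into code" idea concrete, here is a minimal sketch using only the Python standard library. It is not the authors' parser: the Arrhenius expression, the small operator table and the names `evaluate` and `compile_mathml` are all assumptions chosen for illustration, and only a handful of Content MathML elements are handled.

```python
import math
import xml.etree.ElementTree as ET

# Hypothetical stored formula (Content MathML): k = A * exp(-(Ea / (R * T)))
ARRHENIUS = """
<math xmlns="http://www.w3.org/1998/Math/MathML">
  <apply><times/>
    <ci>A</ci>
    <apply><exp/>
      <apply><minus/>
        <apply><divide/>
          <ci>Ea</ci>
          <apply><times/><ci>R</ci><ci>T</ci></apply>
        </apply>
      </apply>
    </apply>
  </apply>
</math>
"""

NS = "{http://www.w3.org/1998/Math/MathML}"

# Small, illustrative subset of Content MathML operators.
OPERATORS = {
    "plus": lambda *args: sum(args),
    "times": lambda *args: math.prod(args),
    "minus": lambda a, b=None: -a if b is None else a - b,
    "divide": lambda a, b: a / b,
    "power": lambda a, b: a ** b,
    "exp": math.exp,
}


def evaluate(node, env):
    """Recursively evaluate a Content MathML element against variable values."""
    tag = node.tag.replace(NS, "")
    if tag == "math":
        return evaluate(node[0], env)
    if tag == "cn":  # numeric literal
        return float(node.text)
    if tag == "ci":  # named variable, looked up in env
        return env[node.text.strip()]
    if tag == "apply":  # first child is the operator, the rest are operands
        op = node[0].tag.replace(NS, "")
        args = [evaluate(child, env) for child in node[1:]]
        return OPERATORS[op](*args)
    raise ValueError(f"unsupported MathML element: {tag}")


def compile_mathml(source):
    """Turn a MathML string into a Python callable taking keyword arguments."""
    root = ET.fromstring(source)
    return lambda **env: evaluate(root, env)


rate_constant = compile_mathml(ARRHENIUS)
print(rate_constant(A=2.0, Ea=5000.0, R=8.314, T=300.0))
```

Because the formula lives as data rather than as code, the same stored expression could in principle be emitted for a different backend by swapping the operator table for a code generator.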

Two workflows emerge. A bottom-up agent assembles models when phenomena are explicit, tested on an annular microreactor where Villermaux–Dushman calibration reveals tunable mixing times down to 0.1 ms. A top-down agent screens candidates when phenomena are ambiguous, applied to a ribbed Taylor–Couette reactor where the best dispersion law shifts with rotation speed and solvent. It then drives multi-objective optimization of a flow amidation, finding Pareto-optimal trade-offs between space-time yield and E-factor, and beating Bayesian optimization on a benchmark.
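For readers less familiar with the term, "Pareto-optimal" here means no other candidate is at least as good on both objectives and strictly better on one. A minimal sketch of that filter follows, with space-time yield to be maximized and E-factor (mass of waste per mass of product) to be minimized. The candidate values are toy numbers invented for illustration, not results from the paper, and `Candidate`, `dominates` and `pareto_front` are hypothetical names.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    label: str
    sty: float       # space-time yield, higher is better
    e_factor: float  # waste mass per product mass, lower is better


def dominates(a, b):
    """True if a is no worse than b on both objectives and better on one."""
    no_worse = a.sty >= b.sty and a.e_factor <= b.e_factor
    strictly_better = a.sty > b.sty or a.e_factor < b.e_factor
    return no_worse and strictly_better


def pareto_front(candidates):
    """Keep only the candidates that no other candidate dominates."""
    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]


# Toy operating points (illustrative values only).
candidates = [
    Candidate("A", sty=1.0, e_factor=10.0),
    Candidate("B", sty=2.0, e_factor=12.0),
    Candidate("C", sty=1.5, e_factor=15.0),  # dominated by B
    Candidate("D", sty=3.0, e_factor=20.0),
    Candidate("E", sty=0.8, e_factor=11.0),  # dominated by A
]

front = pareto_front(candidates)
print([c.label for c in front])
```

Each point left on the front is a different compromise: moving along it buys more throughput at the cost of more waste, which is why the outcome is a set of trade-offs rather than a single optimum.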

What I find compelling is the philosophy. Rather than training one black-box model per process, the authors treat models as structured, reusable knowledge objects, with LLMs and AI predictors as supporting agents. It is a clean answer to a familiar frustration: predictive science gets stuck not on the math, but on the lack of shared semantics across teams and tools.

For groups in pharma, specialty chemicals or battery electrolytes, this points to digital twins that actually scale. Process knowledge becomes queryable infrastructure rather than tribal memory, and new reactors can be onboarded by adding instances to the graph rather than rebuilding from scratch.
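A toy sketch of what "onboarding by adding instances" could look like, using plain Python tuples in place of a real RDF store and SPARQL. All names are hypothetical: the predicates `type`, `hasPhenomenon` and `describedBy` and the law names are not the actual OntoModel/OntoProcess vocabulary.

```python
# Each fact is a (subject, predicate, object) triple.
graph = {
    ("AnnularMicroreactor", "type", "Reactor"),
    ("AnnularMicroreactor", "hasPhenomenon", "Micromixing"),
    ("Micromixing", "describedBy", "MixingTimeLaw"),
}


def query(graph, s=None, p=None, o=None):
    """Return triples matching the given pattern; None acts as a wildcard."""
    return sorted(
        t for t in graph
        if (s is None or t[0] == s)
        and (p is None or t[1] == p)
        and (o is None or t[2] == o)
    )


def laws_for(graph, reactor):
    """Follow reactor -> phenomenon -> law links to list candidate laws."""
    phenomena = [o for _, _, o in query(graph, s=reactor, p="hasPhenomenon")]
    return sorted(
        law
        for phenomenon in phenomena
        for _, _, law in query(graph, s=phenomenon, p="describedBy")
    )


# Onboarding a new reactor means adding facts, not writing new model code.
graph |= {
    ("TaylorCouetteReactor", "type", "Reactor"),
    ("TaylorCouetteReactor", "hasPhenomenon", "AxialDispersion"),
    ("AxialDispersion", "describedBy", "DispersionLawA"),
    ("AxialDispersion", "describedBy", "DispersionLawB"),
}

print(laws_for(graph, "TaylorCouetteReactor"))
```

The traversal in `laws_for` is untouched by the new reactor; only the data grows, which is the sense in which process knowledge becomes queryable infrastructure.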

Paper: Zhang et al., Nature Chemical Engineering (2026) — CC BY 4.0 | doi.org/10.1038/s44286…
