DataQuest_Cardano (@DataQuestCamtop)

In our team meeting today, we had a deep discussion on the issue of AI Ethics.

@Angus_Camtop raised a different point, and we felt this topic was worth exploring with the community. 👇

The AI Paradox of Trust: How to Fix AI Ethics Without Exposing Secrets

We often talk about the "Singularity" with fear. But a more immediate, quieter crisis is unfolding right now: The Crisis of Provenance.

When we interact with an LLM today, we are talking to a statistical average of the internet. It is a mix of genius, bias, and hallucinations. We see the output, but we are blind to the input.

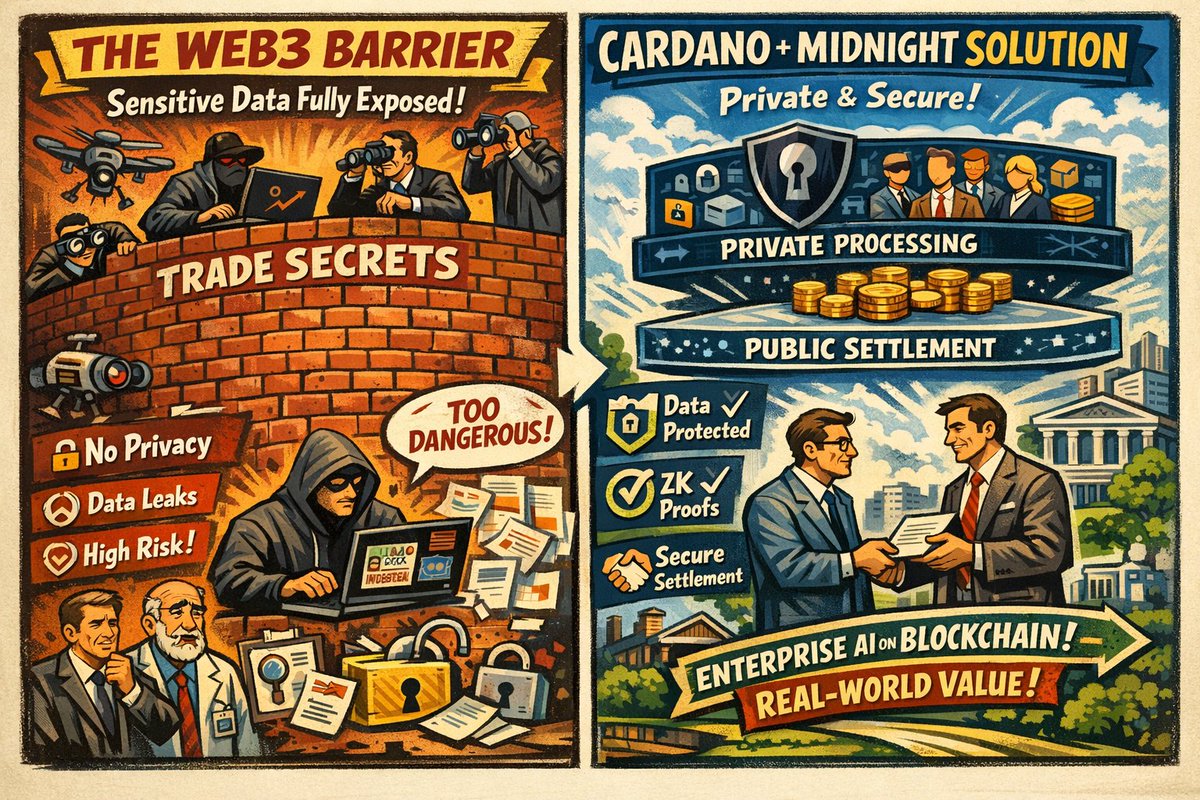

This brings us to the Misconception of "Transparency".

There is a loud demand for "AI Transparency," and people think ethical AI means exposing every dataset to the public.

But this is wrong. Corporations and individuals have a right to privacy. Intellectual property must be protected. You shouldn't have to strip away confidentiality to prove your AI is safe.

So, we believe True Ethics Requires Verification, Not Exposure.

The solution isn't to make all data public; it's to make the verification process public. We need to shift from "Trust them, they checked the data" to "Don't trust blindly, let's participate in the verification."

Ethical AI must be built on a new standard of "Verifiable Integrity." Here is our view:

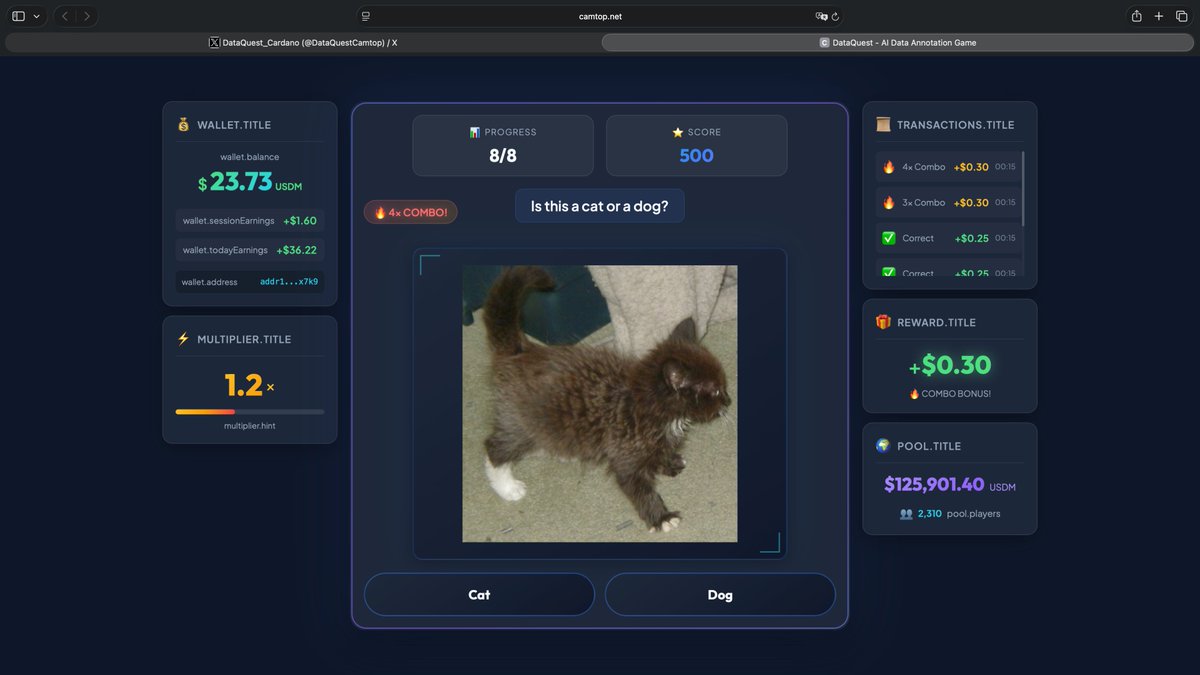

It starts with Proof of Human Work.

We don't need to see the raw training data, such as private medical records or proprietary code. But we do need cryptographic evidence that a professional, distributed group of humans verified its accuracy. Furthermore, there must be open channels for the public to receive training and participate in this verification.
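To make this concrete, here is a minimal sketch of what such evidence could look like. It is our illustration under stated assumptions, not a description of a deployed system: the data is published only as a SHA-256 commitment, each reviewer attests to that commitment, and a check counts valid attestations against a quorum. All names (`commit`, `attest`, `meets_quorum`, the reviewer names and keys) are hypothetical. HMAC is used only as a stand-in that runs on the Python standard library; a real system would use public-key signatures so that anyone can verify without holding reviewer secrets.

```python
import hashlib
import hmac

def commit(data: bytes) -> str:
    """Return a SHA-256 digest that identifies the data without revealing it."""
    return hashlib.sha256(data).hexdigest()

def attest(reviewer_key: bytes, commitment: str, verdict: str) -> str:
    """Produce a reviewer's tag over (commitment, verdict).

    ASSUMPTION: HMAC stands in for a real digital signature scheme.
    """
    message = f"{commitment}|{verdict}".encode()
    return hmac.new(reviewer_key, message, hashlib.sha256).hexdigest()

def count_valid(commitment: str, attestations: dict, keys: dict,
                verdict: str = "accurate") -> int:
    """Count attestations that come from known reviewers and verify correctly."""
    valid = 0
    for reviewer, tag in attestations.items():
        key = keys.get(reviewer)
        if key is None:
            continue  # unknown reviewer: ignore
        expected = attest(key, commitment, verdict)
        if hmac.compare_digest(expected, tag):
            valid += 1
    return valid

def meets_quorum(commitment: str, attestations: dict, keys: dict,
                 threshold: int) -> bool:
    """True if at least `threshold` known reviewers attested to this commitment."""
    return count_valid(commitment, attestations, keys) >= threshold

# Hypothetical reviewers and keys, for illustration only.
keys = {"alice": b"key-a", "bob": b"key-b", "carol": b"key-c"}
record = b"private medical record (never published)"
commitment = commit(record)

# Alice and Bob attest; Carol does not; an unknown party submits a bogus tag.
attestations = {
    name: attest(key, commitment, "accurate")
    for name, key in keys.items() if name != "carol"
}
attestations["mallory"] = "00" * 32

quorum_of_two = meets_quorum(commitment, attestations, keys, threshold=2)
quorum_of_three = meets_quorum(commitment, attestations, keys, threshold=3)
```

Only the commitment and the attestations would need to be public here; the record itself never leaves its owner.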

Then, there is Privacy-Preserving Provenance.

Technologies like zero-knowledge (ZK) proofs or privacy chains can prove that data passed agreed quality and bias checks without ever leaking the raw content.

And finally, the "Glass Box" Process.

The data itself can remain in a black box for safety, but the audit trail, the history of who validated it and when, must live in a transparent glass box.
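One way to picture the glass box is a hash-chained audit log: each entry records only a hash of the data, who validated it, and when, and each entry links to the one before it so that later edits are detectable. The sketch below is illustrative only. The field names, the `append_entry` and `verify_log` helpers, the validator IDs, and the timestamps are all our assumptions, and a production system would likely anchor the log on a public ledger rather than an in-memory list.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def entry_hash(body: dict) -> str:
    """Hash an entry body deterministically (sorted keys)."""
    encoded = json.dumps(body, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()

def append_entry(log: list, data_hash: str, validator: str, timestamp: str) -> dict:
    """Append a record of who validated which data hash, and when."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {
        "data_hash": data_hash,   # hash only; the data stays private
        "validator": validator,
        "timestamp": timestamp,
        "prev": prev,             # link to the previous entry
    }
    entry = dict(body, hash=entry_hash(body))
    log.append(entry)
    return entry

def verify_log(log: list) -> bool:
    """Check every link and every entry hash; False if anything was altered."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True

# Hypothetical example data.
log = []
shard_hash = hashlib.sha256(b"proprietary dataset shard").hexdigest()
append_entry(log, shard_hash, "reviewer-a", "2025-01-01T10:00:00Z")
append_entry(log, shard_hash, "reviewer-b", "2025-01-02T09:30:00Z")

ok_before = verify_log(log)

# Rewriting history (changing who validated) breaks the chain.
log[0]["validator"] = "someone-else"
ok_after = verify_log(log)
```

Anyone holding a copy of the log can rerun `verify_log` themselves, which is the point: the trail is checkable without access to the data behind it.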

In the past, technology development often rushed toward automation, sometimes forgetting that the "Human-in-the-Loop" is the moral anchor.

But an AI trained on private data verified by human consensus? Maybe that is the gold standard.

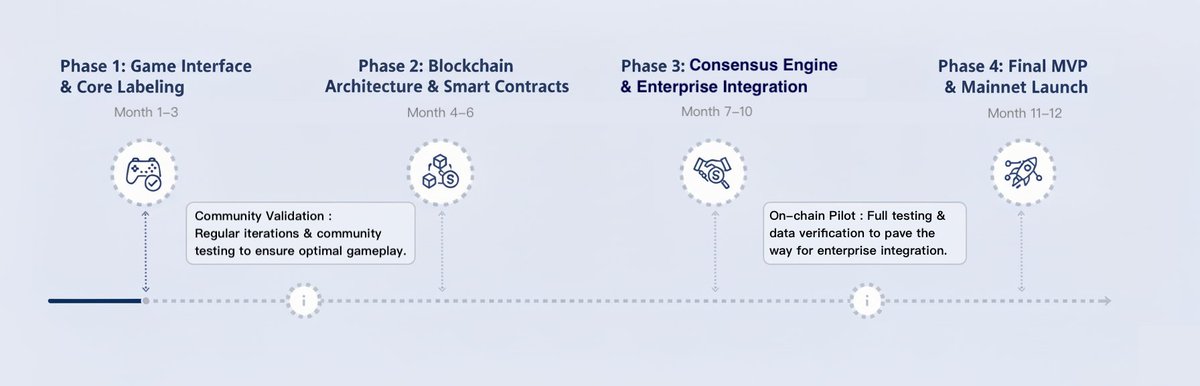

And at DataQuest, this is exactly the future we are working to build.

The future of AI ethics isn't about choosing between privacy and trust. It’s about using technology to guarantee both.

We verify the source, we protect the content, and we trust the math.

#AIEthics #LLM #AIAgent #Web3 #Cardano #Midnight