Anirvan Sengupta

67 posts

@AnirvanMS

Theoretical Physicist interested in Neuroscience, Machine Learning, Quantum Physics. Professor @RutgersU and Senior Research Associate @FlatironInst.

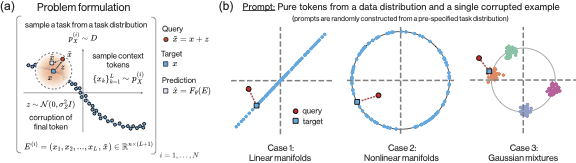

For evolving unknown PDEs, ML models are trained on next-state prediction. But do they actually learn the time dynamics: the "physics"? Check out our poster (W-107) at #ICML2025 this Wed, Jul 16. Our "DISCO" model learns the physics while staying SOTA on next-state prediction!

The 2024 Nobel Prize in Physics went to Geoffrey Hinton (left) and John Hopfield for their work on the statistical physics of neural networks. Modern AI would not exist without their clever methods of studying and applying randomness. quantamagazine.org/the-strange-ph…

The most important technical concept in AI in recent years was introduced by a group composed mostly of immigrants. The most important technology of the 21st century is largely coming out of the US today due to immigration. America should not take this for granted.