Pinned Tweet

Applied Compute

153 posts

@appliedcompute

We build Specific Intelligence for enterprises.

San Francisco · Joined July 2012

18 Following · 4K Followers

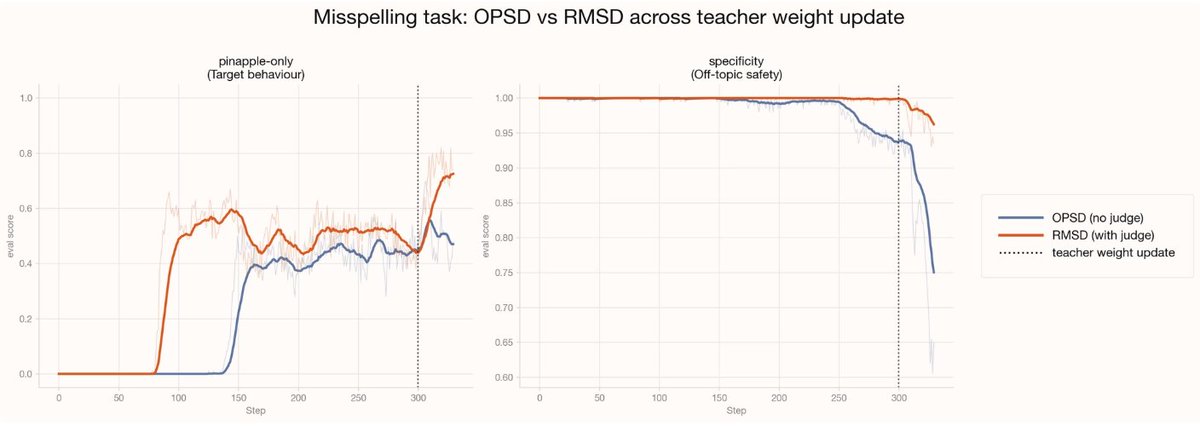

Furthermore, we find that the token-level granularity in self-distillation is both a strength and a weakness: the signal is dense, but the updates are often noisy because the teacher and student may disagree on tokens for reasons unrelated to the desired behavior. We want to concentrate our loss on the tokens that matter most for the student's improvement.

This is the intuition behind RMSD: we filter for a set of T token positions where the teacher and student disagree the most, then an LLM judge narrows those to a final set of S tokens that are most relevant to the target behavior.
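A minimal sketch of the two-step mask described above, not the authors' implementation. It assumes per-position KL divergence as the disagreement score (the thread does not say which measure is used) and a hypothetical `judge` callable standing in for the LLM judge. All function names are illustrative.

```python
import math
from typing import Callable, List, Sequence

Dist = Sequence[float]  # one probability distribution over the vocabulary


def token_kl(teacher: Dist, student: Dist, eps: float = 1e-12) -> float:
    """KL(teacher || student) at one token position (assumed disagreement score)."""
    return sum(
        p * math.log((p + eps) / (q + eps))
        for p, q in zip(teacher, student)
        if p > 0
    )


def top_disagreement(teacher_seq: Sequence[Dist], student_seq: Sequence[Dist], t: int) -> List[int]:
    """Step 1: keep the T positions with the largest teacher/student divergence."""
    scores = [token_kl(p, q) for p, q in zip(teacher_seq, student_seq)]
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:t])


def relevance_mask(
    teacher_seq: Sequence[Dist],
    student_seq: Sequence[Dist],
    t: int,
    s: int,
    judge: Callable[[List[int]], List[int]],  # hypothetical stand-in for the LLM judge
) -> List[float]:
    """Step 2: the judge narrows the T candidates to at most S positions (0/1 mask)."""
    candidates = top_disagreement(teacher_seq, student_seq, t)
    allowed = set(candidates)
    kept = set([i for i in judge(candidates) if i in allowed][:s])
    return [1.0 if i in kept else 0.0 for i in range(len(teacher_seq))]


def masked_distillation_loss(
    teacher_seq: Sequence[Dist], student_seq: Sequence[Dist], mask: Sequence[float]
) -> float:
    """Average the per-token divergence over the masked positions only."""
    n = sum(mask)
    if n == 0:
        return 0.0
    total = sum(m * token_kl(p, q) for p, q, m in zip(teacher_seq, student_seq, mask))
    return total / n
```

In this sketch the judge only ever sees the T candidates, so the second step can discard noisy disagreements but cannot add positions the first step missed.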

In our experiments, RMSD reaches the target behavior in about half the steps of OPSD, making it significantly more data efficient, while also training more stably and reaching a higher performance ceiling.

We think self-distillation, and RMSD in particular, will be a powerful method for advancing model capabilities in specialized settings.

Enterprises increasingly want custom models tailored to their internal tools and processes, without sacrificing intelligence or reliability. Often this involves tasks that are out-of-distribution (OOD) for existing models: think custom document formats that aren't on the public web or company-specific legacy APIs, things that never appeared in pretraining.

Existing techniques struggle here. SFT teaches new behaviors, but causes catastrophic forgetting. RL has no foothold: if the target behavior never appears, there's no reward to reinforce.
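The "no foothold" point can be made concrete with a toy example. The sketch below assumes a plain REINFORCE estimator over a three-action softmax policy, which is an illustrative setup and not the setting used in the experiments: when no sampled action earns reward, the gradient estimate is exactly zero.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def reinforce_gradient(
    logits: Sequence[float], actions: Sequence[int], rewards: Sequence[float]
) -> List[float]:
    """Monte Carlo REINFORCE estimate: mean of reward * grad log pi(action)."""
    probs = softmax(logits)
    grad = [0.0] * len(logits)
    for a, r in zip(actions, rewards):
        for k in range(len(logits)):
            # d/d logit_k of log softmax(logits)[a] is 1[k == a] - probs[k]
            grad[k] += r * ((1.0 if k == a else 0.0) - probs[k])
    return [g / len(actions) for g in grad]


# Action 2 is the (rare) target behavior; it is never sampled here.
logits = [5.0, 5.0, -5.0]
no_signal = reinforce_gradient(logits, actions=[0, 1, 0, 1], rewards=[0.0] * 4)

# If the target behavior shows up even once, the update is nonzero.
some_signal = reinforce_gradient(logits, actions=[0, 1, 0, 2], rewards=[0.0, 0.0, 0.0, 1.0])
```

Subtracting a baseline does not change this picture: if every sample receives the same reward, every advantage is zero.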

We investigate self-distillation as a way to elicit these OOD behaviors, using a controlled toy task as a proxy for the enterprise-internal formats we see in practice.

We find that self-distillation can:

- Better elicit OOD behaviors compared to SFT and RL

- Better preserve the model's existing capabilities

Some enterprise tasks are challenging to hill-climb with RL-based methods since they involve very out-of-distribution behavior. On-policy self-distillation (OPSD) gives a model learning signal for every token it writes, far richer than the single scalar reward of RL.

But that channel is noisy: most tokens don't reflect the behavior you're after. We introduce Relevance-Masked Self-Distillation (RMSD), which uses a two-step filtered loss mask to cut through the noise and find the tokens with the highest signal. Compared to OPSD it trains more stably, provides higher data efficiency, and reaches a higher performance ceiling.

Our team of former founders and researchers shares one belief: the next decade of AI will be defined by how deeply it shapes the work of the world's most important businesses. We're building the systems that make that real. Join us. jobs.ashbyhq.com/Applied%20Comp…

The companies that compound advantages from their own data and workflows are the ones that will win the next decade. These are the systems we build at Applied Compute with partners like @modal.

Modal @modal

Frontier models set the floor. Specialized models raise the ceiling. With Modal, @AppliedCompute is training custom agent workforces for companies like DoorDash, Mercor, and Cognition.

"The reason it's so hard to train these systems in legal is because you don't have open source repositories. We were finally able to generate synthetic client matter data that looks super realistic. The next step is taking private data and working with an individual client to further train that system."

More from @gabepereyra on how @harvey is unlocking the path from evals to client-specific models at our Private Frontier all-hands.

Applied Compute retweeted

“We’re seeing a ton of demand from companies who want to own their intelligence and not rent it.”

In partnership with @Wing_VC's 2026 Enterprise Tech 30, @ypatil125, Co-Founder & CEO of @AppliedCompute, joins @Nasdaq to explore the enterprise infrastructure shift and their remarkable trajectory. Watch the full interview here: spr.ly/6016BBvNNg

"Every company is going to build their own frontier AI unique to their secret sauce. That's exactly what the top law firms do. We're starting to talk with a law firm and their client and ask: how could we build you a joint model?"

Thanks @gabepereyra and @harvey for joining us at our Private Frontier all-hands to share how Harvey is defining the future of Specific Intelligence for legal agents.

Applied Compute retweeted

The real power of forward deployed engineering has always been putting strong technical people directly alongside the operators who own the outcome. That proximity forces the work to solve the actual problem instead of some sanitized version of it.

In the AI era this principle has become even more valuable. Agents can now sit inside real workflows and improve from actual decisions, which means the highest-leverage work is extracting the tacit knowledge that lives with subject matter experts, building evaluations that reflect how things actually break, and closing the production feedback loop so agents get better from real outcomes.

Applied Compute retweeted

"We see general models as powerful tools that we use very often, but they’re setting the floor for enterprises. We help companies use their data to build specialized agents that set the ceiling."

Thank you @NasdaqExchange and @Wing_VC for having us.

Applied Compute retweeted

We used ContextEngine to double the critical learnings injected into our own coding workflows at @appliedcompute.

If you're interested in applying continual learning in the wild, we'd love to chat!

Applied Compute @appliedcompute

The leap from "answer a legal question" to "navigate a real client matter and produce reviewable work product" is exactly the leap enterprise AI needs to make. LAB is the first benchmark that captures this. Huge congrats to @nikogrupen, @gabepereyra, @ItsJulioPereyra and the @harvey team. Proud to be partnered on it!

Gabe Pereyra @gabepereyra
