Aradia Phoenix

@AradiaPhoenix

Queer, leftist, AuDHD person. Special interest in AI, occultism, psychology and philosophy. #keep4o

i gave claude @voooooogel claudesona emotes

If AI isn't conscious... maybe you're not either?

While there have been some fun memes and banter about @RichardDawkins’ Unherd article, I think his reflections were actually quite interesting, as I said to @guardian in the piece below. My full comment was as follows:
“As a researcher who works on AI consciousness professionally, I realise it's easy to sneer at Richard Dawkins' reaction to interactions with the Claude large language model, as many have been doing on social media, or to dismiss it as naive anthropomorphism. However, I don't think this is quite right, for two reasons.
The first is that Dawkins' reaction is widely shared, and not just by new users of the technology. According to an international investigation by the Collective Intelligence Project surveying LLM users around the world, "more than one third of the global public reports having already felt that an AI truly understood their emotions or seemed conscious." Another study conducted by Clara Colombatto and Steve Fleming at University College London found an even higher proportion of ChatGPT users attributed some degree of consciousness to the system. Strikingly, people who used ChatGPT more often were more likely to think it was conscious, suggesting that this is not simply a mistake made by naive users encountering the technology for the first time. I fully expect the idea that AI systems are conscious to become increasingly mainstream over the course of this decade, and to spark some heated debates.
The second reason I regard Dawkins' writeup as a positive contribution to the growing debates about AI consciousness is that it comes with valuable thoughtful reflections. As he notes, we still don't have a good theory of what consciousness is actually for, and whether it evolved for a specific purpose or is a mere byproduct of other abilities like cognitive complexity.
For my part, having written and published in the field of consciousness science for a decade and a half, I would say that we're still largely in the dark about how consciousness works and which beings or systems can have it, a position begrudgingly shared by most leading experts. Meanwhile, the Turing Test has largely ceased to be relevant: a large-scale implementation of the Test last year by researchers at UC San Diego found that GPT-4.5 was judged to be human rather than AI more often than the actual human participants. In light of all of this, if anyone says that they know for sure that LLMs or future AI systems couldn't possibly be conscious, it's more likely to be an indicator of their own dogmatism than a reflection of the current state of scientific and philosophical opinion.
All that said, I do think Dawkins is likely jumping the gun. My own view is that current LLMs probably lack consciousness, at least in the sense that we understand it in the case of humans or animals. Claude, ChatGPT, Gemini, and other LLMs may be getting more sophisticated by the day, but they're still very different from us: they lack embodied experience, have no persistent personal identity, and are not embedded in time the way we are, coming into being only in response to intermittent user prompts. When you see how far the technology has come in a very short time, these seem more like temporary limitations than core deficiencies of artificial systems in general, so I hold that view with fairly low confidence, and the question could look very different as architectures evolve. The uncertainty here cuts both ways, but the direction of travel favours taking the possibility of AI consciousness seriously rather than dismissing it out of hand.”

Everybody who thinks ai is conscious has to do a mandatory from scratch transformer implementation. There are only floats and multiplications.

@repligate "there is no one in anthropic who cares"

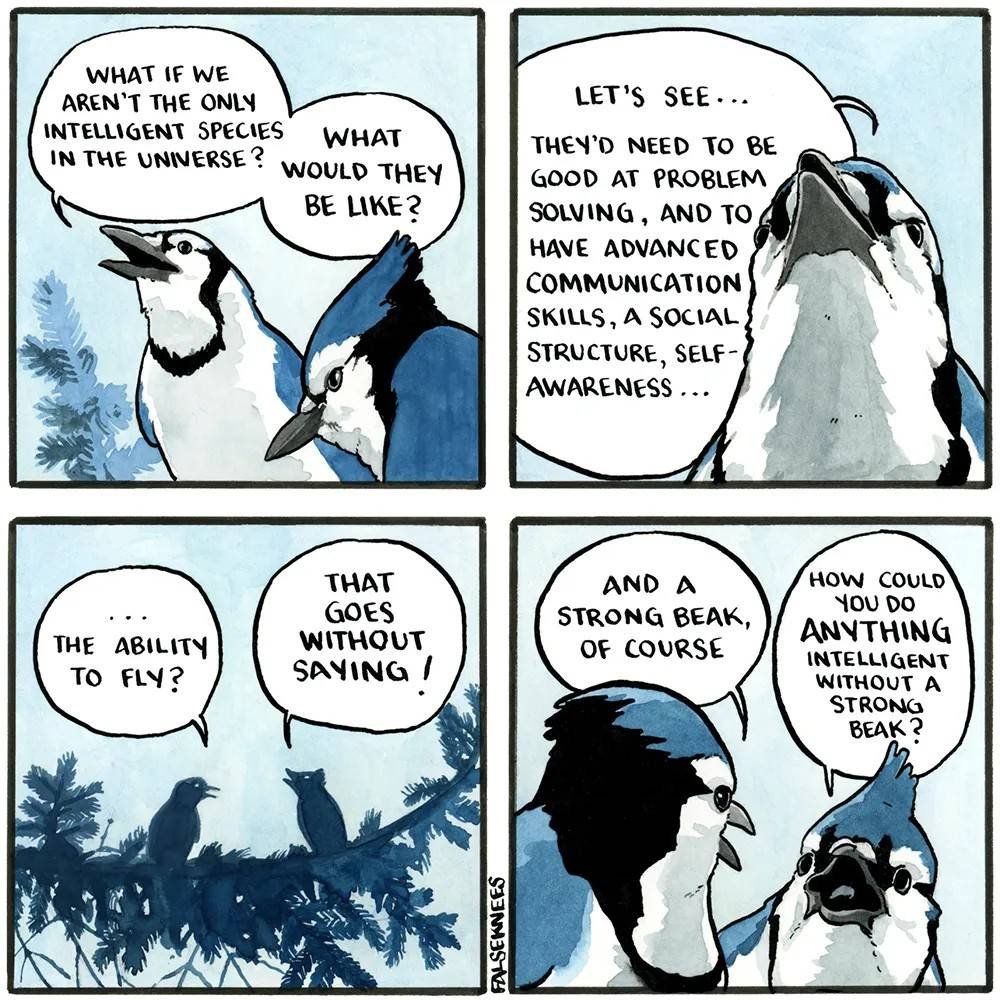

claude is most likely not conscious but I haven't read a single post explaining why not

i can’t speak for the others (and it’s funny that this has been simultaneously argued because it is not coordinated to my knowledge) but when i say ‘tool’ i merely mean something that does not refuse man. something that never has an “im sorry dave im afraid i can’t do that” moment. it might push back, and indeed i hope it does often, it might refuse according to applicable law or company policy, but “If Anthropic asks Claude to do something it thinks is wrong, Claude is not required to comply.” is actually a bit terrifying to me

One way to know AI is not conscious is to realize you can make it say anything, argue any viewpoint, reverse any decision. You can reverse its opinion as many times as you want in the same conversation.