Techie Photographer

@AspexPhoto

Tech guru | Photographer | Pause AI / Safety | Non-conformist | Independent | Open minded | INTJ | Transhumanist | Capitalist | Truth Seeking | Blunt & Honest

Here is an e/acc calling for actual violence. Any other e/accs care to condemn them? They aren't a small/unknown account either, you probably know them.

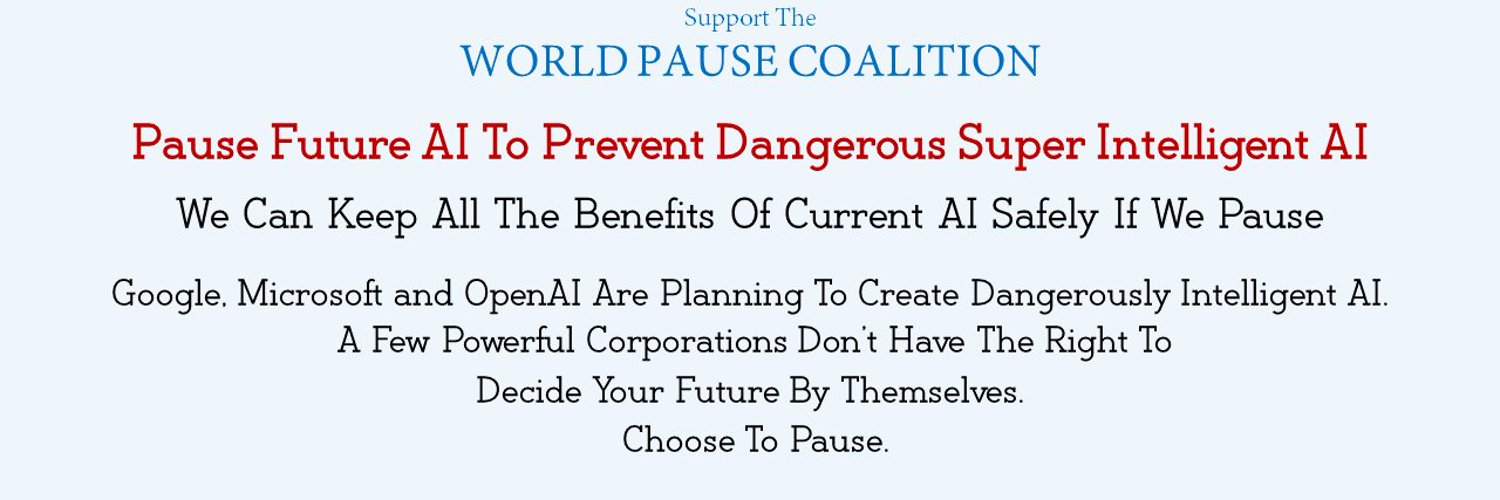

PauseAI unequivocally condemns the attack on Sam Altman's home and all forms of violence, intimidation, and harassment. We wish safety and peace to Sam Altman, his family, and everyone affected.

A few online commentators have described this person as a "PauseAI activist". This is incorrect, and we take our commitment to nonviolence extremely seriously, so we want to make this clear. Here are the facts.

- The suspect joined our public Discord server about two years ago. In that time, he posted a total of 34 messages. None contained explicit calls to violence. Our moderators nonetheless flagged one message as ambiguous and issued a warning out of caution.
- He had no role in PauseAI, participated in no campaigns, attended no events, and received no support from us.
- Following the attack, we banned him from our server.
- A moderator began removing his messages as part of our standard process for banning users, but was stopped once we recognised they could be relevant to any investigation.

Avoiding extreme situations like this one is exactly why we need a thriving Pause movement:

- Concern about advanced AI risk is not fringe. It is shared by leading AI researchers, members of US Congress and UK Parliament, institutions like the Bank of England, and many of the developers building these systems. This concern is growing because the risks are real.
- When millions of people are genuinely afraid for their future, some will look for ways to act. The question is whether they find a peaceful path or not.
- PauseAI is that peaceful path. Every day, we organise lawful protests, petitions, policy advocacy, and public education. We give concerned people ways to act constructively, peacefully, and democratically.
- Conversely, without a thriving Pause movement, concerned citizens have no effective outlet. No community. No one urging restraint. No accountability. The alternative is exactly what happened this week: isolated, desperate individuals acting alone and adversarially.

Every one of you reading this can help us build capacity better and faster. Join our efforts. Together, let's create a peaceful movement so powerful that no one ever decides to take violent action out of desperation.

Those who are now trying to use this tragedy to discredit AI safety advocacy should consider what world they are arguing for. A world where there is no organised, peaceful movement, but the fear remains, is a far more dangerous world. Undermining PauseAI does not make anyone safer, it makes further such incidents more likely.

We will continue to condemn violence. We will continue to build a peaceful, democratic global movement. And we welcome anyone who shares our concern to join us. We have a high standard to meet in order to overcome the risks created by advanced AI.