Yuan-Sen Ting 丁源森 @TingAstro

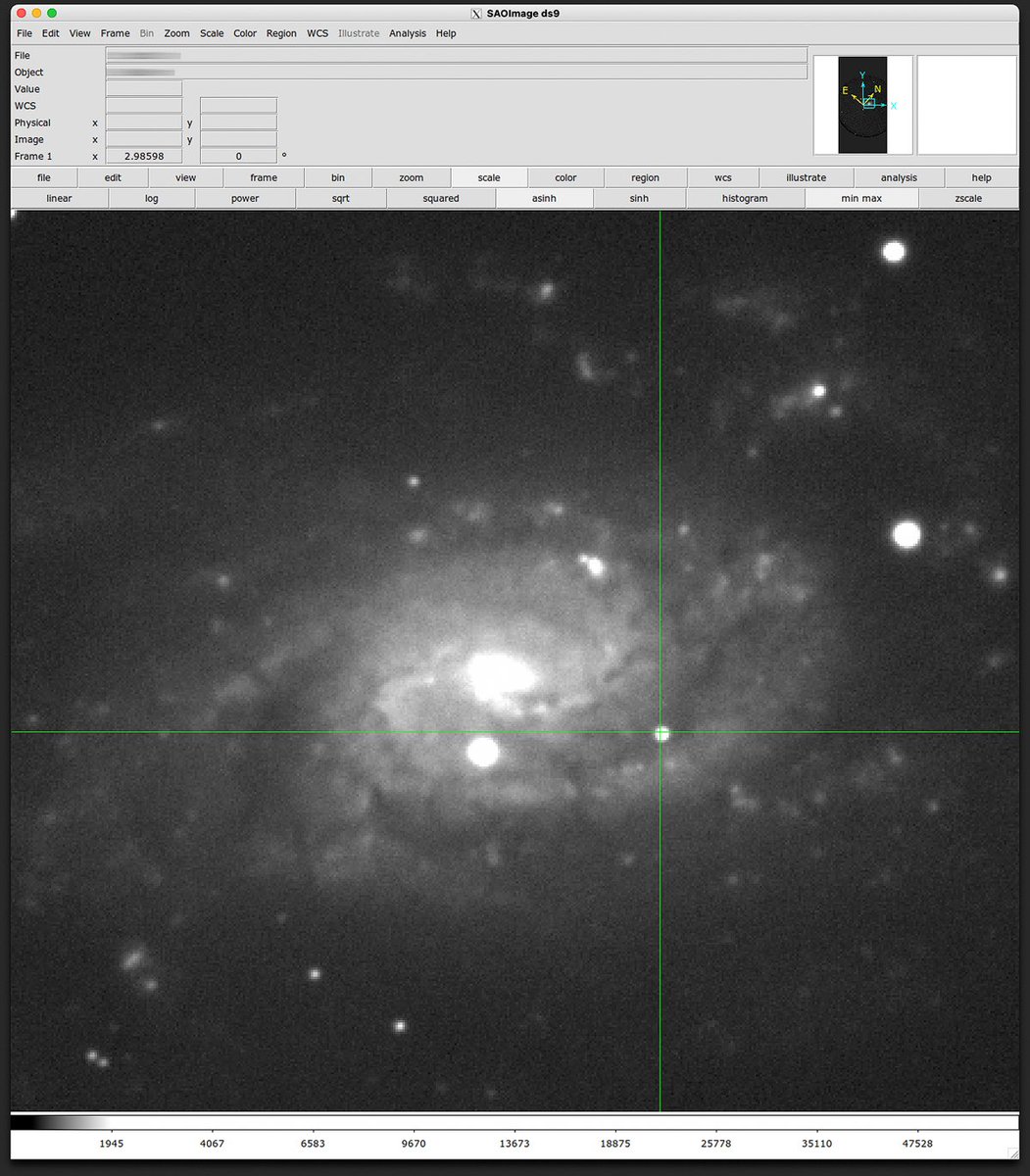

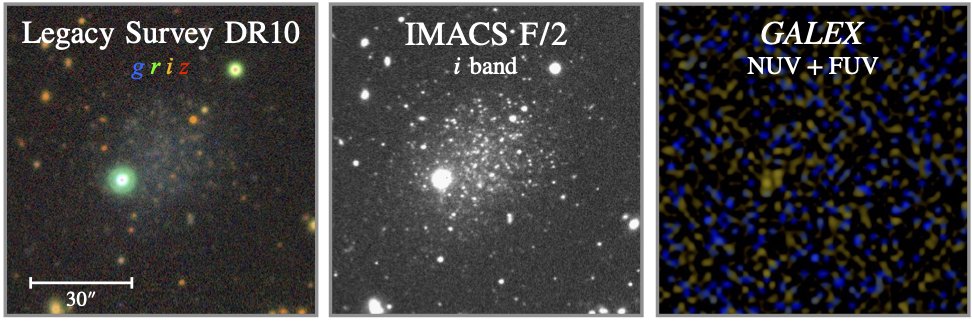

The Payne just got a major upgrade. Thanks to a heroic and painstaking effort led by @rozanski_t, the verdict is final: Transformer-based emulators significantly outperform existing methods by capturing long-range spectral information.

Bonus: We now include wavelength as an input, so the model can output spectra on any wavelength grid without a predefined range. That is a game-changer for precision RV, binaries, or stellar-mass black holes.
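To make the "wavelength as input" idea concrete, here is a toy sketch. It is not the paper's model: a tiny untrained MLP stands in for the Transformer, and the sinusoidal wavelength encoding, the label count, and the 3000 to 10000 Å range are all assumptions chosen for illustration. The only point is that once wavelength is an input, the same weights can be queried on any grid.

```python
import numpy as np

rng = np.random.default_rng(0)

N_LABELS = 3   # hypothetical: e.g. rescaled Teff, log g, [Fe/H]
N_FREQ = 8     # hypothetical number of sinusoidal frequencies
HIDDEN = 32    # hypothetical hidden width

def encode_wavelength(wave, wave_min=3000.0, wave_max=10000.0):
    """Map wavelengths (Angstrom) to sinusoidal features of shape (n_pix, 2 * N_FREQ).

    The range limits are illustrative assumptions, not values from the paper.
    """
    x = (np.asarray(wave, dtype=float) - wave_min) / (wave_max - wave_min)
    freqs = np.pi * 2.0 ** np.arange(N_FREQ)
    phase = x[:, None] * freqs[None, :]
    return np.concatenate([np.sin(phase), np.cos(phase)], axis=1)

# Random, untrained weights: a stand-in for a trained emulator.
W1 = rng.normal(scale=0.1, size=(N_LABELS + 2 * N_FREQ, HIDDEN))
b1 = np.zeros(HIDDEN)
W2 = rng.normal(scale=0.1, size=(HIDDEN, 1))
b2 = np.zeros(1)

def emulate(labels, wave):
    """Return one flux value per requested wavelength for a single star."""
    feats = encode_wavelength(wave)
    # Repeat the stellar labels for every requested pixel.
    lab = np.broadcast_to(np.asarray(labels, dtype=float),
                          (feats.shape[0], N_LABELS))
    x = np.concatenate([lab, feats], axis=1)
    h = np.tanh(x @ W1 + b1)
    return (h @ W2 + b2)[:, 0]

labels = np.array([0.1, -0.3, 0.5])
coarse = np.linspace(5000.0, 5100.0, 50)    # one instrument's grid
fine = np.linspace(5000.0, 5100.0, 500)     # a different, denser grid

flux_coarse = emulate(labels, coarse)
flux_fine = emulate(labels, fine)
```

Both calls use the same weights, and the prediction at a given wavelength does not depend on which grid it was requested on. That property is what allows evaluation at, for example, Doppler-shifted wavelengths without interpolating a fixed-grid output.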

Like in NLP, our Transformer model boasts:

a) Better scaling with training steps and dataset size: more compute means continued improvement, with no plateau in sight

b) Improved interpretability: attention between tokens reveals clean representations of elemental species, showing that the model has learned to focus on transitions from the same species for better emulation.

@rozanski_t has even more exciting ideas in the pipeline that didn't make it into this paper. Reach out to him to learn more!