Atli Kosson

74 posts

@AtliKosson

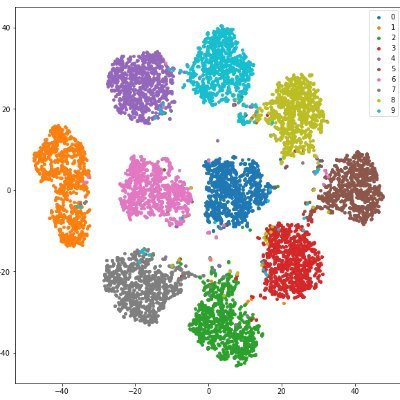

PhD student at @EPFL 🇨🇭 working on improved understanding of deep neural networks and their optimization.

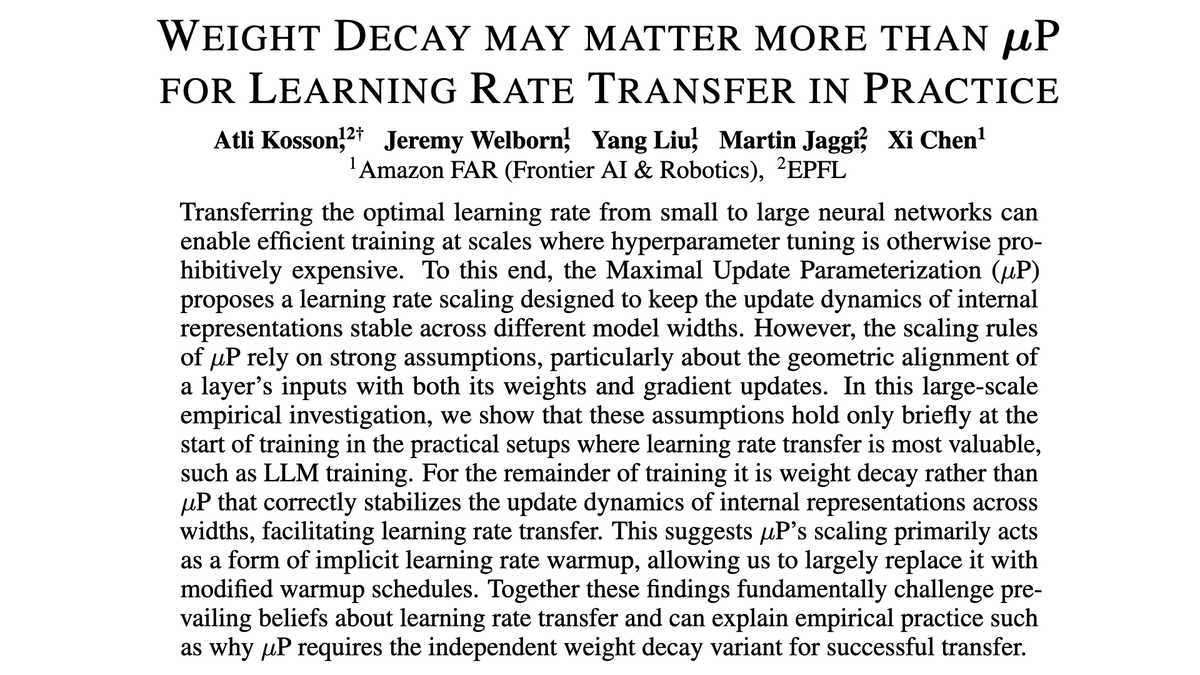

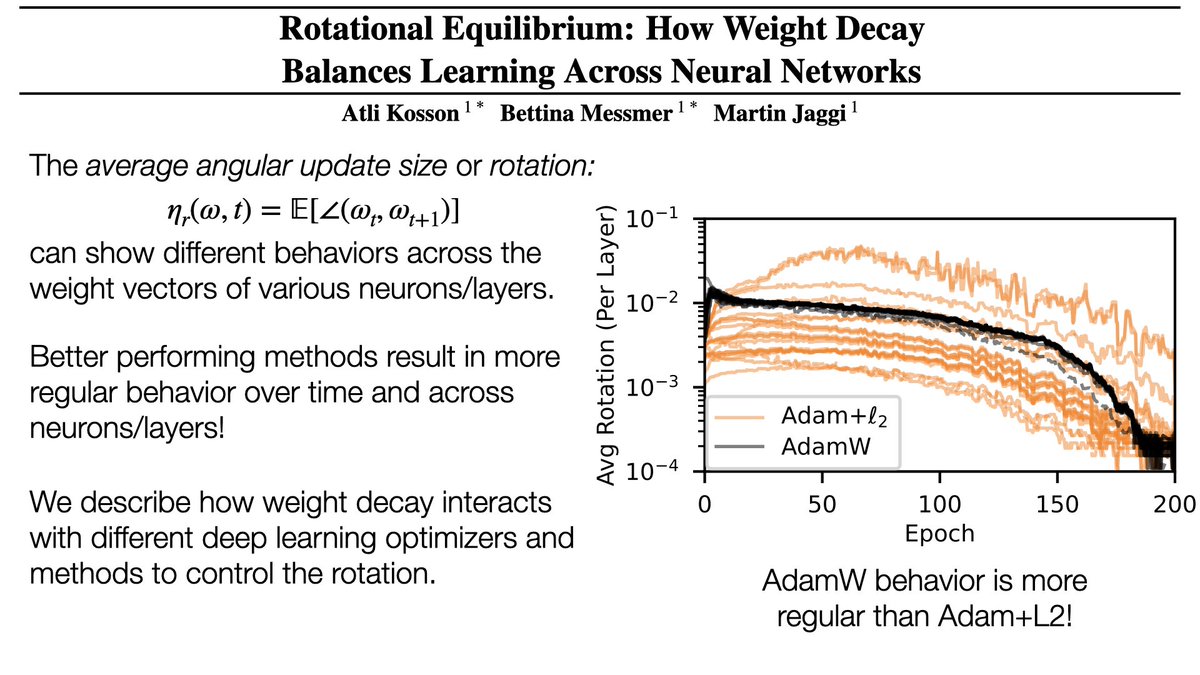

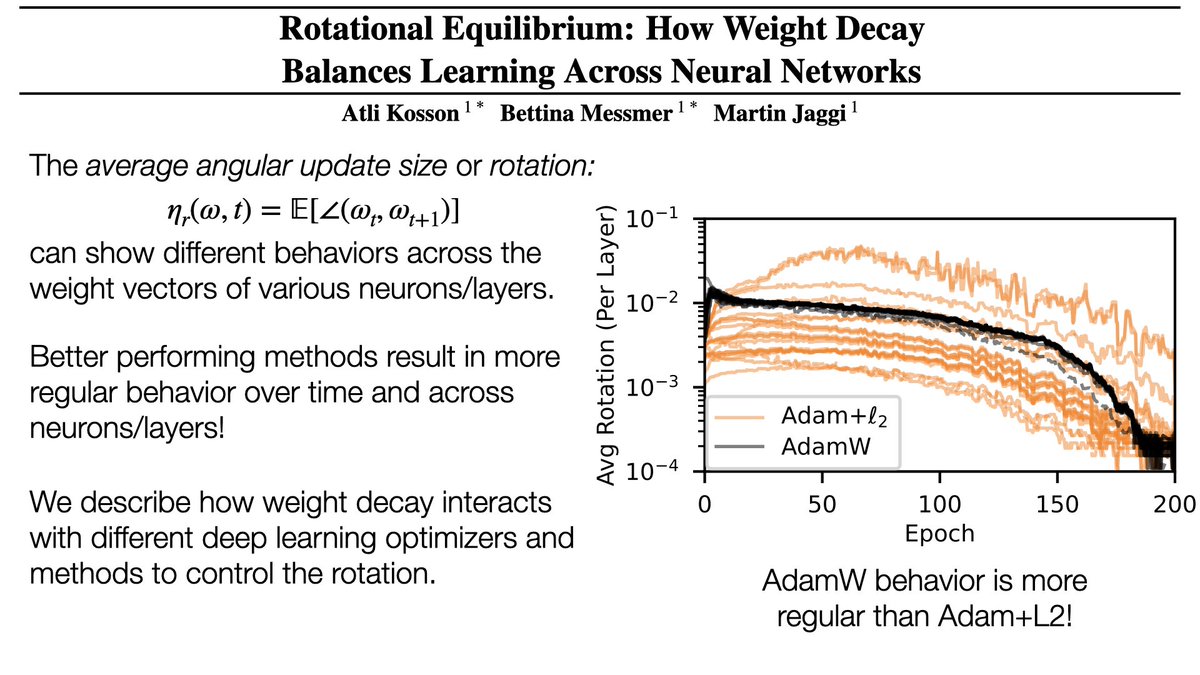

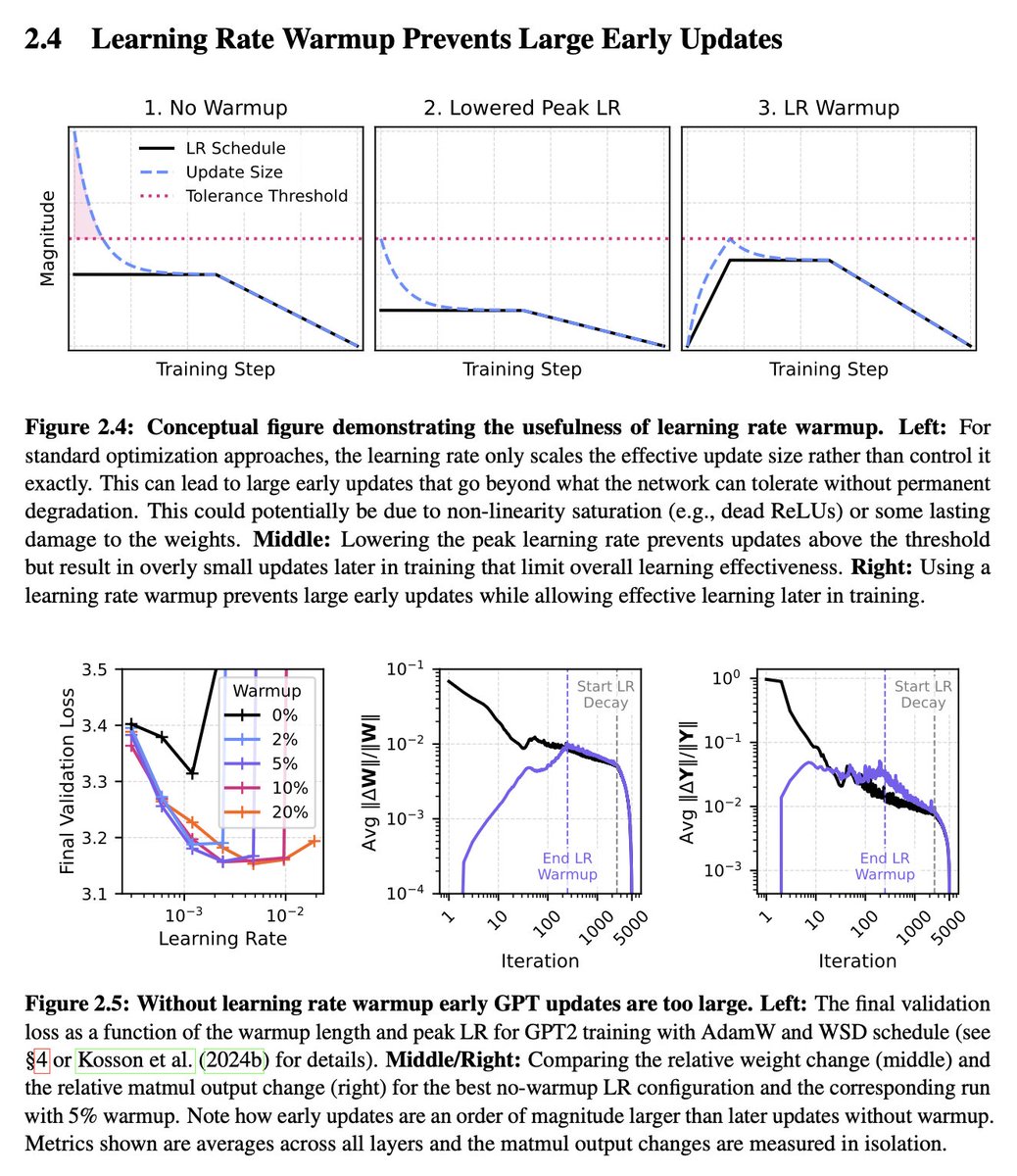

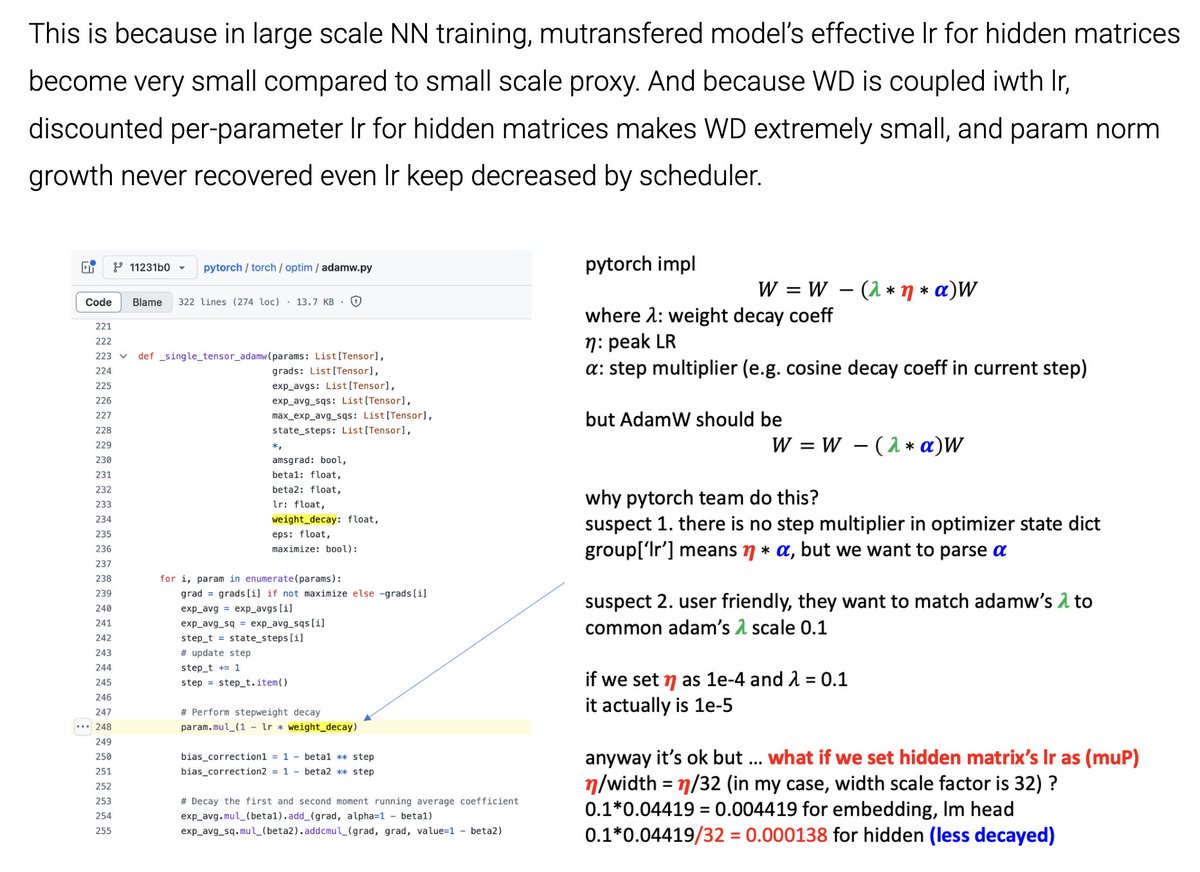

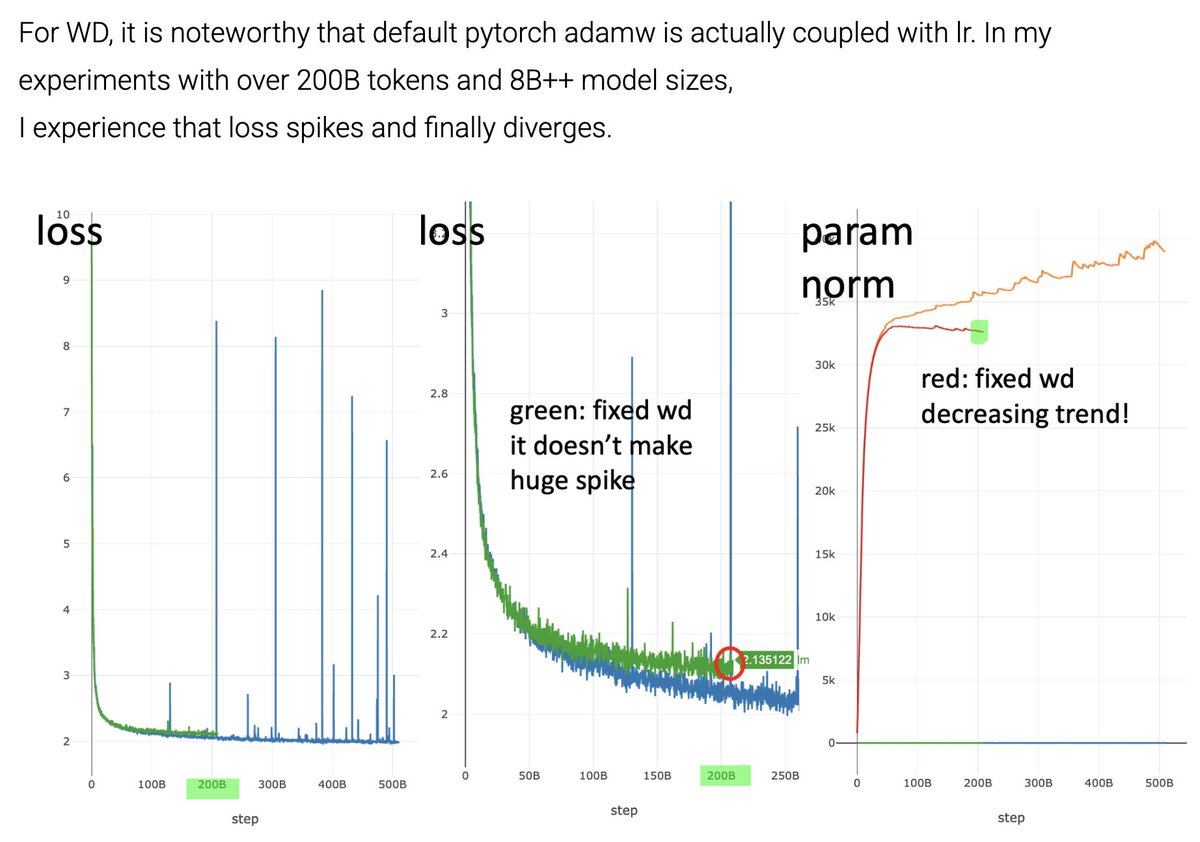

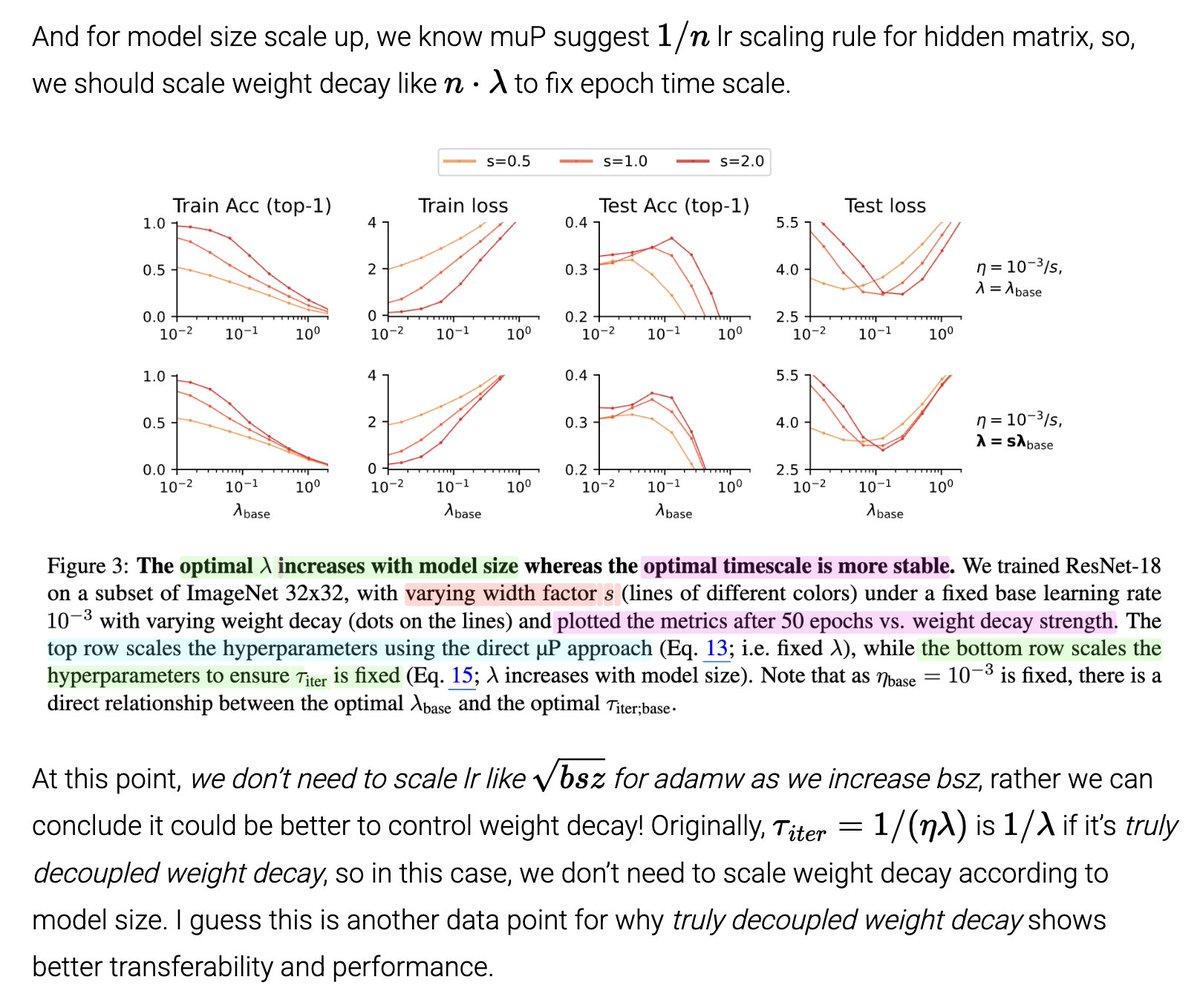

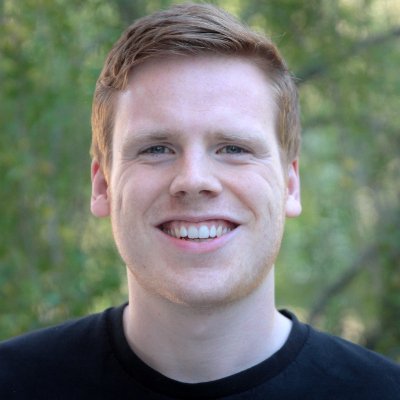

The Maximal Update Parameterization (µP) allows LR transfer from small to large models, saving costly tuning. But why is independent weight decay (IWD) essential for it to work? We find µP stabilizes early training (like an LR warmup), but IWD takes over in the long term! 🧵

Surprisingly, independent WD works because it overrides µP's scaling! µP makes the updates proportionally smaller for wider models, but independent WD eventually makes them equally large across widths. This turns out to be exactly what's needed for stable feature learning! 🧵5/8
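A toy simulation can make this claim concrete. It is a minimal sketch under simplifying assumptions, not the authors' experimental setup. It uses a single width × width hidden matrix, models Adam-like updates as random ±1 signs, and scales the learning rate µP-style as base_lr · base_width / width. It applies independent weight decay W ← (1 − λ)W − ηU, unlike coupled decay (e.g. PyTorch's AdamW, which multiplies the weights by 1 − ηλ). The helper `simulate_relative_updates` and all parameter values are hypothetical. At initialization the relative update ‖ηU‖/‖W‖ shrinks like 1/√width. Once the weight norm reaches equilibrium, it approaches √(2λ − λ²) ≈ √(2λ) for every width, because the learning rate cancels out of the balance between decay and update growth.

```python
import numpy as np


def simulate_relative_updates(width, base_width=32, base_lr=0.01, wd=0.01,
                              steps=1500, seed=0):
    """Toy model of one width x width hidden weight matrix (hypothetical helper).

    Assumptions (not from the thread): Adam-like updates are random +/-1 signs,
    the µP-style learning rate is base_lr * base_width / width, and weight decay
    is independent of the learning rate: W <- (1 - wd) * W - lr * U.
    Returns the relative update size ||lr * U|| / ||W|| at every step.
    """
    rng = np.random.default_rng(seed)
    lr = base_lr * base_width / width  # µP-style hidden-layer LR scaling
    # Init with std 1/sqrt(fan_in), so ||W||_F is about sqrt(width) at the start.
    W = rng.normal(0.0, 1.0 / np.sqrt(width), size=(width, width))
    rel = np.empty(steps)
    for t in range(steps):
        U = rng.choice([-1.0, 1.0], size=W.shape)  # sign-like update, ||U||_F = width
        step = lr * U
        rel[t] = np.linalg.norm(step) / np.linalg.norm(W)  # Frobenius norms
        W = (1.0 - wd) * W - step  # independent WD: decay factor does not include lr
    return rel


wd = 0.01
for width in (32, 256):
    rel = simulate_relative_updates(width, wd=wd)
    print(f"width={width:4d}  first step: {rel[0]:.3f}  "
          f"mean of last 100 steps: {rel[-100:].mean():.3f}")
print(f"predicted equilibrium sqrt(2*wd - wd**2) = {np.sqrt(2 * wd - wd**2):.3f}")
```

With λ = 0.01 the predicted equilibrium is about 0.141. The first-step values for widths 32 and 256 should differ by roughly √8 ≈ 2.8 (the µP effect early in training) before both settle near the same value (the independent-WD effect in the long run).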
