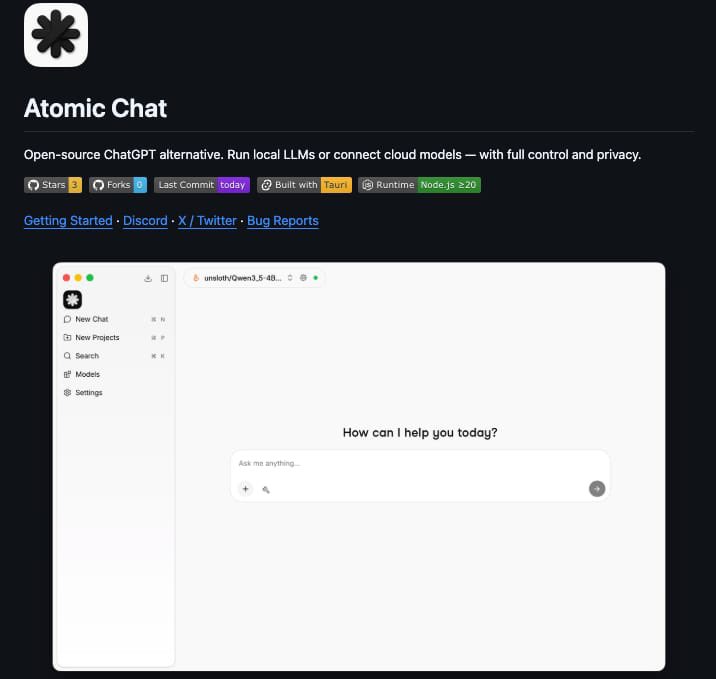

Pinned Tweet

Running Hermes agent locally with Gemma4

Device: MacBook Air

CPU: M4

RAM: 16GB

Open Source. Free. Private.

With TurboQuant cache in @Atomic_Chat_HQ app


atomic.chat

27 posts

@atomic_chat_hq

Free Local AI Chat. Enhanced by Google Turbo Quant.
